A data center consolidation strategy isn't just about tidying up your IT closets anymore. It's a critical business decision that directly impacts your bottom line, security posture, and ability to adapt.

At its core, consolidation is the methodical process of reducing your IT assets and physical server locations. This often means migrating workloads to more efficient environments, whether that’s the cloud or a modernized on-premise platform. The goal is to finally get rid of all that redundant or underutilized infrastructure that naturally builds up as a company grows.

Why Your Data Center Consolidation Strategy Matters Now

The sheer volume of data today is pushing legacy IT infrastructure to its breaking point. Many organizations are juggling a sprawling collection of aging servers spread across multiple facilities. This "server sprawl" is often the unintended consequence of years of mergers, acquisitions, or just organic but unplanned growth, and it creates challenges that go far beyond a messy server room.

Actionable Insight: A retail client was drowning in operational costs across three separate data centers acquired through mergers. Their gear was old, inefficient, and a nightmare to patch. After we helped them build a focused consolidation strategy, they didn't just cut their physical footprint by 60%—they also centralized their security monitoring into a single SIEM, which dramatically improved their ability to detect and respond to threats.

The Modern Pressures Driving Consolidation

The push to consolidate is coming from all sides, fueled by powerful business and technological forces. It's no longer just an IT-led initiative; it's a strategic response to what the market demands.

Here’s a quick look at the main drivers pushing companies toward consolidation.

| Driver | Business Impact | Technical Challenge |
|---|---|---|
| Soaring Costs | High energy bills, expensive real estate, and extensive maintenance drain the IT budget. | Legacy hardware is power-hungry and requires specialized, often costly, support. |
| Demand for Agility | Slow infrastructure deployment stalls innovation and prevents quick responses to market changes. | Siloed, outdated systems can't support modern applications like AI and machine learning. |
| The Shift to Cloud | Businesses need to integrate cloud services without creating more complexity or security gaps. | Deciding which workloads to move to the cloud requires a clear understanding of the existing on-premise estate. |
| Security Risks | A distributed, aging infrastructure has a larger attack surface and is harder to secure and patch. | Inconsistent security policies and monitoring across multiple sites create blind spots for threats. |

These drivers show that consolidation is about more than just saving money. It’s about building a leaner, more secure, and more agile foundation for the business.

One of the biggest factors is the skyrocketing cost of energy and operations. Legacy hardware is notoriously power-hungry. The global data center market was valued at around $347.64 billion and is expected to hit $386.71 billion, with power consumption having jumped more than fivefold since 2005. A smart consolidation plan directly confronts these costs by migrating to more energy-efficient systems.

Then there's the demand for business agility. Modern applications require infrastructure that is flexible and can scale on demand. Old, siloed data centers simply can't provide the speed needed to innovate and compete.

And of course, there's the massive shift to cloud services. As organizations embrace digital platforms, a hybrid model often makes the most sense. Consolidating on-premise resources is usually the first crucial step in developing effective cloud computing strategies, as it forces you to take stock and decide which workloads truly belong in the cloud.

A data center consolidation strategy is not merely an infrastructure project. It's a fundamental shift that realigns your IT environment with your core business objectives, enabling growth, security, and efficiency in an increasingly competitive world.

Ultimately, a well-executed consolidation effort transforms IT from a cost center into a strategic partner for the business. It frees up capital, minimizes risk, and provides the agile foundation you need to chase future goals. In the next sections, we’ll walk through a practical framework for assessing, planning, and executing this vital process.

Laying the Groundwork for a Successful Consolidation

Every great data center consolidation project starts long before you unplug a single server. It begins with data. You can't optimize what you don't measure, and a successful strategy is built on a deep understanding of how your resources are actually being used—not just a simple server count.

This initial audit is, without a doubt, the most critical phase. If you skip it or rush through it, you're flying blind: you risk making costly assumptions, triggering unexpected downtime, or missing the project's goals entirely. Doing it properly means getting your hands dirty and looking at everything, from that ancient piece of hardware humming in the corner to the complex web of application dependencies.

Conducting a Comprehensive Environment Audit

The first real step is to build a complete inventory of every single asset in your data centers. I'm not just talking about a spreadsheet with server names. You need to know their purpose, their performance, and how they all talk to each other. It’s in this phase that most organizations uncover a graveyard of "zombie servers"—machines burning power and taking up rack space but serving no real business function.

Practical Example: A manufacturing company used a combination of tools to get a holistic view. They paired a Data Center Infrastructure Management (DCIM) tool to map their physical assets with an Application Performance Monitoring (APM) solution to understand application dependencies. This combination was powerful because it revealed that their ERP system was communicating with an old, forgotten database server that was no longer in use—a perfect candidate for immediate decommissioning.

During this discovery, you need to zero in on a few key data points:

- Hardware Inventory: Get the details—server models, CPU, RAM, storage, and age. Older hardware is almost always a prime candidate for retirement. It's less energy-efficient and costs a fortune in maintenance.

- Application Dependency Mapping: This is huge. You need to map out how different applications and services communicate. This map is your bible for planning migration waves and preventing a single server move from breaking a critical business process.

- Performance Metrics: Dig into CPU and memory utilization, network traffic, and I/O operations. You'll be surprised how many servers are massively over-provisioned. Peraton, for example, retired over 100 servers during their migration to AWS after an initial assessment showed they simply weren't needed anymore.
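To make the audit data points above a little more concrete, here is a minimal sketch of how utilization figures might be turned into a first-pass triage list. The `ServerMetrics` fields, the server names, and the 5% and 20% thresholds are all illustrative assumptions, not values from any particular DCIM or APM product; tune them to your own baseline.

```python
from dataclasses import dataclass


@dataclass
class ServerMetrics:
    name: str
    avg_cpu_pct: float         # average CPU utilization over the audit window
    avg_mem_pct: float         # average memory utilization over the audit window
    inbound_connections: int   # distinct clients observed talking to this server


def classify(server: ServerMetrics,
             idle_cpu_pct: float = 5.0,
             low_util_pct: float = 20.0) -> str:
    """Label a server using illustrative thresholds (assumptions, not standards)."""
    # No clients and almost no CPU activity: likely serving no business function.
    if server.inbound_connections == 0 and server.avg_cpu_pct < idle_cpu_pct:
        return "zombie-candidate"
    # In use, but with far more capacity than the workload needs.
    if server.avg_cpu_pct < low_util_pct and server.avg_mem_pct < low_util_pct:
        return "over-provisioned"
    return "keep"


# Hypothetical inventory rows, as they might come out of an audit export.
inventory = [
    ServerMetrics("erp-db-01", 62.0, 71.0, 40),
    ServerMetrics("legacy-db-07", 1.5, 8.0, 0),
    ServerMetrics("intranet-02", 9.0, 14.0, 12),
]

report = {s.name: classify(s) for s in inventory}
for name, label in report.items():
    print(f"{name}: {label}")
```

A label like "zombie-candidate" is only a prompt for a human to check the dependency map before anything is switched off.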

An effective audit isn't a one-and-done task. Think of it as an ongoing process of discovery. The insights you gain here will shape every single decision that follows, from design all the way to execution.

Once you have this detailed analysis, you can start categorizing your assets logically. Group applications and infrastructure by business function, criticality, or technical needs. This simple step will make the later stages of planning your migration so much easier.

Aligning Stakeholders and Defining Clear Objectives

A data center consolidation is never just an IT project. It’s a full-blown business initiative that needs buy-in from multiple departments. Get stakeholders from IT, finance, and various business units in a room early. Your goal is to define clear, measurable objectives that actually line up with what the company is trying to achieve.

Without this alignment, you risk a project that looks great on a technical level but delivers zero business value. The conversation has to move beyond jargon and focus on real outcomes. Sure, your main goal might be a straightforward reduction in operational expenditure (OpEx), but there are often other powerful drivers at play.

Here are a few common objectives we see:

- Cost Reduction: This is the big one. The goal is to slash spending on power, cooling, real estate, and hardware maintenance. It's often about shifting from long-term capital expenses to a more flexible, pay-as-you-go model.

- Improved Disaster Recovery (DR): Moving from several aging sites to one or two modern ones can radically simplify and strengthen your DR capabilities. It's a massive win for business continuity.

- Enabling a Cloud-First Initiative: A thorough audit of your on-premise assets is the perfect first step to figuring out which workloads are ready for the public, private, or hybrid cloud.

- Enhanced Security and Compliance: A smaller physical footprint means a smaller attack surface. This makes it far easier to implement and enforce consistent security policies across a more modern, streamlined infrastructure.

Once you’ve settled on these high-level goals, it's time to get tactical. Create a visual timeline, assign responsibilities, and make sure everyone knows their role and the key milestones. To help structure this crucial planning phase, you can find a useful guide in our technology roadmap template. It's a great framework for turning your consolidation objectives into an actionable plan that keeps everyone aligned and informed.

Designing Your Consolidation Blueprint

With a thorough audit behind you, you have a crystal-clear picture of your current environment. Now it's time to design the future. This blueprinting phase is where your high-level goals start to take shape as a specific, actionable model for your new infrastructure.

Choosing the right consolidation model isn't a one-size-fits-all affair. The best path forward depends entirely on your unique business needs, how much risk you're willing to take on, and your long-term vision. Three primary models tend to dominate the conversation, each with its own set of distinct advantages.

The Physical-To-Virtual (P2V) Model

One of the most tried-and-true consolidation methods is physical-to-virtual (P2V) conversion. This is all about migrating workloads from dedicated physical servers onto virtual machines (VMs) that run on a smaller number of much more powerful host servers. The main goal here is simple: drastically shrink your physical footprint and get more out of your hardware.

Practical Example: A mid-sized logistics company had a data hall packed with 200 aging, underutilized physical servers. Each one was running a single app and barely ticking over at 10-15% CPU usage. With a P2V strategy, they moved all those workloads onto just 12 modern virtual hosts. The result? A massive drop in power, cooling, and space requirements, which translated to immediate and measurable savings on the operational side.
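A rough, CPU-only sizing estimate shows why numbers like these are plausible. The sketch below is not how the company in the example sized its hosts; the host capacity ratio, the utilization ceiling, and the single spare host are assumptions chosen for illustration, and a real sizing exercise would also cover memory, storage, and I/O.

```python
import math


def hosts_needed(server_count: int,
                 avg_cpu_utilization: float,
                 host_capacity_ratio: float,
                 target_host_utilization: float,
                 spare_hosts: int = 1) -> int:
    """Estimate virtual hosts required for a P2V consolidation (CPU only)."""
    # Total load, expressed in "fully busy legacy servers".
    load = server_count * avg_cpu_utilization
    # Load one host can absorb while staying under the utilization ceiling.
    usable_per_host = host_capacity_ratio * target_host_utilization
    return math.ceil(load / usable_per_host) + spare_hosts


estimate = hosts_needed(
    server_count=200,
    avg_cpu_utilization=0.125,      # midpoint of the 10-15% range
    host_capacity_ratio=4.0,        # assumption: one host = 4 legacy servers of CPU
    target_host_utilization=0.60,   # assumption: leave headroom for peaks
    spare_hosts=1,                  # assumption: one extra host for failover
)
print(estimate)
```

Changing any of the assumed inputs moves the result, which is exactly why the baseline measurements from the audit matter.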

This approach is perfect for organizations that want to keep direct control over their hardware but are desperate to boost efficiency and make management easier. It's often the first big step toward building a private cloud.

Migrating Workloads to the Cloud

For many people, "consolidation" is just another word for "cloud migration." This model involves lifting applications and data out of on-premise data centers and shifting them to a public cloud provider like Amazon Web Services (AWS), Microsoft Azure, or Google Cloud. The big draw here is offloading all that tedious infrastructure management and switching to a flexible, pay-as-you-go model.

Actionable Insight: A classic scenario is a business moving its non-critical development and testing environments to the cloud first. This move frees up valuable on-premise resources for the core, mission-critical applications that might have stricter security or compliance rules. As you modernize your setup, designing and implementing things like S3 real-time data pipelines can become a central piece of your new, consolidated data platform in the cloud.

The cloud offers scalability and agility that are hard to beat, but it demands careful planning around security, cost management, and really understanding the different service models. To get the most out of it, you need to explore various cloud architecture patterns to make sure your design is resilient, secure, and cost-effective right from the get-go.

The Colocation Option

There's a third path that offers a happy medium: colocation. In this setup, you still own and manage all your servers, storage, and networking gear, but you lease space in a third-party data center. This lets you get out of the business of managing a physical building—no more worrying about power, cooling, or physical security—while keeping full control over your hardware.

Colocation is a fantastic choice for businesses that have recently invested in new hardware but are tired of the operational headache of running their own data center. You get access to enterprise-grade facilities with incredible connectivity and security that would be way too expensive to build and maintain yourself.

Choosing the right model is a strategic balancing act. You're weighing control against convenience, capital expenditure against operational expenditure, and current needs against future flexibility. The blueprint you create here will define the success of your entire data center consolidation strategy.

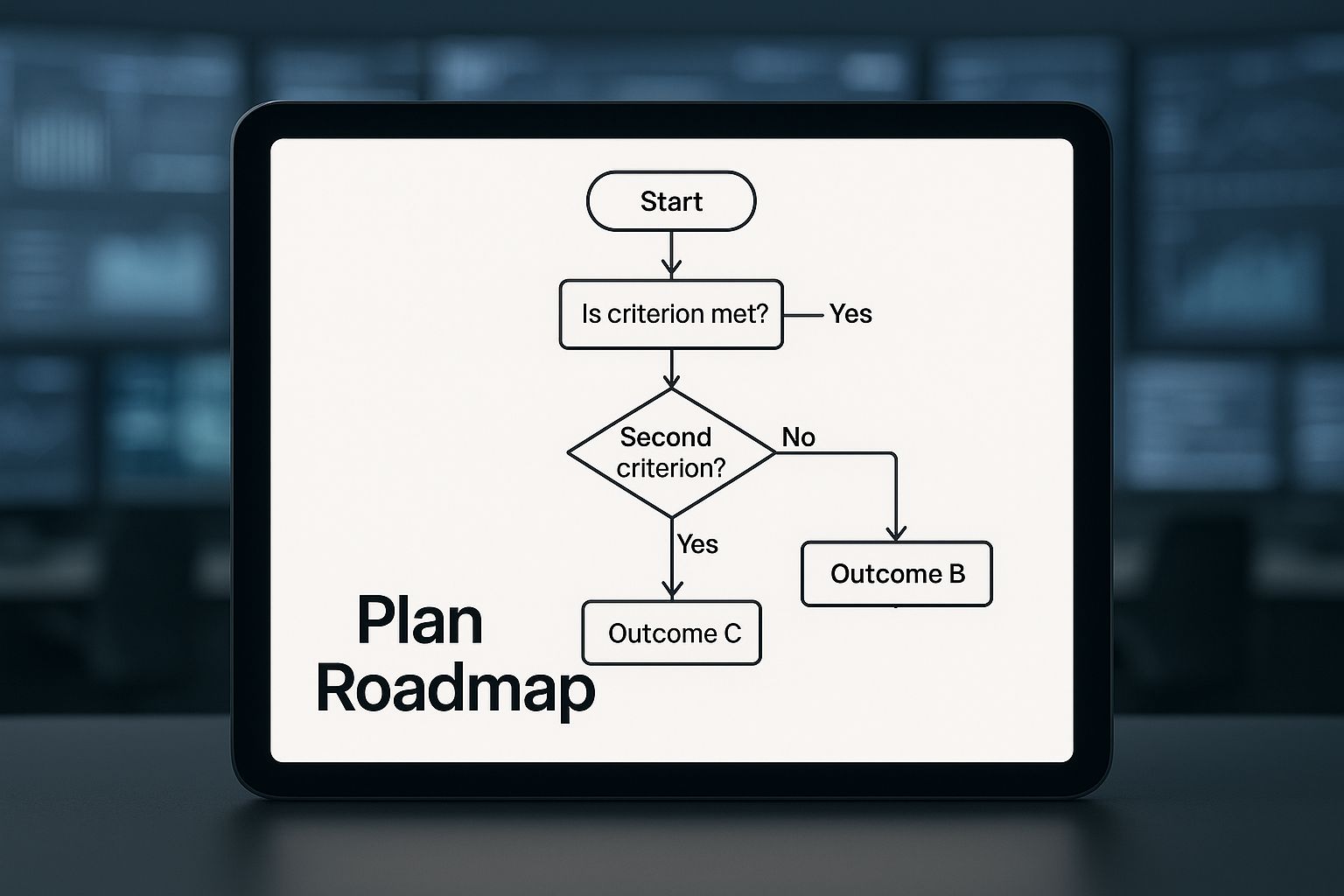

This decision-making process is a critical part of building your roadmap. The infographic below provides a simplified framework to help guide your choice based on key business drivers.

This visual roadmap helps illustrate how factors like control, scalability, and cost influence the path you take toward your ideal consolidated state.

A Framework for Making the Right Choice

To build your blueprint, you need a structured way to make a decision. This isn't about picking what's trendy; it's about selecting the model that perfectly aligns with the objectives you defined in the first place. As you weigh your options, think through these factors.

- Security and Compliance Needs: Do you handle sensitive data subject to strict rules like HIPAA or PCI DSS? This might point you toward a P2V/private cloud or colocation model where you maintain more direct control.

- Performance and Latency Requirements: Are your applications super sensitive to latency? Keeping them on-premise or in a strategically located colocation facility might be the only way to guarantee the performance your users expect.

- Budget and Financial Model: Are you trying to shift from capital expenses (CapEx) to operational expenses (OpEx)? The cloud's pay-as-you-go model is perfect for this, whereas a P2V project might require a significant upfront hardware investment.

- In-House Expertise and Resources: Does your IT team have the chops to manage a virtualized environment or a complex cloud deployment? The answer will heavily influence which model is actually feasible without a ton of retraining or new hires.

By methodically working through these questions, you can craft a detailed migration plan with clear timelines, assigned responsibilities, and a well-defined end-state. This blueprint is your guide for the entire execution phase, ensuring every action you take moves you closer to your consolidation goals.

Executing the Migration with Minimal Disruption

Alright, this is where the rubber meets the road. All that careful planning and blueprinting now gets put into action. Executing the migration is the most hands-on part of any data center consolidation, and your primary goal is simple but absolutely critical: move everything without breaking anything.

Success here boils down to a methodical, risk-averse approach that puts stability far ahead of speed.

The biggest mistake I see teams make is attempting a "big bang" migration—trying to flip the switch on everything over a single weekend. This strategy is incredibly risky. It almost guarantees you'll run into unforeseen problems, trigger extended downtime, and burn out your IT team. A much smarter, safer path is to break the migration into manageable waves.

Phasing the Migration for Maximum Safety

A phased approach is your best friend. It lets your team learn, adapt, and build confidence with each step. The key is to start with applications and systems that carry the lowest business risk. Think of it as a live-fire exercise. This strategy creates a real-world test bed for your entire process, allowing you to iron out any kinks before you even think about touching mission-critical systems.

Practical Example: A perfect candidate for the first migration wave might be an internal-facing, non-critical app like a documentation portal or a development sandbox. Moving this first lets you validate your entire workflow—from data replication to final cutover and user acceptance testing—in a low-pressure scenario. If something goes wrong, the business impact is minimal, but the lessons learned are priceless.

A typical migration wave sequence often looks something like this:

- Pilot Wave (Low Risk): Start with internal tools, dev/test environments, and non-essential departmental applications.

- Second Wave (Moderate Risk): Move on to important but not mission-critical business apps, like internal collaboration platforms or secondary analytics systems.

- Final Waves (High Risk): This is for the heavy hitters—your ERP, customer-facing applications, and primary production databases.

This tiered method ensures that by the time you get to your most important workloads, your team has a proven, battle-tested process locked down. It's all about systematically de-risking the entire project.
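The wave sequence above can be sketched as a simple grouping step. In this example the application names, risk tiers, and dependencies are all hypothetical; in practice they would come from the dependency map built during the audit. The sketch also lists dependencies that span two waves, since those are the links that will temporarily run between the old and new environments.

```python
from collections import defaultdict

# Risk tiers mirror the pilot, second, and final waves described above.
WAVE_BY_RISK = {"low": 1, "moderate": 2, "high": 3}

# Hypothetical inventory: app name -> (risk tier, apps it depends on).
apps = {
    "docs-portal": ("low", []),
    "dev-sandbox": ("low", []),
    "team-chat": ("moderate", []),
    "analytics": ("moderate", ["erp"]),
    "erp": ("high", ["orders-db"]),
    "orders-db": ("high", []),
}


def plan_waves(apps):
    """Group apps into waves by risk and list dependencies that span waves."""
    waves = defaultdict(list)
    for name, (risk, _deps) in apps.items():
        waves[WAVE_BY_RISK[risk]].append(name)

    cross_wave = []
    for name, (risk, deps) in apps.items():
        for dep in deps:
            dep_risk = apps[dep][0]
            # A dependency in a different wave will cross sites for a while.
            if WAVE_BY_RISK[dep_risk] != WAVE_BY_RISK[risk]:
                cross_wave.append((name, dep))
    return dict(sorted(waves.items())), cross_wave


waves, cross_wave = plan_waves(apps)
for number, members in waves.items():
    print(f"Wave {number}: {', '.join(members)}")
print("Cross-wave dependencies:", cross_wave)
```

Each cross-wave pair is worth a conversation about latency and firewall rules before the earlier wave moves.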

Choosing the Right Tools for the Job

The right tools can be the difference between a smooth transition and a weekend of chaos. Your choice will depend heavily on the consolidation model you decided on back in the blueprinting phase. Fortunately, there are mature, powerful tools out there for just about every scenario.

For teams pursuing a P2V (physical-to-virtual) strategy, tools like VMware vCenter Converter have been industry workhorses for years, reliably converting physical machines into virtual ones. If you're heading to the cloud, each provider has its own specialized toolset. For example, AWS Application Migration Service (MGN), which replaced the now-retired AWS Server Migration Service (SMS), is built to automate moving on-premise workloads to AWS, handling the replication and cutover process for you.

The goal isn't just to move data; it's to move entire functioning systems with their configurations, dependencies, and performance characteristics intact. The right migration tool automates this complexity, significantly reducing the potential for human error.

Whatever tool you land on, make sure it supports your key requirements for minimal disruption, like live replication and the ability to perform non-disruptive testing.

Avoiding Common Pitfalls and Mitigating Risk

Even with the best plan and tools, migrations are full of potential traps. The two biggest threats are always unplanned downtime and data loss. Both are completely avoidable with careful preparation and disciplined execution.

First, thorough pre-migration testing is non-negotiable. This means setting up a sandboxed, isolated environment that mirrors your target infrastructure. Here, you perform a full dry run of the migration. It’s your chance to validate application functionality, check for performance issues, and confirm that all data transferred correctly. This step is your ultimate safety net.
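One way to confirm that data transferred correctly during a dry run is to compare checksums between source and target. The sketch below does this for plain files using only the Python standard library; the directory layout and file names are made up for the demo, and databases or block-level replication would need their own validation approach.

```python
import hashlib
import tempfile
from pathlib import Path


def sha256_of(path: Path, chunk_size: int = 1 << 20) -> str:
    """Hash a file in chunks so large files do not need to fit in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def compare_trees(source: Path, target: Path) -> list[str]:
    """Return source files that are missing or differ on the target."""
    problems = []
    for src_file in sorted(p for p in source.rglob("*") if p.is_file()):
        rel = src_file.relative_to(source)
        dst_file = target / rel
        if not dst_file.is_file():
            problems.append(f"missing: {rel.as_posix()}")
        elif sha256_of(src_file) != sha256_of(dst_file):
            problems.append(f"mismatch: {rel.as_posix()}")
    return problems


# Self-contained demo using temporary directories in place of real volumes.
with tempfile.TemporaryDirectory() as tmp:
    src = Path(tmp, "source")
    dst = Path(tmp, "target")
    src.mkdir()
    dst.mkdir()
    (src / "a.txt").write_text("alpha")
    (dst / "a.txt").write_text("alpha")
    (src / "b.txt").write_text("bravo")
    (dst / "b.txt").write_text("brav0")    # simulated corruption
    (src / "c.txt").write_text("charlie")  # simulated file that was never copied
    demo_problems = compare_trees(src, dst)

print(demo_problems)
```

An empty result is the signal you want before scheduling the real cutover.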

Your other critical safeguard is a detailed and validated rollback plan. You absolutely must have a clear, step-by-step procedure to revert to the original state if the migration fails or if post-cutover testing reveals critical issues. This plan needs to be tested just as rigorously as the migration itself. Knowing you can quickly and safely hit the "undo" button gives your team the confidence to move forward.

Many organizations get tripped up here, and understanding the common cloud migration challenges can help you get ahead of these hurdles before they derail your project.

By adopting a phased approach, using the right tools, and embedding rigorous testing and rollback procedures into your workflow, you can navigate the execution phase smoothly and finally realize the full benefits of your consolidation strategy.

Measuring Post-Consolidation Success

You’ve migrated the last server and powered down the old hardware. Mission accomplished, right? Not quite. The real proof of a successful data center consolidation strategy comes after the project is technically "done." This is the moment you validate that all the effort actually delivered on its promises.

This is where all that meticulous baseline data you gathered during the initial audit becomes your most valuable asset. The goal now is to track your new environment’s performance against those original benchmarks. This isn't just about patting yourselves on the back; it's about quantifying success and showing leadership a clear return on their investment.

Validating Success with Key Performance Indicators

The most direct way to measure your project's impact is by monitoring a specific set of key performance indicators (KPIs). These metrics provide the hard data you need to prove improvements in efficiency, cost, and performance. This is how you move the conversation from "we think it's better" to "we know it's better."

Here are the critical metrics I always focus on:

- Power Usage Effectiveness (PUE): This is a big one. PUE is the total energy a facility draws divided by the energy that actually reaches the IT equipment, so a value closer to 1.0 signals a highly efficient data center. Showing a side-by-side comparison of your old, high PUE against your new, lower one is one of the most powerful ways to demonstrate immediate cost savings.

- Server Utilization Rates: Track the average CPU, memory, and storage usage across your new virtualized or cloud environment. If your utilization rates are higher, it means you're squeezing more value out of every single piece of hardware you're paying for. No more zombie servers.

- Application Latency and Response Times: Performance can't take a hit. You need to ensure that application performance has either improved or, at the very least, stayed consistent. This validates a positive end-user experience and proves the consolidation didn't compromise service quality.

- Operational Cost Reduction: This is the metric that gets the C-suite's attention. Tally up the direct savings from power, cooling, real estate leases, and expensive hardware maintenance contracts you no longer need. The numbers often speak for themselves.
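As a small illustration of comparing these KPIs against the audit baseline, the sketch below computes PUE (total facility energy divided by IT equipment energy) and the percentage change in energy use. The monthly readings are hypothetical numbers invented for the example, not measurements from any real site.

```python
def pue(total_facility_kwh: float, it_equipment_kwh: float) -> float:
    """Power Usage Effectiveness: facility energy per unit of IT energy."""
    if it_equipment_kwh <= 0:
        raise ValueError("IT equipment energy must be positive")
    return total_facility_kwh / it_equipment_kwh


def percent_change(before: float, after: float) -> float:
    """Signed change from the baseline, as a percentage."""
    return (after - before) / before * 100


# Hypothetical monthly readings before and after consolidation.
baseline = {"facility_kwh": 220_000, "it_kwh": 110_000}
current = {"facility_kwh": 84_000, "it_kwh": 60_000}

pue_before = pue(baseline["facility_kwh"], baseline["it_kwh"])
pue_after = pue(current["facility_kwh"], current["it_kwh"])
energy_change = percent_change(baseline["facility_kwh"], current["facility_kwh"])

print(f"PUE before: {pue_before:.2f}, after: {pue_after:.2f}")
print(f"Facility energy change: {energy_change:.1f}%")
```

The same `percent_change` helper works for latency, utilization, or cost, as long as the before and after figures were measured the same way.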

The real power of a data center consolidation strategy lies in continuous improvement. The goal is not just to consolidate, but to create a leaner, more agile foundation that evolves with the business.

From Measurement to Continuous Optimization

Tracking these KPIs isn't a one-and-done activity. The insights you gather should kick off a cycle of continuous optimization. A consolidated environment is certainly easier to manage, but it still requires active tuning to deliver its full potential.

Practical Example: The ROI Dashboard

One of the best tools for this is a simple performance dashboard. Using a tool like Grafana or Power BI, you can create a visual, real-time display of your key metrics. This dashboard becomes your single source of truth for showing ROI to executives. Imagine showing a chart that compares pre- and post-consolidation energy costs, instantly highlighting thousands in monthly savings. It’s incredibly effective.

This ongoing process should include a few key activities:

- Right-Sizing Resources: Keep a close eye on your virtual machines and cloud instances. Are they over-provisioned? Scaling down oversized instances is one of the quickest wins for cutting costs.

- Refining Security Policies: Your new, smaller footprint is inherently easier to secure. Take this opportunity to regularly review and tighten access controls, firewall rules, and patch management processes.

- Automating Operations: With fewer moving parts, you can now automate routine tasks like provisioning, scaling, and monitoring. This frees up your IT team from mundane work and allows them to focus on more strategic initiatives.
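Right-sizing in particular lends itself to a quick calculation. The sketch below suggests a vCPU count that would keep the observed peak under a target ceiling; the instance names, the peak figures, and the 70% target are assumptions for illustration, and real cloud instance types come in fixed sizes, so the result would be rounded up to the nearest available option.

```python
import math


def recommended_vcpus(current_vcpus: int,
                      peak_cpu_pct: float,
                      target_peak_pct: float = 70.0) -> int:
    """Smallest vCPU count that keeps the observed peak under the target."""
    needed = current_vcpus * peak_cpu_pct / target_peak_pct
    return max(1, math.ceil(needed))


# Hypothetical instances: name -> (current vCPUs, observed peak CPU %).
instances = {
    "web-01": (8, 20.0),
    "batch-02": (16, 65.0),
    "cache-03": (4, 10.0),
}

plan = {
    name: recommended_vcpus(vcpus, peak)
    for name, (vcpus, peak) in instances.items()
}

for name, target in plan.items():
    current_size = instances[name][0]
    print(f"{name}: {current_size} vCPUs -> {target} vCPUs")
```

CPU is only one dimension; memory and I/O peaks deserve the same check before anything is scaled down.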

This mindset of ongoing improvement dovetails perfectly with broader IT goals. Once your consolidated environment is stable and optimized, you can start exploring more advanced cloud cost optimization strategies to drive even greater efficiency. It reinforces the idea that consolidation wasn't just a project—it was the start of a new, smarter way of operating.

Common Questions About Data Center Consolidation

Even the most meticulously planned consolidation strategy will hit a few bumps. Thinking ahead about the common questions and sticking points is the best way to keep your project moving forward without any major surprises. Let's walk through some of the questions I hear most often from teams in the thick of it.

What Are the Biggest Risks in a Consolidation Project?

Without a doubt, the biggest risks are unplanned downtime, data loss, and post-migration performance issues. One bad move can directly impact your customers and put a serious dent in your company's credibility. Mitigation isn't just a buzzword here; it's everything.

Actionable Insight: The single best way to protect yourself is to completely ditch the "big bang" cutover. Instead, break the project into smaller, manageable phases. Start with low-risk, internal-facing applications. This lets you test and fine-tune your migration process in a live environment without betting the entire business on it.

And before you even think about touching a server, you need multiple, validated backups of everything. Even more critical is having a detailed rollback plan that you've actually tested. Knowing you can hit the "undo" button and be back to the previous state in minutes is a huge safety net. This kind of planning is fundamental to business continuity, much like the protocols outlined in a solid disaster recovery planning template.

How Do You Calculate the ROI of a Consolidation Strategy?

Calculating the Return on Investment (ROI) is how you get—and keep—your executive sponsors on board. It’s really just a straightforward comparison of what you'll save versus what you have to spend.

First, let's look at the savings side of the equation. Tally up the clear wins:

- Reduced costs for hardware maintenance contracts.

- Lower monthly power and cooling bills (OpEx).

- Decreased software licensing fees from servers you're shutting down.

- Savings from any data center leases you can terminate.

Next, add up the upfront investment costs:

- Purchases of new hardware and software.

- Fees for migration tools or professional services.

- Costs for team training or bringing in specialized contractors.

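The ROI formula is simple: (Total Savings - Total Investment) / Total Investment, with savings totaled over whatever evaluation period you choose. A compelling business case also needs to show a clear payback period, meaning how long it takes for cumulative savings to cover the upfront investment. For most data center consolidation projects I've seen, this typically falls somewhere between 18 and 36 months.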
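Here is a short worked example of that calculation. Every dollar figure below is hypothetical and exists only to show the arithmetic; the category names simply mirror the savings and investment lists above.

```python
def roi(total_savings: float, total_investment: float) -> float:
    """(Total Savings - Total Investment) / Total Investment."""
    return (total_savings - total_investment) / total_investment


def payback_months(total_investment: float, annual_savings: float) -> float:
    """Months until cumulative savings cover the upfront investment."""
    return total_investment / annual_savings * 12


# Hypothetical annual savings, by category.
annual_savings = {
    "hardware_maintenance": 120_000,
    "power_and_cooling": 90_000,
    "software_licensing": 60_000,
    "terminated_leases": 130_000,
}

# Hypothetical one-time investment, by category.
investment = {
    "hardware_and_software": 500_000,
    "migration_services": 200_000,
    "training_and_contractors": 100_000,
}

yearly_savings = sum(annual_savings.values())
total_investment = sum(investment.values())

three_year_roi = roi(yearly_savings * 3, total_investment)
payback = payback_months(total_investment, yearly_savings)

print(f"Three-year ROI: {three_year_roi:.0%}")
print(f"Payback period: {payback:.0f} months")
```

Stating the evaluation period alongside the ROI figure (three years here) avoids confusion when finance compares it with other projects.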

Should We Consolidate to a Private Cloud, Public Cloud, or Colocation?

This is one of those big strategic decisions where there’s no single "right" answer. It all boils down to your specific business needs, your security and compliance posture, and your financial model.

A private cloud gives you the ultimate level of control. It's usually the top choice for applications that have to meet strict compliance standards or data sovereignty rules. You get all the agility of virtualization but keep everything safely behind your own firewalls.

The public cloud offers scalability you just can't match on-premises and shifts your spending from a capital expense (CapEx) to an operational one (OpEx). This makes it perfect for workloads with unpredictable demand or for companies that want out of the hardware management game entirely.

Colocation is the happy medium. You still own and manage your own servers, but you house them in a specialized third-party facility. This lets you offload the massive headache of managing power, cooling, and physical security.

Practical Example: A financial services firm took a hybrid approach. They kept their core transaction systems in a highly secure private cloud to satisfy regulators. At the same time, they moved their customer-facing web apps and development environments to the public cloud to leverage its global reach and elasticity. The application dependency map you built during the audit phase is the exact tool you'll need to make these kinds of workload-specific decisions.

At DATA-NIZANT, we provide the expert analysis and in-depth articles you need to navigate complex technology decisions. Our content is designed to equip IT leaders and strategists with actionable intelligence for real-world challenges. Explore more at https://www.datanizant.com.
