When you're building an application in the cloud, you're not just throwing code onto a server and hoping for the best. You need a plan. Cloud architecture patterns are exactly that—they're proven blueprints for designing and building systems that work.

Think of it like building a house. You wouldn't start laying bricks without architectural plans. Those plans ensure the house can withstand storms, accommodate a growing family, and stay within budget. In the same way, cloud architecture patterns are the battle-tested designs that help you build applications that are scalable, resilient, and cost-effective.

Why Do These Patterns Actually Matter?

Let's get practical. Why should you care about these patterns? Because the choice you make at the beginning has a massive ripple effect on your application's entire lifecycle. It’s the foundational decision that dictates whether your app can handle a sudden traffic spike from a viral marketing campaign or recover gracefully when a service inevitably fails.

Picking the right pattern isn't just a technical box to check; it directly impacts your development speed, your monthly cloud bill, and your team's ability to ship features without stepping on each other's toes.

Aligning Your Tech with Your Business

Choosing a cloud architecture pattern is fundamentally a business decision disguised as a technical one. Get it right, and you set yourself up for success. Get it wrong, and you're staring down the barrel of costly re-engineering projects and a mountain of technical debt that stifles growth.

A well-chosen pattern delivers tangible benefits that any business leader can appreciate:

- Improved Scalability: Your application can grow to meet surging user demand without needing a complete tear-down and rebuild.

- Enhanced Resilience: The system is built to withstand and recover from failures, keeping your service online and your customers happy.

- Cost Optimization: You avoid the trap of over-provisioning and only pay for the resources you actually use.

- Faster Development: Your teams can work more independently, allowing them to build, test, and deploy features faster and with less friction.

The core idea is simple but incredibly powerful: A sound architecture creates business agility. An ill-fitting one creates technical debt and kills momentum.

This principle is a cornerstone of building any robust system. It's not just for applications, either. As we've discussed on the DataNizant blog, a similar mindset applies to data infrastructure, where a modern data architecture is crucial for managing and analyzing information at scale.

The Financial Side of Architecture

Don't just take my word for it—the money trail tells the story. Businesses are pouring money into the cloud because the right architecture delivers a real return on investment.

Global public cloud spending is on a steep climb, projected to hit $723.4 billion in 2025, up from $595.7 billion in 2024. This isn't just a game for the big players. 33% of large companies are already spending over $12 million a year on public cloud. Meanwhile, 54% of small and medium-sized businesses (SMBs) are spending more than $1.2 million annually. These numbers show that adopting smart cloud architectures is central to how modern companies operate and innovate.

Quick Overview of Key Cloud Architecture Patterns

Before we dive deep into each pattern, it helps to have a high-level map of the territory. This table provides a quick look at the main patterns we'll be discussing, their core ideas, and where they shine the brightest.

| Pattern Name | Core Concept | Best For | Key Benefit |
|---|---|---|---|
| Monolith | A single, unified application containing all functionalities. | Simple applications, small teams, and rapid initial development. | Simplicity in development and deployment. |
| Microservices | An application built as a collection of small, independent services. | Complex applications, large teams, and systems requiring high scalability. | Independent deployment and fault isolation. |
| Serverless | Running code without provisioning or managing servers. | Event-driven tasks, asynchronous workflows, and unpredictable traffic. | Extreme cost-efficiency and auto-scaling. |
| Event-Driven | Components communicate asynchronously through events. | Real-time systems, IoT applications, and decoupled workflows. | Loose coupling and high responsiveness. |
| N-Tier | A tiered model separating presentation, logic, and data layers. | Traditional web applications and systems requiring logical separation. | Clear separation of concerns and maintainability. |
| Service-Oriented | Loosely-coupled services communicating over a network. | Enterprise-level systems requiring integration and reusability. | Reusability and interoperability. |

This table is just a starting point. In the sections that follow, we'll break down each of these patterns—from traditional monoliths to modern microservices and serverless models—using clear analogies and real-world examples to build your understanding from the ground up.

Choosing Between Monolith and Microservices

One of the most fundamental decisions you'll make when architecting a cloud application is the choice between a monolithic or a microservices approach. This isn't just a technical detail; it's a strategic decision that ripples through your development speed, team structure, and how you'll handle maintenance for years to come. Think of it less as "old vs. new" and more as a question of what fits your project right now.

Let’s use an analogy. Building a monolith is like setting up a single, large, all-in-one restaurant kitchen. Everything from grilling and frying to baking and plating happens in one cohesive space. All your chefs (developers) work side-by-side on a single, unified system. In the beginning, this is fantastic—it’s simple to coordinate and get things done quickly.

On the other hand, a microservices architecture is more like a modern food court. You have specialized, independent stalls for burgers, tacos, and pizza. Each stall has its own staff and equipment and operates on its own schedule. While this offers incredible flexibility and specialization, it also requires a robust system to manage everything, like a central ordering platform and shared seating.

The Case for Monolithic Architecture

A monolithic architecture is the traditional model where the entire application is built as one indivisible unit. The user interface, business logic, and data access layer are all bundled into a single codebase and deployed together.

For many projects, especially for startups building a Minimum Viable Product (MVP) or for small, tight-knit teams, the monolith is often the smartest path forward. Its main advantage is simplicity. Development is more straightforward, debugging is easier since you can trace a request from start to finish within a single process, and deployment is a single, predictable action.

Practical Example: A small startup building a new project management tool. The initial product has basic features: task creation, user assignments, and a simple dashboard. Using a monolith, a team of three developers can quickly build, test, and deploy the entire application as a single unit, allowing them to get customer feedback and iterate much faster than if they had to manage a complex distributed system from day one.

When Microservices Make Sense

As an application grows, that single restaurant kitchen can get very crowded. A minor change to one feature—say, updating the dessert menu—suddenly requires re-testing and redeploying the entire kitchen. This is where development slows to a crawl, and the microservices pattern starts to look very appealing.

With microservices, the application is broken down into a collection of small, autonomous services. Each service is self-contained, focuses on a specific business capability, and can be developed, deployed, and scaled independently of all the others.

The real power of microservices lies in team and technological autonomy. One team can own the payment service, another can own the user profile service, and they can use totally different technologies without stepping on each other's toes. This decouples development cycles and dramatically speeds up feature delivery.

Here are a few key benefits:

- Independent Deployment: Teams can release updates to their specific service whenever it's ready, without having to coordinate a massive, high-risk "big bang" deployment.

- Improved Fault Isolation: If one service fails (e.g., the recommendation engine goes down), it doesn't have to crash the entire application. Core functions like user login and checkout can keep running smoothly.

- Technology Diversity: You're free to use Python for a data-intensive service and Go for a high-performance API endpoint, all within the same application. This flexibility is crucial in areas like machine learning in business, where picking the best tool for the job is non-negotiable.
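To make the fault-isolation point concrete, here is a minimal sketch of graceful degradation in Python. The function names (`fetch_recommendations_unavailable`, `render_checkout_page`) and the page structure are hypothetical, invented purely for illustration; a real system would call the recommendation service over the network and would likely add timeouts and retries.

```python
from typing import Callable, Dict, List


def fetch_recommendations_unavailable(user_id: str) -> List[str]:
    """Hypothetical client for a recommendation service that is currently down."""
    raise ConnectionError("recommendation engine unavailable")


def render_checkout_page(
    user_id: str,
    cart: List[str],
    fetch_recommendations: Callable[[str], List[str]],
) -> Dict[str, object]:
    """Build the checkout page, treating recommendations as optional."""
    try:
        recommendations = fetch_recommendations(user_id)
    except Exception:
        # The non-critical dependency failed: degrade instead of crashing.
        recommendations = []
    return {
        "user_id": user_id,
        "cart": cart,
        "recommendations": recommendations,
        "checkout_enabled": True,
    }


page = render_checkout_page("u-42", ["book"], fetch_recommendations_unavailable)
print(page)
```

The core checkout path keeps working even though the optional dependency raised an error, which is the behavior the fault-isolation bullet describes.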

The Real-World Challenges of Microservices

While the benefits are clear, the food court model brings its own operational headaches. Managing communication, data consistency, and discovery between dozens—or even hundreds—of independent services is no small feat.

A classic problem is simply routing requests from the outside world to the correct internal service. This is usually solved with an API Gateway, which acts as a single, smart entry point. The gateway handles cross-cutting concerns like authentication and rate limiting, then routes traffic to the appropriate microservice.
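The gateway's three jobs can be sketched in a few lines of Python. This is a toy, in-process illustration under stated assumptions: the `ApiGateway` class, the token set, the sliding-window limit, and the two stand-in services are all hypothetical, and a production gateway would normally be a managed service or a dedicated proxy rather than hand-written code.

```python
import time
from typing import Callable, Dict, List, Optional, Set, Tuple


# Hypothetical in-process stand-ins for internal microservices.
def orders_service(path: str) -> str:
    return f"orders handled {path}"


def users_service(path: str) -> str:
    return f"users handled {path}"


class ApiGateway:
    """Toy gateway: authenticate, rate-limit, then route by path prefix."""

    def __init__(
        self,
        routes: Dict[str, Callable[[str], str]],
        valid_tokens: Set[str],
        max_requests: int,
        window_seconds: float = 60.0,
    ) -> None:
        self.routes = routes
        self.valid_tokens = valid_tokens
        self.max_requests = max_requests
        self.window_seconds = window_seconds
        self._hits: Dict[str, List[float]] = {}  # token -> request timestamps

    def handle(self, token: str, path: str, now: Optional[float] = None) -> Tuple[int, str]:
        now = time.monotonic() if now is None else now
        # 1. Authentication: reject unknown callers before doing any work.
        if token not in self.valid_tokens:
            return 401, "unauthorized"
        # 2. Rate limiting: count this caller's requests in a sliding window.
        recent = [t for t in self._hits.get(token, []) if now - t < self.window_seconds]
        if len(recent) >= self.max_requests:
            self._hits[token] = recent
            return 429, "rate limit exceeded"
        recent.append(now)
        self._hits[token] = recent
        # 3. Routing: the longest matching path prefix wins.
        for prefix in sorted(self.routes, key=len, reverse=True):
            if path.startswith(prefix):
                return 200, self.routes[prefix](path)
        return 404, "no route"


gateway = ApiGateway(
    routes={"/orders": orders_service, "/users": users_service},
    valid_tokens={"secret"},
    max_requests=2,
)
print(gateway.handle("secret", "/orders/1", now=0.0))
```

Because callers only ever see the gateway, the services behind it can be moved, split, or replaced without changing any client.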

Another significant hurdle is managing distributed data. In a monolith, everything typically lives in one big database. In a microservices world, each service often has its own private database to maintain its autonomy. This introduces the challenge of keeping data consistent across services, often requiring complex patterns like event sourcing or sagas to keep everything in sync. The operational overhead is undeniably higher, and it demands a mature DevOps culture to manage effectively.
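As a rough illustration of the saga idea, the sketch below runs a sequence of local steps and, if one fails, undoes the completed ones in reverse order with compensating actions. The `run_saga` helper, the step names, and the in-memory `state` dictionary are hypothetical; real sagas coordinate separate services, usually through messages, and must also handle retries and failures inside the compensations themselves.

```python
from typing import Callable, Dict, List, Tuple

# Each step is (name, action, compensation).
Step = Tuple[str, Callable[[], None], Callable[[], None]]


def run_saga(steps: List[Step]) -> Tuple[bool, List[str]]:
    """Run steps in order; on failure, compensate completed steps in reverse."""
    log: List[str] = []
    completed: List[Tuple[str, Callable[[], None]]] = []
    for name, action, compensate in steps:
        try:
            action()
            log.append(f"done:{name}")
            completed.append((name, compensate))
        except Exception:
            log.append(f"failed:{name}")
            for done_name, undo in reversed(completed):
                undo()
                log.append(f"undone:{done_name}")
            return False, log
    return True, log


# Hypothetical state owned by two different services.
state: Dict[str, int] = {"stock": 5, "charged": 0}


def reserve_stock() -> None:
    state["stock"] -= 1


def release_stock() -> None:
    state["stock"] += 1


def charge_card() -> None:
    raise RuntimeError("card declined")


def refund_card() -> None:
    state["charged"] = 0


ok, saga_log = run_saga([
    ("reserve_stock", reserve_stock, release_stock),
    ("charge_card", charge_card, refund_card),
])
print(ok, saga_log, state)
```

There is no cross-service transaction here: consistency is restored by explicitly reversing the work that already succeeded, which is why the pattern adds complexity compared with a single database.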

Serverless and Event-Driven Architectures

So far, we've talked about monoliths and microservices. But what comes next? Two of the most interesting patterns in modern cloud design are serverless and event-driven architecture (EDA). They often go hand-in-hand, allowing teams to build incredibly efficient and scalable systems.

Let's get one thing straight first: "serverless" doesn't actually mean servers have vanished. They're still there, of course. The real meaning is much simpler: you don't have to manage them anymore. The cloud provider handles all the grunt work—provisioning, patching, and scaling the underlying infrastructure. You just focus on writing and deploying your code.

The most common way to do serverless is through Functions as a Service (FaaS). With FaaS, you deploy small, self-contained functions that only run when triggered by a specific event. This is where the magic of event-driven architecture really shines.

The Power of Asynchronous Events

Event-Driven Architecture (EDA) is a design where services communicate without directly talking to each other. Instead, they react to "events." An event is just a simple record of something that happened—a user clicked a button, a new file was uploaded, or a database entry changed.

Here’s how it works: instead of one service calling another and waiting for a response, it just publishes an event to a central message queue or event bus. Other services that care about that event can subscribe to it and react on their own time. The original service has no idea they even exist. This creates a beautifully decoupled system that’s far more resilient.

A Practical Example in E-commerce

Think about an online store. When a customer finalizes a purchase, a single PurchaseComplete event is fired off. This one event can set off a chain reaction of independent processes:

- Payment Function: Subscribes to the event to process the payment.

- Inventory Function: Listens for the same event to update product stock levels.

- Confirmation Function: Triggers a confirmation email to the customer.

- Analytics Function: Logs the purchase data for business intelligence reports.

Each of these is a small, independent serverless function. If the email confirmation service goes down for a minute, it doesn't stop the payment from processing or the inventory from updating. The entire system is inherently more robust.
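The publish/subscribe flow above can be sketched with a tiny in-process event bus. This is only an illustration: the `EventBus` class and handler names are hypothetical, and delivery here is synchronous, whereas a real system would use a managed queue or event bus that delivers events asynchronously.

```python
from collections import defaultdict
from typing import Any, Callable, DefaultDict, Dict, List, Tuple

Handler = Callable[[Dict[str, Any]], None]


class EventBus:
    """Minimal publish/subscribe bus; publishers never reference subscribers."""

    def __init__(self) -> None:
        self._subscribers: DefaultDict[str, List[Handler]] = defaultdict(list)

    def subscribe(self, event_type: str, handler: Handler) -> None:
        self._subscribers[event_type].append(handler)

    def publish(self, event_type: str, payload: Dict[str, Any]) -> List[str]:
        """Deliver to every subscriber; return the names of handlers that failed."""
        failed: List[str] = []
        for handler in self._subscribers[event_type]:
            try:
                handler(payload)
            except Exception:
                # One failing subscriber must not block the others.
                failed.append(handler.__name__)
        return failed


processed: List[Tuple[str, str]] = []


def process_payment(event: Dict[str, Any]) -> None:
    processed.append(("payment", event["order_id"]))


def update_inventory(event: Dict[str, Any]) -> None:
    processed.append(("inventory", event["order_id"]))


def send_confirmation(event: Dict[str, Any]) -> None:
    # Simulate the email service being down for a minute.
    raise ConnectionError("email provider unavailable")


def log_analytics(event: Dict[str, Any]) -> None:
    processed.append(("analytics", event["order_id"]))


bus = EventBus()
for handler in (process_payment, update_inventory, send_confirmation, log_analytics):
    bus.subscribe("PurchaseComplete", handler)

failed_handlers = bus.publish("PurchaseComplete", {"order_id": "A-1001"})
print(processed, failed_handlers)
```

The confirmation handler fails, yet payment, inventory, and analytics still run. In a real deployment the failed delivery would typically be retried or parked in a dead-letter queue rather than simply reported.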

The real game-changer with this combined serverless and event-driven approach is the pay-per-use cost model. With FaaS, you are typically billed per invocation and for the compute time your code actually consumes, often metered down to the millisecond. When nothing is running, you pay nothing for compute. This is a huge win for applications with unpredictable or spiky traffic.
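A quick back-of-the-envelope calculation shows how this model behaves at different traffic levels. Every number below is a placeholder chosen for easy arithmetic, not any provider's actual price list, and the `faas_monthly_cost` function is a hypothetical simplification that ignores free tiers, data transfer, and other line items.

```python
def faas_monthly_cost(
    invocations: int,
    avg_duration_ms: float,
    memory_gb: float,
    price_per_gb_second: float = 0.00002,       # placeholder rate, not a real quote
    price_per_million_requests: float = 0.20,   # placeholder rate, not a real quote
) -> float:
    """Estimate a month of FaaS spend: compute time plus a per-request fee."""
    gb_seconds = invocations * (avg_duration_ms / 1000.0) * memory_gb
    compute_cost = gb_seconds * price_per_gb_second
    request_cost = (invocations / 1_000_000) * price_per_million_requests
    return compute_cost + request_cost


FIXED_SERVER_MONTHLY = 50.0  # placeholder cost of an always-on server

idle = faas_monthly_cost(0, 200, 0.5)
spiky = faas_monthly_cost(1_000_000, 200, 0.5)
steady_heavy = faas_monthly_cost(500_000_000, 200, 0.5)

print(f"idle month:         {idle:.2f}")
print(f"spiky month:        {spiky:.2f}")
print(f"steady heavy month: {steady_heavy:.2f}")
print(f"always-on server:   {FIXED_SERVER_MONTHLY:.2f}")
```

Under these assumed rates, the idle and spiky workloads cost far less than the always-on server, while the constantly busy workload costs far more. The crossover point depends entirely on real prices and real traffic, so it is worth rerunning this kind of estimate with your own numbers.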

This model is quickly becoming a pillar of modern cloud strategy. Research suggests that by 2025, serverless and multi-cloud architectures will be the norm. With 81% of companies already using multiple clouds and serverless adoption projected to grow by 30%, the trend toward managed, event-driven services is undeniable. The goal is simple: maximize flexibility and cut costs by offloading infrastructure management.

Actionable Insight for Event-Driven Systems

While incredibly powerful, designing these systems requires a new way of thinking. One of the biggest hurdles is managing state in a stateless world. Since FaaS execution environments are ephemeral, you can't rely on anything held in memory surviving from one invocation to the next.

Actionable Insight: To solve this, you must lean on external services like a Redis cache or a DynamoDB table to maintain state. For more complicated workflows, use an orchestration tool like AWS Step Functions to manage a sequence of serverless functions as a single, stateful workflow.
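Here is a minimal sketch of what "externalized state" looks like in a function handler. The `InMemoryStore` class is only a local stand-in for an external service such as Redis or DynamoDB, and the handler name, key format, and event fields are hypothetical. A real implementation would use the store's own client library and an atomic increment rather than the read-then-write shown here.

```python
from typing import Any, Dict, Protocol


class StateStore(Protocol):
    """The small interface the handler depends on."""

    def get(self, key: str) -> int: ...

    def put(self, key: str, value: int) -> None: ...


class InMemoryStore:
    """Stand-in for an external store; it lives outside any single invocation."""

    def __init__(self) -> None:
        self._data: Dict[str, int] = {}

    def get(self, key: str) -> int:
        return self._data.get(key, 0)

    def put(self, key: str, value: int) -> None:
        self._data[key] = value


def handle_cart_event(event: Dict[str, Any], store: StateStore) -> int:
    """Stateless handler: everything it needs comes from the event or the store."""
    key = f"cart:{event['user_id']}"
    # Not atomic: concurrent invocations could race. Real stores offer
    # atomic increments or conditional writes for this.
    count = store.get(key) + event["quantity"]
    store.put(key, count)
    return count


store = InMemoryStore()
first_total = handle_cart_event({"user_id": "u-1", "quantity": 2}, store)
second_total = handle_cart_event({"user_id": "u-1", "quantity": 3}, store)
print(first_total, second_total)
```

The handler keeps nothing between calls, so any instance of the function, on any machine, can process the next event for the same user.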

Another critical piece of the puzzle is observability. When a single request flows through multiple functions, tracing it from start to finish can be a nightmare. Implementing structured logging and using distributed tracing tools aren't just nice-to-haves—they are absolutely essential for debugging and understanding what your system is actually doing. The insights from observability data are also invaluable for refining business processes, a topic we explore in our guide on the role of AI in business.
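One common building block for this is a correlation ID that travels with the event and appears in every log line. The sketch below uses only Python's standard library; the field names and the `log_event` helper are hypothetical conventions, and dedicated tracing tools add timing and parent/child span information on top of the same idea.

```python
import json
import uuid
from typing import Any, Dict


def log_event(correlation_id: str, function_name: str, message: str, **fields: Any) -> str:
    """Emit one JSON log line that can be filtered by correlation_id."""
    record = {
        "correlation_id": correlation_id,
        "function": function_name,
        "message": message,
        **fields,
    }
    line = json.dumps(record, sort_keys=True)
    print(line)
    return line


def payment_function(event: Dict[str, Any]) -> str:
    return log_event(event["correlation_id"], "payment", "payment processed",
                     order_id=event["order_id"])


def inventory_function(event: Dict[str, Any]) -> str:
    return log_event(event["correlation_id"], "inventory", "stock updated",
                     order_id=event["order_id"])


# The ID is generated once, at the edge, and passed along inside the event.
event = {"correlation_id": str(uuid.uuid4()), "order_id": "A-1001"}
lines = [payment_function(event), inventory_function(event)]
```

Searching the logs for a single `correlation_id` then reconstructs the path of one request across every function it touched.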

Mastering these hyper-scalable patterns is the key to building the next generation of fast, efficient, and cost-effective applications.

Implementing Hybrid and Multi-Cloud Strategies

Once you've gotten your feet wet with a single cloud provider, you'll quickly realize that a one-size-fits-all approach doesn't always work. Many organizations are moving past single-provider setups and embracing more sophisticated cloud architecture patterns like hybrid and multi-cloud. These strategies are all about meeting specific business, regulatory, and technical needs, but they come with a big catch: complexity. This isn't just about using more clouds; it's a strategic shift toward building a purpose-built technology ecosystem.

A hybrid cloud strategy is far more than just duct-taping old systems to new ones. It’s a deliberate architectural choice that blends your private infrastructure—whether it's on-premises hardware or a private cloud—with public cloud services. The whole point is to get the best of both worlds: the tight security and control of a private environment fused with the incredible scale and innovation of the public cloud.

The Practicality of Hybrid Cloud

Think about a large financial institution. It’s bound by strict regulations to keep its most sensitive client data tucked away in its own on-premises data center. At the same time, it wants to run a massive fraud detection platform that needs enormous computing power on demand—something that would be wildly expensive to build and maintain in-house.

This is a textbook case for a hybrid architecture:

- On-Premises: The crown jewels—the core database with all the sensitive customer PII—stay locked down in the private data center.

- Public Cloud: Anonymized transaction data gets streamed to a public cloud provider. There, it's fed into powerful, managed machine learning services for large-scale analysis.

This setup lets the institution stay compliant while tapping into the elastic resources needed for heavy-duty computation. The two environments are stitched together with a secure, high-speed link like a VPN or a dedicated interconnect, making them operate as a single, cohesive system.
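As a rough sketch of the step that sits between the two environments, the Python below strips direct identifiers from a transaction and replaces the customer ID with a keyed hash before the record leaves the data center. The field names and the `pseudonymize` function are hypothetical. Note that keyed hashing is pseudonymization, which is weaker than full anonymization; whether it satisfies a given regulation is a question for compliance teams, not something this example settles.

```python
import hashlib
import hmac
from typing import Any, Dict

# Hypothetical field names; a real schema will differ.
PII_FIELDS = {"name", "email", "account_number"}


def pseudonymize(transaction: Dict[str, Any], secret_key: bytes) -> Dict[str, Any]:
    """Drop direct identifiers and swap customer_id for a keyed hash."""
    cleaned = {
        key: value
        for key, value in transaction.items()
        if key not in PII_FIELDS and key != "customer_id"
    }
    # The key stays on-premises, so the token cannot be reversed in the cloud.
    cleaned["customer_token"] = hmac.new(
        secret_key,
        str(transaction["customer_id"]).encode("utf-8"),
        hashlib.sha256,
    ).hexdigest()
    return cleaned


key = b"example-key-kept-on-premises"
record = pseudonymize(
    {"customer_id": 1, "name": "Ada", "email": "ada@example.com", "amount": 10.0},
    key,
)
print(record)
```

The same customer always maps to the same token, so the fraud model can still link transactions together without ever seeing who the customer is.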

Gaining an Edge with Multi-Cloud

While hybrid cloud is about mixing private and public, multi-cloud is about using services from more than one public cloud provider, like AWS, Google Cloud, and Azure. This isn't about simply duplicating your setup in another cloud for backup. It's a much savvier play: strategically picking the best-in-class service from each vendor to assemble a superior solution.

For instance, you might see a development team build an application that uses:

- Google Cloud's BigQuery for its unparalleled power in large-scale analytics.

- Amazon Web Services' Lambda for its mature, battle-tested, and cost-effective serverless functions.

- Azure's AI Services for specific cognitive computing features the team needs and can't find a good match for elsewhere.

This "poly-cloud" approach helps you avoid getting locked into one vendor's ecosystem and lets you optimize every part of your stack for performance, features, and cost. It’s definitely an expert-level strategy, but it gives your teams the freedom to use the absolute best tool for every job.

The core principle of multi-cloud is choice. By refusing to be confined to a single ecosystem, you empower your teams to build more powerful and cost-effective applications, but this freedom comes at the cost of increased operational complexity.

Overcoming Key Implementation Challenges

Let's be clear: adopting these advanced patterns isn't a walk in the park. Every time you connect different environments, you create new seams that can introduce security gaps and a ton of management overhead. The headaches of a distributed setup are real, and the first step to a successful rollout is knowing what you're up against. As discussed in our previous post on cloud migration challenges, many of these issues require careful planning.

Here is some actionable insight on the biggest hurdles you'll face:

- Unified Identity and Access Management (IAM): To avoid chaos, don't manage permissions separately in each cloud. Implement a centralized identity provider (like Okta or Microsoft Entra ID, formerly Azure AD) that federates identity across all your environments, ensuring a single source of truth for user access.

- Consistent Security Posture: A security policy that works perfectly in one cloud might be useless in another. Use a Cloud Security Posture Management (CSPM) tool to enforce a consistent security baseline and automate compliance checks across all clouds.

- Network Complexity: Weaving together multiple clouds and data centers requires some serious networking skills. You have to keep a close eye on data transit costs and fight to keep latency low.

- Data Governance: When your data lives in multiple places governed by different rules, just tracking its lineage and ensuring compliance becomes significantly harder.

To truly succeed with hybrid and multi-cloud, you have to stop thinking about individual components and start thinking like an architect. Security, governance, and operations can't be afterthoughts; they have to be designed to span every single environment from day one.

How to Choose the Right Cloud Architecture

Picking the right cloud architecture isn't about finding a single "best" pattern. It’s more like a strategic puzzle: you need to find the technical blueprint that perfectly fits your business goals, budget, and the actual skills your team has on hand.

Forget rigid checklists. The real goal is to turn architectural theory into something that creates genuine business value. Think of it this way: are you a startup hustling to get a Minimum Viable Product (MVP) out the door? Speed is your north star, and a monolith might be your best friend. Or are you an enterprise building a massive IoT platform where different parts need to scale on their own? An event-driven, microservices-based design is probably a much smarter bet.

Key Questions to Guide Your Decision

Before you commit to a specific architecture, get your team in a room (virtual or otherwise) and hash out the answers to these fundamental questions. Your answers will paint a clear picture of what your project truly needs.

- What is our team's skillset? Does your team live and breathe distributed systems, or are they more comfortable with a single, unified codebase? The operational complexity of microservices can easily overwhelm a team that isn't ready for it.

- What are our scalability needs? Do you expect growth to be pretty uniform across the board? Or will certain features (like video processing) need to scale massively while others (like user authentication) stay relatively stable? This is a classic reason teams move away from monoliths.

- How critical is time-to-market? If your number one priority is getting a product in front of users as fast as possible, the sheer simplicity of a monolith often wins. More complex patterns can bog you down with a lot of upfront work.

- What are our budget constraints? Serverless can be incredibly cheap for workloads with sudden spikes in traffic, but the pay-per-use model can get surprisingly expensive for applications with constant, heavy traffic. A solid grasp of your financial guardrails is a must, and it's a key part of effective cloud cost optimization.

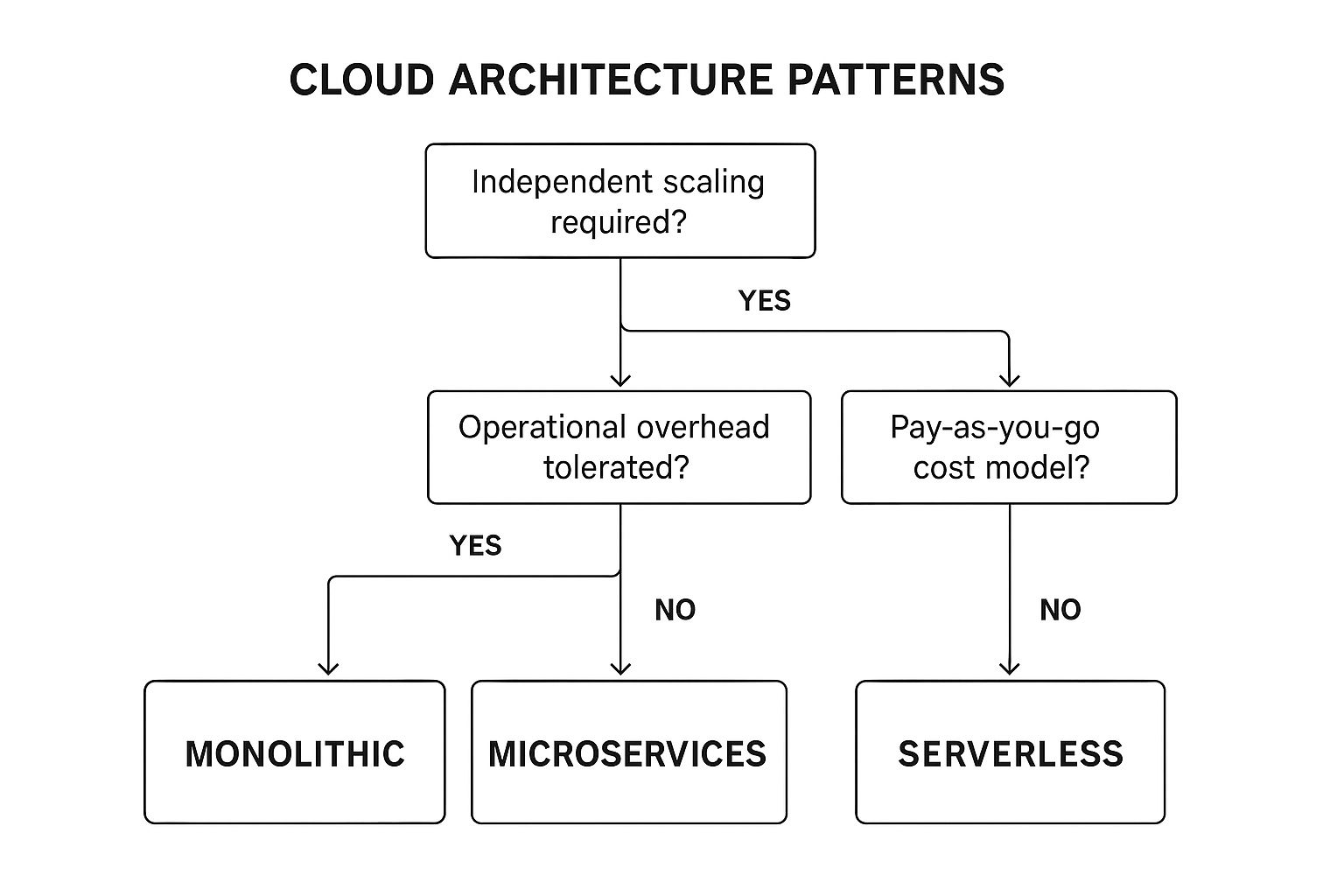

This decision tree gives you a quick visual guide for mapping your needs to a potential architecture based on how you need to scale, what your team can handle, and how the costs will likely play out.

As you can see, if every part of your application can scale together, a monolith is a perfectly fine choice. But if you need granular scaling and your team is ready for the challenge, microservices become a much more attractive option.

From Business Drivers to Architectural Choice

At the end of the day, the best cloud architecture patterns are the ones that directly help your business succeed. This isn't just a nice theory; there's real data to back it up.

A 2024 IEEE Cloud Computing Survey revealed that companies using cloud-native architectures like microservices and serverless achieve deployment cycles that are 40% faster and slash operational costs by 35%. These modern patterns are also unlocking new possibilities. For instance, edge-native architectures are cutting latency by a whopping 65%, which has a direct impact on user engagement. The financial industry has seen a 75% reduction in security incidents by building on cloud patterns that bake in zero-trust security and AI-powered threat detection.

The takeaway is clear: your architectural choices are a direct driver of business performance. You're not just building a system that works; you're building an engine for growth and efficiency.

Decision Matrix for Selecting a Cloud Architecture Pattern

To make this process less abstract and more concrete, use a decision matrix. This simple tool forces you to compare each pattern against your most important criteria, making the trade-offs crystal clear and helping your team get on the same page.

Here’s a sample matrix you can adapt to get started.

| Decision Factor | Monolith | Microservices | Serverless/FaaS | Hybrid Cloud |
|---|---|---|---|---|
| Speed of Initial Development | Very High | Low | High | Medium |
| Operational Complexity | Low | Very High | Medium | High |
| Scalability Granularity | Low | High | Very High | Medium |
| Team Autonomy | Low | High | High | Medium |
| Ideal for Startups/MVPs | Excellent | Poor | Good | Poor |
| Best for Complex Systems | Poor | Excellent | Good | Excellent |
| Infrastructure Cost Model | Fixed/Predictable | High/Complex | Pay-Per-Use | Mixed |

By thoughtfully working through these questions and frameworks, you shift the conversation from abstract ideas to a solid, well-reasoned decision. This empowers your team to choose an architecture that not only solves today's problems but also sets you up for future innovation.
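If you want to turn the matrix into a ranked shortlist, one simple option is weighted scoring. The sketch below encodes four rows of the sample matrix and applies team-specific weights. The numeric scale, the weights, and the `score_patterns` function are all assumptions made for illustration, and treating labels like "High" as numbers is a deliberate simplification, so read the output as a conversation starter rather than a verdict.

```python
from typing import Dict

SCALE = {"Low": 1, "Medium": 2, "High": 3, "Very High": 4}

# Ratings copied from four rows of the sample matrix.
MATRIX: Dict[str, Dict[str, str]] = {
    "Speed of Initial Development": {
        "Monolith": "Very High", "Microservices": "Low",
        "Serverless/FaaS": "High", "Hybrid Cloud": "Medium",
    },
    "Operational Complexity": {
        "Monolith": "Low", "Microservices": "Very High",
        "Serverless/FaaS": "Medium", "Hybrid Cloud": "High",
    },
    "Scalability Granularity": {
        "Monolith": "Low", "Microservices": "High",
        "Serverless/FaaS": "Very High", "Hybrid Cloud": "Medium",
    },
    "Team Autonomy": {
        "Monolith": "Low", "Microservices": "High",
        "Serverless/FaaS": "High", "Hybrid Cloud": "Medium",
    },
}

# For these factors a lower rating is the desirable one.
LOWER_IS_BETTER = {"Operational Complexity"}


def score_patterns(weights: Dict[str, int]) -> Dict[str, int]:
    """Return a weighted score per pattern; higher means a better fit."""
    scores: Dict[str, int] = {}
    for factor, weight in weights.items():
        for pattern, rating in MATRIX[factor].items():
            value = SCALE[rating]
            if factor in LOWER_IS_BETTER:
                value = 5 - value  # invert the 1..4 scale
            scores[pattern] = scores.get(pattern, 0) + weight * value
    return scores


# Hypothetical weights for a startup racing to ship an MVP.
startup_scores = score_patterns({
    "Speed of Initial Development": 5,
    "Operational Complexity": 4,
    "Scalability Granularity": 1,
    "Team Autonomy": 1,
})
print(max(startup_scores, key=startup_scores.get), startup_scores)
```

With these particular weights the monolith comes out on top. Shift the weight toward scalability granularity and team autonomy and the ranking changes, which is exactly the trade-off discussion the matrix is meant to provoke.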

Frequently Asked Questions

Even with a solid grasp of cloud architecture patterns, some questions always pop up when the rubber meets the road. Let's tackle a few of the most common challenges that architects and engineers run into when designing and scaling their systems.

When Should You Break a Monolith into Microservices?

The real answer? You should start thinking about breaking up a monolith when it starts creating genuine business pain, not just because microservices are trendy.

Look for tell-tale signs. Are development cycles grinding to a halt because teams are constantly stepping on each other's toes? Can you no longer scale one specific feature without having to scale the entire, expensive application? These are your cues.

A classic example is an e-commerce platform. During a holiday sale, the promotions engine might get absolutely hammered with traffic, while the rest of the site sees normal load. In a monolithic world, you have to scale everything up—a costly proposition. That's the perfect trigger to carve out the promotions engine into its own microservice.

The best way to do this is with the 'Strangler Fig' pattern. Don't fall into the trap of a "big bang" rewrite. Instead, you strategically peel off one piece of functionality at a time, build it as a new service, and slowly reroute traffic. This approach minimizes risk and delivers value almost immediately.
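In code, the heart of the Strangler Fig approach is a routing facade that sits in front of the monolith. The sketch below is a simplified illustration with hypothetical names (`StranglerFacade`, `legacy_monolith`, `promotions_service`). In practice this role is usually played by an API gateway, reverse proxy, or load balancer rule rather than application code, and traffic is often shifted gradually instead of all at once.

```python
from typing import Callable, Dict

Handler = Callable[[str], str]


def legacy_monolith(path: str) -> str:
    return f"monolith served {path}"


def promotions_service(path: str) -> str:
    return f"promotions microservice served {path}"


class StranglerFacade:
    """Send migrated path prefixes to new services; everything else to the monolith."""

    def __init__(self, legacy: Handler) -> None:
        self.legacy = legacy
        self.migrated: Dict[str, Handler] = {}

    def migrate(self, prefix: str, handler: Handler) -> None:
        """Cut one slice of functionality over to a new service."""
        self.migrated[prefix] = handler

    def handle(self, path: str) -> str:
        for prefix, handler in self.migrated.items():
            if path.startswith(prefix):
                return handler(path)
        return self.legacy(path)


facade = StranglerFacade(legacy_monolith)
before = facade.handle("/promotions/summer")

facade.migrate("/promotions", promotions_service)
after = facade.handle("/promotions/summer")
untouched = facade.handle("/checkout")
print(before, after, untouched, sep="\n")
```

Each migration is a small, reversible routing change: removing the prefix from the facade sends traffic back to the monolith if the new service misbehaves.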

What Is the Biggest Challenge with Serverless Architecture?

Without a doubt, the single biggest headache in a serverless world is observability.

Think about it. In a monolith, tracing a user request is straightforward because everything happens in a single process. But in a serverless architecture, a single click could trigger a complex, branching chain of dozens of independent functions. If just one of those functions fails, finding the root cause can feel like searching for a needle in a haystack.

This means you have to fundamentally change how you approach monitoring and debugging. You can't treat it as an afterthought. You need to invest heavily in structured logging, distributed tracing tools like AWS X-Ray or Jaeger, and comprehensive dashboards from day one.

Another practical hurdle is managing "cold starts": the extra latency a function incurs when the platform has to spin up a fresh execution environment, typically on the first invocation after a period of idleness or when scaling out to handle more concurrent requests. Cloud providers have made huge strides here, but for applications that demand ultra-low latency, it's still a critical factor to design around.
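One widely used mitigation is to do expensive setup once per execution environment instead of once per invocation, so only the cold invocation pays for it. The sketch below simulates that with a module-level cache. The `handler` signature, the fake client, and the 50 ms sleep are hypothetical stand-ins, and how long a warm environment is kept alive is decided by the platform, not by your code.

```python
import time
from typing import Any, Dict, Optional

# Module-level state survives for as long as the execution environment stays warm.
_client: Optional[Dict[str, Any]] = None
init_count = 0


def get_client() -> Dict[str, Any]:
    """Create the expensive client lazily and reuse it on warm invocations."""
    global _client, init_count
    if _client is None:
        time.sleep(0.05)  # stand-in for slow setup such as opening connections
        _client = {"connected": True}
        init_count += 1
    return _client


def handler(event: Dict[str, Any]) -> Dict[str, Any]:
    client = get_client()
    return {"ok": client["connected"], "order_id": event["order_id"]}


first = handler({"order_id": "A-1"})   # cold: pays the setup cost
second = handler({"order_id": "A-2"})  # warm: reuses the cached client
print(first, second, init_count)
```

This does not eliminate cold starts, but it keeps their cost from being repeated on every request that lands on an already-warm environment.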

Is a Multi-Cloud Strategy Always Better Than Single-Cloud?

Absolutely not. While a multi-cloud strategy can be incredibly powerful, it’s not a one-size-fits-all solution. It introduces a massive amount of operational complexity and can easily drive up costs if you're not careful.

A multi-cloud approach is only "better" if the benefits clearly outweigh the headaches. These benefits usually boil down to two things:

- Using a "best-in-class" service from a specific provider (e.g., Google's BigQuery for data warehousing).

- Avoiding vendor lock-in and improving negotiation leverage.

For most organizations, especially small to medium-sized businesses, sticking to a single cloud is far more practical. It allows your team to build deep platform expertise, simplifies your security and operations, and helps you take advantage of volume-based discounts. As we've covered in other DataNizant articles, you should only go multi-cloud when there's a specific, compelling business driver that justifies the extra management burden.

At DATA-NIZANT, we provide expert analysis on AI, data science, and the digital infrastructure that powers them. Our in-depth articles help you understand complex technical trends and make strategic decisions. Explore more insights at https://www.datanizant.com.