Let's get straight to it: modern data architecture is a flexible, scalable way of managing data that helps you turn raw information into your most valuable business asset.

Forget the old, rigid data warehouses of the past. Those were like a central library with a single, overworked librarian trying to manage everything. Instead, imagine a network of specialized, connected libraries, each run by an expert who knows their subject inside and out. That's the modern approach. This guide will give you actionable insights and practical examples to build or transition to a modern data architecture that delivers real business value.

Breaking Down Modern Data Architecture

At its heart, a modern data architecture solves a very specific business problem: how to make sense of massive, messy, and constantly flowing streams of data. Traditional systems were built for a simpler time when data was structured and predictable. But today, businesses are swimming in information from every direction—social media feeds, IoT sensors, website user activity, and countless third-party apps.

This modern approach isn't just a technical upgrade; it's a fundamental shift in how a company thinks about its data. It moves away from a top-down, monolithic model where a central IT team acts as a gatekeeper, often causing frustrating bottlenecks. The new paradigm champions a decentralized, collaborative ecosystem where data is accessible, trustworthy, and owned by the teams who actually understand its business context.

The Driving Force Behind Modernization

So, what's behind the big push to modernize? It really comes down to the painful limitations of older systems. Traditional architectures just can't keep up with the demands of today's analytics. They're often sluggish, incredibly expensive to scale, and far too rigid to integrate new data sources or power sophisticated machine learning models.

The market numbers tell the story. The global market for data architecture modernization was valued at roughly $8.8 billion in 2024 and is expected to surge to over $24.4 billion by 2033. That growth, fueled by a 12% compound annual growth rate (CAGR), signals a clear global trend: companies are ditching legacy systems to stay competitive. You can dig deeper into these market projections and their underlying factors.

This investment isn't just about swapping out old tech for new. It's about unlocking critical business outcomes:

- Faster Decision-Making: Giving teams direct access to real-time, reliable data.

- Increased Agility: Empowering the business to pivot quickly and act on new opportunities.

- AI and ML Readiness: Building the solid foundation needed to train and deploy advanced analytical models.

- Reduced Operational Costs: Shifting from pricey on-premise hardware to more efficient and scalable cloud solutions.

Actionable Insight: A modern data architecture isn’t a product you buy off the shelf. It’s a design philosophy that weaves together technology, processes, and people to create a responsive, intelligent data ecosystem. The goal is to make data a reliable, self-serve product for everyone in the organization.

For instance, at Datanizant, we've explored how concepts like Data Fabric and Data Mesh fit into this picture. These aren't just buzzwords; they are specific strategies within a modern architecture designed to decentralize data ownership and improve access, perfectly illustrating the move from a rigid monolith to a flexible ecosystem.

Traditional vs. Modern Data Architecture

To really grasp the shift, it helps to see the old and new approaches side-by-side. The move from a "monolith" to an "ecosystem" changes everything from how data is stored to who can access it.

| Characteristic | Traditional Architecture (The Monolith) | Modern Architecture (The Ecosystem) |

|---|---|---|

| Primary Goal | Centralized reporting and business intelligence (BI). | Real-time analytics, AI/ML, and self-service data access. |

| Data Structure | Highly structured, schema-on-write (ETL). | Structured, semi-structured, and unstructured data. |

| Scalability | Vertical, expensive, and difficult to scale. | Horizontal, elastic, and cost-effective (cloud-native). |

| Data Access | Tightly controlled by a central IT team; creates bottlenecks. | Decentralized, democratized access for various teams. |

| Processing | Batch processing, with high latency. | Real-time stream processing and batch processing. |

| Key Technologies | On-premise data warehouses, relational databases. | Cloud data lakes, data lakehouses, streaming platforms. |

| Flexibility | Rigid and slow to adapt to new data sources. | Agile and flexible, easily integrates new technologies. |

As you can see, the modern approach is built for speed, scale, and flexibility—the exact things businesses need to thrive in a data-driven world. It's less about building a single, perfect source of truth and more about creating a resilient network that can adapt to whatever comes next.

The Core Principles Driving Modern Architectures

To really get what makes a modern data architecture tick, we have to look beyond the shiny new tools. The real magic lies in the core principles that give these systems life. Think of these less as rigid technical specs and more as a cultural and organizational mindset—one that shifts from centralized, top-down control to decentralized empowerment.

This isn't just a niche trend; it’s a seismic shift in the industry. In 2023, the market for data architecture modernization was already valued at $8.32 billion, and it's projected to explode to $38.2 billion by 2033. This massive growth is fueled by the fact that over 85% of companies now have dedicated budgets for these exact kinds of projects. The old ways just aren't cutting it anymore. You can dig deeper into these market growth projections and their drivers.

These guiding ideas are the "why" behind the "what" of modern data systems. Let's break them down.

Data as a Product

The first and most fundamental principle is treating data as a product. For decades, we’ve treated data as an exhaust fume—a technical byproduct of our business operations. This new mindset flips that script entirely, viewing datasets as valuable products built for a consumer, who just happens to be another team inside the company.

Think about it. A product team would never ship an app without proper documentation, quality assurance, or a clear owner responsible for its success. Why should data be any different?

Actionable Insight: A data product isn't just raw data. It’s an asset that is cleaned, well-documented, trustworthy, and explicitly designed to solve a business problem for its consumers. This approach ensures data quality and reusability across the entire organization.

Domain-Oriented Ownership

This principle is a direct solution to the classic bottleneck problem created by traditional, centralized data teams. Domain-oriented ownership distributes the responsibility for data to the business domains that know it best.

Simply put, the people who create and live with the data every day are put in charge of it. The marketing team owns its customer campaign data. The finance team owns its transactional data. The logistics team owns its supply chain data. It just makes sense, because these teams have the deep, contextual knowledge to ensure their data is accurate, relevant, and meaningful.

A Practical Example:

Imagine an e-commerce company where a single, overwhelmed IT team manages all data. If the marketing department needs a new report on customer lifetime value, they file a ticket and get in line. But with domain ownership, the marketing team’s own data experts can build, manage, and share a "Customer 360" data product themselves, making it instantly available for analysis without the long wait times.

The Self-Serve Data Platform

The third principle is the glue that holds everything together: the self-serve data platform. This is the underlying set of tools and infrastructure that enables domain teams to build, share, and consume data products on their own. It’s essentially "Platform-as-a-Service," but built specifically for data.

The platform provides the common, standardized capabilities every domain needs, which removes technical friction and lets them focus on creating value. These capabilities usually include:

- Data Storage: A shared space to store data products, like a data lakehouse.

- Ingestion Tools: Simple ways to get data into the platform.

- Transformation Engines: Tools for cleaning and modeling raw data into polished products.

- Data Catalog: A searchable library to discover available data products.

- Access Controls: A standardized way to handle security and permissions.

As we've covered in other Datanizant posts, like our breakdown of data fabric and data mesh architectures, building a solid self-serve platform is non-negotiable. It’s what empowers teams to move fast, accelerate insights, and drive real business value without getting bogged down by technical plumbing.

The Building Blocks of a Modern Data Platform

If the principles we just covered are the "why" behind a modern data architecture, then the core technologies are the "how." It's time to pop the hood and see what this powerful ecosystem is actually made of. This isn't just a shopping list of tools; it’s a look at how these components work together to make principles like domain ownership and self-service a reality.

Think of it like building a high-performance engine. You need distinct parts—a fuel injector, pistons, a cooling system—and each has to do its job perfectly while integrating with everything else. The same goes for a modern data platform, where each layer of technology plays a very specific and critical role.

The move to these integrated, cloud-native platforms has become a huge marker of enterprise maturity. Modern architectures are now the backbone of digital-first companies, with the top five cloud data warehouse solutions seeing a combined growth rate of over 30% year-over-year in major markets like the US, EU, and Asia. You can get more details on this trend from these key cloud market insights.

Data Storage: The Foundation

At the very bottom of any modern setup is the storage layer, which has come a long way. Two models have traditionally driven the conversation, each with its own clear strengths.

-

Cloud Data Lakes: Picture a massive, natural reservoir that can hold any kind of water—clean, muddy, from rivers, from rain. A cloud data lake (like Amazon S3) is a lot like that. It stores huge volumes of raw, unstructured, and semi-structured data in its original format. It’s incredibly cost-effective and flexible, which makes it perfect for data scientists who need to sift through raw information for things like machine learning.

-

Cloud Data Warehouses: In contrast, a data warehouse (like Snowflake or Google BigQuery) is more like a water bottling plant. It takes in structured data that has already been cleaned up and processed, then organizes it for lightning-fast analysis. This has been the traditional home for business intelligence (BI) and reporting, where analysts need quick, dependable answers to specific questions.

The Rise of the Lakehouse Architecture

For years, companies had to pick between the raw flexibility of a data lake and the structured performance of a data warehouse. Many ended up maintaining both, which was expensive and a huge headache. The Lakehouse architecture came along as a game-changer, blending the best of both worlds.

A lakehouse basically builds data warehouse-like structures and management features right on top of the cheap, flexible storage of a data lake. This unified approach gives organizations a single system for everything—from data science on raw files to high-speed BI on polished tables—dramatically simplifying the entire architecture.

Actionable Insight: A Lakehouse architecture breaks down data silos by creating a single source of truth for all kinds of analytical needs. It truly supports the "Data as a Product" principle by letting teams refine raw data into trusted, queryable assets all within one platform.

Core Functional Layers

With the storage foundation set, several other functional layers are needed to build a true self-serve platform.

1. Data Ingestion

This is the front door to your entire architecture. Ingestion tools are responsible for pulling data from hundreds of different sources—think Shopify, Salesforce, Google Ads, or internal databases—and loading it into your data lake or warehouse.

- Practical Example: A tool like Fivetran completely automates this. You just give it your credentials for a source like Shopify, and it automatically builds and maintains a pipeline that keeps your sales, customer, and product data flowing into your warehouse. We walk through how these systems work in our guide on how to create automated data pipelines.

2. Data Transformation

Once the raw data lands, it’s almost always messy. The transformation layer is where all the cleaning, modeling, and shaping happens to get it ready for analysis. This is where you actually build your "data products."

- Practical Example: The undisputed leader in this space is dbt (Data Build Tool). It lets analysts and engineers transform data using simple SQL

SELECTstatements they already know. An e-commerce company could use dbt to join raw sales data with customer info to create a clean, trustedcustomer_360model that the whole business can rely on.

3. Orchestration and Automation

Finally, an orchestration engine acts as the conductor for your data orchestra. It makes sure all these different jobs run in the right order, on schedule, and knows how to handle any failures gracefully.

- Practical Example: A tool like Apache Airflow lets you define your entire data workflow as code. You could schedule your Fivetran ingestion to run daily at 1 a.m., then automatically trigger your dbt models to run right after. This ensures that by the time your team logs on, their BI dashboards are filled with fresh, reliable data.

Understanding Data Mesh and Data Fabric

When you start digging into modern data architecture, two terms pop up constantly: Data Mesh and Data Fabric. They might sound similar, and they both aim to solve the chaos of distributed data, but they get there in fundamentally different ways. Nailing down their differences is the key to picking the right path for your organization.

At its heart, a Data Mesh is really a socio-technical strategy. It's less about a specific tool and more about flipping the organizational model for data on its head. The core idea is to stop funneling everything through a central IT or data team and instead push data ownership out to the business domains that actually create and understand the data.

This model treats data as a first-class product. Each domain team becomes responsible for delivering high-quality, secure, and ready-to-use data products to everyone else.

The Data Mesh in Action

Think about a big financial institution. In the old world, teams like retail banking, investment banking, and fraud detection would all line up at the door of a central data engineering team, creating a huge bottleneck.

A Data Mesh breaks that logjam.

- Practical Example: The Retail Banking domain takes ownership of its customer transaction data, building and maintaining a "Daily Customer Transactions" data product. The Investment Banking team owns its market trade data, offering a "Real-Time Trade Feed" as its data product. The Fraud Detection team can then simply consume these products to train its own sophisticated analysis models.

Each team is accountable for the quality and accessibility of its own data products, all while operating under a shared governance framework and using a self-service data platform. This empowers teams to move much faster and builds a genuine culture of data accountability.

What Is a Data Fabric?

A Data Fabric, on the other hand, is all about the technology. It’s an architectural layer that leans on intelligent metadata, AI, and automation to spin up a single, virtual view of all your data—no matter where it’s physically stored. Instead of reorganizing your teams, a Data Fabric connects your existing, scattered data sources through an intelligent "fabric."

You can think of it like a smart data catalog that's been supercharged. It automatically finds, catalogs, and enriches metadata from everywhere—your data lakes, warehouses, and operational databases. This active metadata then becomes the engine that makes it easy for anyone to find, access, and integrate the data they need without getting bogged down in its physical location or underlying complexity.

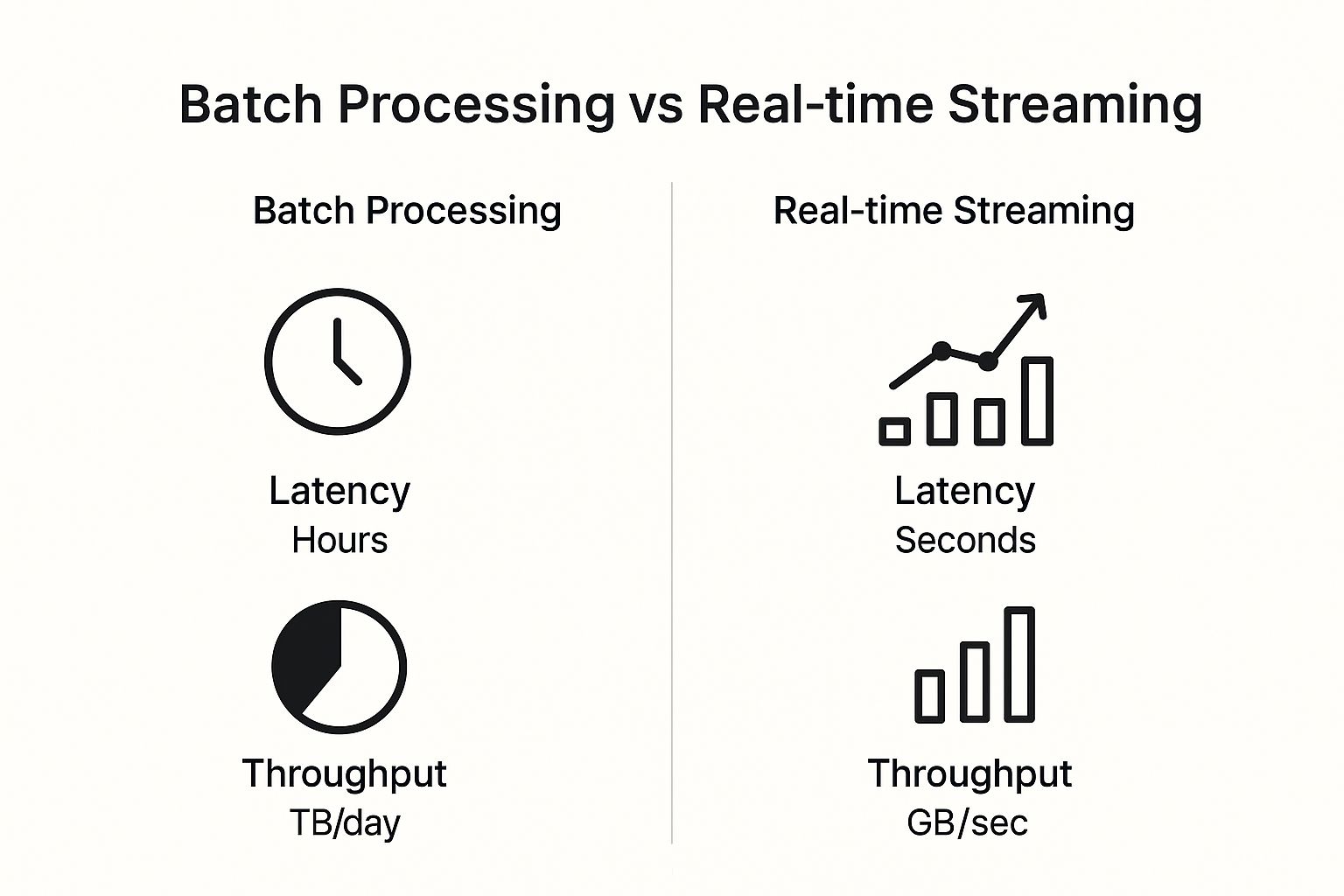

The image above does a great job showing how different data processing needs, from batch to real-time streaming, fit within these modern architectures. It highlights the constant trade-off between latency and throughput, and how today's systems are built to handle both.

Actionable Insight: A Data Fabric is designed to automate data integration and delivery, creating a frictionless, self-service experience for data consumers. It focuses on augmenting the existing data landscape with an intelligent layer rather than re-architecting teams and ownership.

Data Mesh vs. Data Fabric at a Glance

To quickly see how these two concepts differ, this table breaks down their core philosophies and ideal use cases.

| Aspect | Data Mesh | Data Fabric |

|---|---|---|

| Core Philosophy | Decentralized ownership (socio-technical) | Centralized metadata, virtualized access (technology-driven) |

| Focus | People, process, and domain-oriented data products | Technology, automation, and intelligent metadata management |

| Implementation | Organizational restructuring, creating domain data teams | Deploying a technology layer over existing infrastructure |

| Ownership | Data is owned by the business domains that produce it | Data ownership remains distributed; access is unified |

| Best For | Large, complex organizations with distinct business domains | Organizations wanting to unify access to diverse, siloed data |

While Data Mesh and Data Fabric tackle decentralization from different angles—one focusing on people and process, the other on technology—they aren't mutually exclusive. In fact, many organizations find that a Data Fabric can be the perfect technological backbone for a broader Data Mesh initiative.

To learn more, check out our complete guide on Data Fabric and Data Mesh for modern applications, where we break down exactly how these powerful architectures can work together.

How to Modernize Your Data Architecture

Trying to modernize a data architecture can feel like you’re being asked to boil the ocean. It's a massive undertaking, and the natural instinct is often to plan a massive, all-at-once "big bang" project. That's usually a recipe for disaster.

A much better approach is to think like a startup. Start small, aim for a quick win, and use that success to build momentum. The key is to follow a pragmatic, step-by-step framework that proves value from day one, rather than trying to replace everything at once. This isn't about one huge project; it's about a series of focused, iterative steps that solve real business problems.

Start with a Pain Point Assessment

Before you even think about new tools or write a single line of code, you need to ground yourself in the business. Where is the current data architecture causing the most friction? Go talk to your stakeholders—the marketing analysts, the finance team, the product managers. Your goal is to find their biggest data-related headaches.

You'll hear complaints that sound a lot like this:

- "It takes the data team two weeks to pull the quarterly sales report, so by the time we get it, the data is already stale."

- "We can't seem to connect our website clickstream data with our CRM data, so we have no idea what the full customer journey looks like."

- "Our fraud detection models are just too slow. They can't catch suspicious transactions in real time."

Listen for a specific, high-impact problem. You're looking for that sweet spot: a challenge that is significant enough to make a difference but small enough that you can actually solve it in a reasonable timeframe.

Define a Pilot Project with Clear Metrics

Once you've zeroed in on a pain point, it's time to frame a pilot project around it. Think of this as your first "data product." This project needs a tightly defined scope and, most importantly, success criteria you can actually measure. Vague goals like "improve analytics" won't cut it. You need concrete, undeniable metrics.

Actionable Insight: A successful pilot project becomes your internal case study. It’s the hard proof that a modern data architecture delivers real-world value, which makes it far easier to get the buy-in and investment you need to continue.

Practical Example: If the problem is slow reporting, your success metrics could be:

- Reduce the time to generate the quarterly sales report from two weeks to under one hour.

- Enable self-service access to sales data, cutting down ad-hoc ticket requests by 50%.

Select a Foundational Tech Stack

With a clear goal and measurable outcomes, you can finally start thinking about technology. But remember, you don't need a massive, all-encompassing suite of tools for your pilot. Just pick a lean, foundational stack that directly solves the problem at hand.

A typical starter stack for a pilot project often looks something like this:

- A Cloud Data Lakehouse: A platform like Snowflake, Databricks, or Google BigQuery is perfect for storing and processing your data.

- An ELT Tool: A service like Fivetran or Airbyte helps automate the painful process of getting data from your key sources into your lakehouse.

- A Transformation Tool: Using dbt has become the standard for modeling raw data into clean, reliable data sets that people can actually trust and use.

- A BI or Analytics Tool: This is the finish line—the interface like Tableau or Power BI where your end-users will finally get their hands on the data.

The name of the game here is agility. You want tools that are easy to set up and get running, so your team can deliver results quickly without getting bogged down in complex infrastructure. As you scale, you can always expand this stack to handle more advanced use cases. For instance, down the line, you might want to explore how to fine-tune large language models on the pristine data products you've created.

Iterate and Champion Your Success

Once your pilot project is live and hitting its metrics, your job isn't over. In fact, one of the most important parts is just beginning. You need to become an evangelist for this new way of working.

Share your success story far and wide within the organization. Make a presentation. Write an internal blog post. Showcase the metrics you achieved—the hours saved, the new insights uncovered, and the tangible business impact.

Use that momentum to identify the next big pain point and kick off your second data product. By repeating this cycle, you'll incrementally expand the footprint and value of your modern data architecture, replacing old, clunky legacy systems piece by piece with proven, high-impact solutions that people actually love to use.

From Theory to Reality: A Modernization Story

Principles and diagrams are great, but nothing drives home the value of a modern data architecture like a real-world story. Let's follow the journey of "UrbanBloom," a fictional (but very familiar) e-commerce company, to see how these concepts create real business impact.

UrbanBloom was hitting a wall. Like many growing companies, they had plenty of valuable data, but it was all locked up in different places. Customer orders were in Shopify, website clicks lived in Google Analytics, and their inventory data was stuck in a separate ERP system.

The result? A completely fractured view of their business. The marketing team couldn’t personalize campaigns because they couldn't connect a customer's ad click to their final purchase and lifetime value. Answering a simple question like, "Which marketing channels bring in our most profitable, repeat customers?" turned into a nightmare. It meant days of manually exporting CSV files and trying to stitch them together in spreadsheets. The reports were slow, always outdated, and often wrong.

The Pilot Project That Changed Everything

The leadership team knew they were flying blind and decided it was time for a change. But instead of trying to fix everything at once—a classic mistake—they took a smarter, more focused approach. They launched a pilot project with a single, clear goal: build a reliable "Customer 360" view to finally answer that profitability question and give marketing the insights they desperately needed.

Here’s the practical example of how they did it, step-by-step:

-

Laying the Foundation: They chose a cloud-based Lakehouse architecture. This was the perfect fit, giving them a cheap way to dump all their raw data from different sources while also providing a powerful query engine for their analytics and BI tools.

-

Automating the Data Flow: Next, they set up an ELT tool to automatically pull data from Shopify, Google Analytics, and their ERP. This simple move killed the dreaded manual CSV export process. Now, a steady, reliable stream of raw data flowed into their new Lakehouse without anyone lifting a finger.

-

Creating a Trusted Data Product: This is where the magic really happened. Using a tool called dbt (Data Build Tool), their small data team started transforming the raw, messy data. They cleaned it, joined tables, and applied business logic to build a set of trusted data models. The star of the show was their very first data product: a clean, reliable

customer_360table.

This

customer_360model wasn't just another table in a database. It was a reusable, trustworthy asset. For the first time, it combined a customer's entire journey—purchase history, website behavior, and marketing touchpoints—into a single, unified view that everyone in the company could depend on.

The Payoff: Actionable Insights and Real Growth

With this new data asset ready, UrbanBloom plugged it into their business intelligence (BI) tool. The change was immediate. The marketing team could now build dashboards that answered their questions in minutes, not weeks. They could finally see which ad campaigns were actually making money and which were just attracting low-value, one-time buyers.

But they didn't stop there. This customer_360 asset became the engine for even more powerful applications. They connected it to their website's product recommendation engine, which began suggesting items based on a customer's entire history, not just what they clicked on in that session. This single change boosted their average order value by 15% in the first quarter alone.

UrbanBloom’s story is a perfect example of why modernizing your data architecture isn't just some abstract IT project. It’s a practical journey of finding a real business pain point, using modern tools to build a trusted asset, and then using that asset to drive measurable growth.

Common Questions About Modernizing Data Architecture

Kicking off a data architecture modernization project always brings a few tough—but critical—questions to the surface. It’s only natural. Leaders and technical teams both need straightforward answers before they can move forward with real confidence. Let's dig into some of the most common things we hear.

How Do I Justify the Cost to Leadership?

Getting the budget approved is often the first and biggest hurdle. The trick is to stop talking about it like a technology expense. Instead, frame it as a strategic investment with a clear, measurable return.

I always recommend focusing on three concrete areas:

- Operational Efficiency: Show them the money. Calculate the hours your teams will save by automating tedious manual reporting or finally clearing out those data engineering bottlenecks.

- New Revenue Opportunities: Connect the dots between better data and business growth. Show how faster, more reliable insights can power things like hyper-personalization or even entirely new data-driven products.

- Risk Reduction: This one gets executives’ attention. Explain how modern systems don't just improve security, but also ensure compliance and make critical business metrics far more reliable.

Can a Small Business Actually Do This?

Absolutely. Modernization isn't just a game for massive enterprises anymore. In fact, smaller, more agile businesses can often get this done much faster. The key is to start lean and take advantage of scalable, pay-as-you-go cloud services.

Practical Example: Think about a small e-commerce shop. They don't need to build a gigantic, complex platform on day one. They can start with a simple cloud data warehouse and an automated ELT tool to solve one high-impact problem, like finally getting a single, unified view of their customers.

Actionable Insight: The biggest mistake organizations make is focusing exclusively on the technology while completely ignoring the people and process changes required. A modern data architecture is a socio-technical shift; without new skills, new roles, and a new mindset, even the best tools will fail to deliver value.

This kind of cultural shift is held up by strong data governance. You can’t succeed without establishing clear rules and responsibilities, which is why a solid framework is non-negotiable. To get started, you can explore a sample data governance policy to see what those foundational elements look like in practice.

At DATA-NIZANT, we provide expert-authored articles and in-depth analysis to help you navigate complex topics in AI, data science, and digital infrastructure. Our goal is to equip you with the actionable intelligence needed to drive transformative outcomes. Explore our insights at https://www.datanizant.com.