Introduction: A Decade of Big Data Blogging

When I began writing about Big Data in 2013, it was an exciting new frontier in data management and analytics. My first blog, What’s So BIG About Big Data, introduced the core pillars of Big Data, the “4 Vs”: Volume, Velocity, Variety, and Veracity. As the years passed, I expanded into related topics with posts like Introduction to Hadoop, Hive, and HBase; Data Fabric and Data Mesh; and Introduction to Data Science with R & Python. Each blog marked the evolution of Big Data and reflected the shifting focus in the field as data…

-


Welcome to an exciting new chapter in exploring the world of AI, Machine Learning (ML), and Data Science! Over the years, I have posted on a variety of topics, covering everything from Python basics to the intricacies of neural networks. But now, it’s time for something bigger—a cohesive, structured series that will demystify these domains, guiding you step-by-step from foundational concepts to advanced applications. In this revamped series, I will reorganize my previously published blogs, presenting them in a logical progression so you can easily follow along, regardless of your current experience level. Alongside these, I’ll also introduce new posts…

-

Introduction

Back in 2013, I began blogging about Big Data, diving into the ways massive data volumes and new technologies were transforming industries. Over the years, I’ve explored various aspects of data management, from data storage to processing frameworks, as these technologies have evolved. Today, the conversation has shifted towards decentralized data architectures, with Data Fabric and Data Mesh emerging as powerful approaches for enabling agility, scalability, and data-driven insights. In this blog, I’ll discuss the core concepts of Data Fabric and Data Mesh, their key differences, and their roles in modern applications. I’ll also share a bit of my…

-

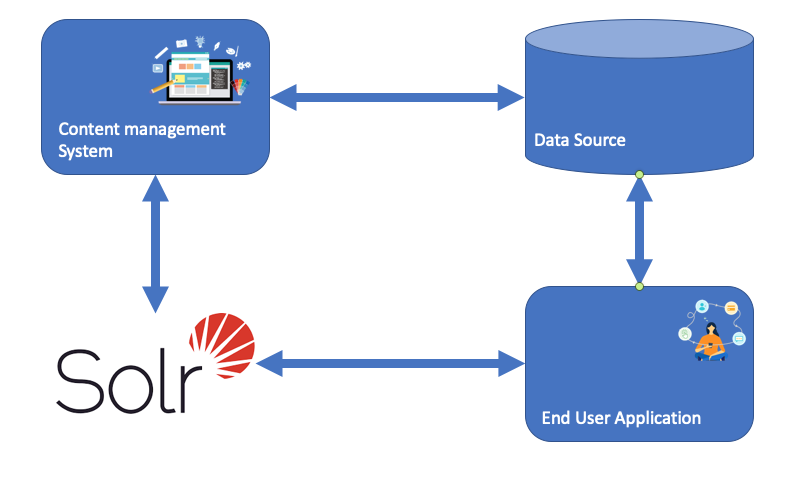

Solr is the popular, blazing-fast, open-source enterprise search platform built on Apache Lucene™. Here is an example of how Solr might be integrated into an application.

This blog has a curated list of Solr packages and resources. It starts with how to install Solr and then shows some basic implementation and usage.

Installing Solr

To install on my Mac, I typically use Homebrew. First, update your brew:

    brew update
    Updated Homebrew from 37714b5ce to 373a454ac.

Then install Solr:

    brew install solr

However, this time I am going to show a step-by-step installation on Mac as explained in…
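Once Solr is running, an application typically talks to it over HTTP. The Python sketch below only builds a query URL for Solr's standard `/select` request handler; it does not contact a server. It assumes a local Solr on the default port 8983 and a core named `techproducts` (the name of Solr's bundled example; substitute your own). The helper `build_solr_select_url` is hypothetical.

```python
from urllib.parse import urlencode


def build_solr_select_url(base_url: str, core: str, query: str, rows: int = 10) -> str:
    """Build a /select URL for a Solr core (hypothetical helper, no network call).

    Assumes a local install such as http://localhost:8983 and an existing core.
    """
    params = {
        "q": query,    # Solr/Lucene query syntax, e.g. "name:ipod" or "*:*"
        "rows": rows,  # maximum number of documents to return
        "wt": "json",  # response writer: request JSON output
    }
    # urlencode percent-encodes reserved characters such as ':' and '*'
    return f"{base_url.rstrip('/')}/solr/{core}/select?{urlencode(params)}"


url = build_solr_select_url("http://localhost:8983", "techproducts", "name:ipod", rows=5)
print(url)
```

With Solr actually running, passing this URL to `urllib.request.urlopen` and parsing the body with `json.load` should yield a JSON response whose matching documents sit under `response.docs`.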

-

Elasticsearch is a search engine based on the Lucene library. It provides a distributed, multitenant-capable full-text search engine with an HTTP web interface and schema-free JSON documents. Elasticsearch is developed in Java. Following an open-core business model, parts of the software are licensed under various open-source licenses (mostly the Apache License),[2] while other parts fall under the proprietary (source-available) Elastic License. Official clients are available in Java, .NET (C#), PHP, Python, Apache Groovy, Ruby, and many other languages. According to the DB-Engines ranking, Elasticsearch is the most popular enterprise search engine, followed by Apache Solr, which is also based on Lucene.

Original author Shay Banon talking about Elasticsearch at Berlin Buzzwords 2010

- Initial release: 8 February 2010
- Written in: Java
- License: Various (open-core model), e.g. Apache License 2.0 (partially; open source), Elastic License (proprietary; source-available)
- Website…
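To make "HTTP web interface and schema-free JSON documents" concrete, here is a Python sketch that builds the requests a client would send. The `_doc` and `_search` endpoints and the `match` query are part of Elasticsearch's REST API and Query DSL (7.x and later); the index name `articles`, the field names, and both helper functions are hypothetical. Nothing is sent over the network.

```python
import json


def build_index_request(index: str, doc_id: str, doc: dict):
    """Return (method, path, body) to index one JSON document.

    No mapping is declared up front; Elasticsearch can infer field types dynamically.
    """
    return "PUT", f"/{index}/_doc/{doc_id}", json.dumps(doc)


def build_match_search(index: str, field: str, text: str, size: int = 10):
    """Return (method, path, body) for a full-text `match` query on one field."""
    body = {"size": size, "query": {"match": {field: text}}}
    return "POST", f"/{index}/_search", json.dumps(body)


index_req = build_index_request(
    "articles", "1", {"title": "Distributed search with Lucene", "year": 2010}
)
search_req = build_match_search("articles", "title", "lucene search", size=3)
```

Sending each tuple to a local node (for example `http://localhost:9200`) with the header `Content-Type: application/json`, through any HTTP client or one of the official clients, would index the document and then run the search.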

-

The weekend started, and I poured myself a glass of Long Meadow Ranch Anderson Valley Pinot Noir. It smelled like cherry cola, cinnamon, and a forest in autumn. Probably not the right time to think, or even blog, about OLAP. – Kinshuk Dutta

Online analytical processing, or OLAP, is an approach to answering multi-dimensional analytical (MDA) queries swiftly. OLAP is part of the broader category of business intelligence, which also encompasses relational databases, report writing, and data mining. Typical applications of OLAP include business reporting for sales, marketing, management reporting, business process management (BPM), budgeting and forecasting, financial reporting and similar…
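One classic way OLAP systems answer multi-dimensional queries quickly is to pre-aggregate a measure over every combination of dimensions (a "cube"), so a query becomes a lookup instead of a scan. The sketch below does this in plain Python over a small, made-up sales fact table. The data, the dimension names, and `build_cube` are illustrative only and not taken from any OLAP product.

```python
from collections import defaultdict
from itertools import combinations

# Hypothetical fact rows: (region, quarter, product, sales)
FACTS = [
    ("East", "Q1", "Laptop", 100),
    ("East", "Q2", "Laptop", 150),
    ("West", "Q1", "Phone", 80),
    ("West", "Q1", "Laptop", 120),
    ("West", "Q2", "Phone", 90),
]
DIMENSIONS = ("region", "quarter", "product")


def build_cube(facts):
    """Sum sales for every subset of DIMENSIONS.

    Keys are tuples of (dimension, value) pairs in DIMENSIONS order;
    the empty tuple holds the grand total.
    """
    cube = defaultdict(int)
    for *dim_values, sales in facts:
        row = dict(zip(DIMENSIONS, dim_values))
        for r in range(len(DIMENSIONS) + 1):
            for dims in combinations(DIMENSIONS, r):
                key = tuple((d, row[d]) for d in dims)
                cube[key] += sales
    return dict(cube)


cube = build_cube(FACTS)
west_total = cube[(("region", "West"),)]                         # roll-up by region
q1_laptops = cube[(("quarter", "Q1"), ("product", "Laptop"))]    # slice by two dimensions
```

The trade-off is storage: k dimensions produce 2^k groupings, which is why real engines usually pre-compute only selected aggregates.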

-

In my recent post, I explained the different data collection mechanisms available and how modern-day requirements led to the formation of modern data lakes. Apache Iceberg is one such solution that has emerged as particularly strong.

What is Apache Iceberg?

Apache Iceberg is an open table format for huge analytic datasets. Iceberg adds tables to Trino and Spark that use a high-performance format that works just like a SQL table, with special emphasis on user experience, reliability and performance, and open standards. What makes it special is its unique table design for big data. This is explained brilliantly and covered well…
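Part of what makes Iceberg's table design distinctive is that table state is tracked as a series of immutable snapshots: a commit produces a new snapshot and atomically swaps the pointer to the current table metadata, and older snapshots stay readable for time travel. The toy Python model below illustrates only that idea. It is not Iceberg's implementation or API (real Iceberg tracks data files through metadata files, manifest lists, and manifests), and the class and file names are hypothetical.

```python
from dataclasses import dataclass, field
from typing import FrozenSet, Iterable, List


@dataclass(frozen=True)
class Snapshot:
    snapshot_id: int
    data_files: FrozenSet[str]  # hypothetical data file paths visible in this snapshot


@dataclass
class ToyTable:
    """Toy model: a table is a history of immutable snapshots; the last one is current."""
    snapshots: List[Snapshot] = field(default_factory=lambda: [Snapshot(0, frozenset())])

    @property
    def current(self) -> Snapshot:
        return self.snapshots[-1]

    def append(self, new_files: Iterable[str]) -> int:
        # Existing snapshots are never modified: build a new one from the current
        # file set, then "commit" by making it current (a stand-in for Iceberg's
        # atomic swap of the table-metadata pointer).
        snap = Snapshot(
            snapshot_id=self.current.snapshot_id + 1,
            data_files=self.current.data_files | frozenset(new_files),
        )
        self.snapshots.append(snap)
        return snap.snapshot_id

    def files_as_of(self, snapshot_id: int) -> FrozenSet[str]:
        """Time travel: return the files visible in an earlier snapshot."""
        for snap in self.snapshots:
            if snap.snapshot_id == snapshot_id:
                return snap.data_files
        raise KeyError(f"unknown snapshot {snapshot_id}")


table = ToyTable()
first = table.append(["data/00001.parquet"])
second = table.append(["data/00002.parquet"])
```

Because readers always resolve a specific snapshot, a query that started before `second` was committed keeps seeing a consistent file set.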

-

Introduction: My Journey into Presto

My interest in Presto was sparked in early 2021 after an enriching conversation with Brian Luisi, PreSales Manager at Starburst. His insights into distributed SQL query engines opened my eyes to the unique capabilities and performance advantages of Presto. Eager to dive deeper, I joined the Presto community on Slack to keep up with developments and collaborate with like-minded professionals. This blog series is an extension of that journey, aiming to demystify Presto and share my learnings with others curious about distributed analytics solutions.

What is Presto?

Presto is a high-performance, distributed SQL query…
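Distributed SQL engines such as Presto split a table scan across workers and typically run aggregations in stages: each worker computes a partial aggregate over its own splits, and a later stage merges those partials. The Python sketch below mimics that shape for `SELECT customer, SUM(amount) ... GROUP BY customer`, with a thread pool standing in for worker nodes. The data and function names are hypothetical, and this is not Presto's code.

```python
from collections import Counter
from concurrent.futures import ThreadPoolExecutor

# Hypothetical splits of an orders table: each holds (customer, amount) rows.
SPLITS = [
    [("alice", 10), ("bob", 5)],
    [("alice", 7), ("carol", 3)],
    [("bob", 1)],
]


def partial_sum(split):
    """Partial aggregation for one split: SUM(amount) GROUP BY customer."""
    acc = Counter()
    for customer, amount in split:
        acc[customer] += amount
    return acc


def distributed_sum(splits):
    # Stage 1: partial aggregation runs in parallel; threads stand in for workers.
    with ThreadPoolExecutor() as pool:
        partials = list(pool.map(partial_sum, splits))
    # Stage 2: final aggregation merges the partial results.
    final = Counter()
    for partial in partials:
        final.update(partial)  # Counter.update adds counts rather than replacing them
    return dict(final)


totals = distributed_sum(SPLITS)
```

Partial aggregation shrinks what has to move between stages: each worker ships one row per group instead of every input row.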

-

Data Lake

The modern enterprise runs on data. However, storing that data has always been challenging and expensive, and it often results in data silos. A data lake consists of a cost-effective, scalable storage system along with one or more compute engines. Data lakes are consolidated, centralized storage areas for raw, unstructured, semi-structured, and structured data, taken from multiple sources and lacking a predefined schema. Data lakes have been created to save data that “may have value.” They support a broad range of essential functions, from traditional decision support to business analytics to data science. The value of data and the insights…
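Storing data "lacking a predefined schema" is often described as schema-on-read: raw files are kept exactly as ingested, and a schema is applied only when the data is read. The Python sketch below models a tiny lake as an in-memory mapping from object paths to raw bytes. The paths, records, schema, and `read_with_schema` helper are hypothetical; a real lake would sit on a distributed file system or object store.

```python
import csv
import io
import json

# Hypothetical raw "lake": object paths mapped to raw bytes, stored as ingested.
LAKE = {
    "raw/events/2021-01-01.jsonl": b'{"user": "u1", "amount": "12.5"}\n{"user": "u2", "amount": "3"}\n',
    "raw/events/2021-01-02.csv": b"user,amount\nu3,7.25\n",
}

# The schema lives with the reader, not the storage layer (schema-on-read).
SCHEMA = {"user": str, "amount": float}


def read_with_schema(lake, schema):
    """Parse each raw object by format, then cast the requested columns."""
    for path, raw in lake.items():
        text = raw.decode("utf-8")
        if path.endswith(".jsonl"):
            records = (json.loads(line) for line in text.splitlines() if line.strip())
        elif path.endswith(".csv"):
            records = csv.DictReader(io.StringIO(text))
        else:
            continue  # formats this reader does not understand stay untouched in the lake
        for rec in records:
            yield {col: cast(rec[col]) for col, cast in schema.items()}


rows = list(read_with_schema(LAKE, SCHEMA))
```

A different consumer could read the same raw bytes with a different schema, which is the flexibility (and the data-quality risk) that comes with deferring the schema.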

-

To understand the criticality of Big Data search, we first need to understand the enormity of the data. A terabyte is just over 1,000 gigabytes (1,024 in binary units) and is a label most of us are familiar with from our home computers. Scaling up from there, a petabyte is just over 1,000 terabytes. That may be far beyond the kind of data storage the average person needs, but the industry has been dealing with data in these quantities for quite some time. In fact, as far back as 2008, Google was said to process around 20 petabytes of data a day (Google doesn’t release information on how much data it processes today). To put…
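A quick back-of-the-envelope calculation puts the 2008 figure in perspective. The rate conversion below assumes decimal units (1 PB = 10^15 bytes, 1 GB = 10^9 bytes); with binary units each step up is a factor of 1,024, which is where "just over 1,000" comes from. The constant and function names are illustrative.

```python
SECONDS_PER_DAY = 24 * 60 * 60  # 86,400

# "Just over 1,000": with binary (IEC) prefixes each step up is a factor of 1,024.
GIB_PER_TIB = 1024
TIB_PER_PIB = 1024


def daily_volume_to_rate(petabytes_per_day: float) -> float:
    """Convert a daily volume in decimal petabytes to decimal gigabytes per second."""
    bytes_per_day = petabytes_per_day * 10**15
    return bytes_per_day / SECONDS_PER_DAY / 10**9


rate = daily_volume_to_rate(20)  # the reported 2008 figure for Google
print(f"{rate:.1f} GB/s")
```

In other words, 20 petabytes a day works out to roughly 231 gigabytes every second, sustained around the clock.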