When we talk about Human-Centered AI, we're not just throwing around another buzzword. We're talking about a fundamental shift in how we build intelligent systems. It’s an approach that places human needs, values, and well-being at the absolute core of the entire development process—from the first sketch on a whiteboard to the final product in a user's hands.

This philosophy ensures that technology is built to augment and empower people, not just replace them.

Shifting From Automation to Collaboration

For a long time, the conversation around AI was dominated by automation—how can we get a machine to do a human's job faster and cheaper? While efficiency is obviously important, that’s a limited view. A more thoughtful, and frankly more powerful, approach is now taking center stage.

Human-Centered AI completely reframes the goal. It sees AI not as a replacement for human intellect but as a collaborative partner.

Think of it like the difference between a fully autonomous autopilot and an expert co-pilot. The autopilot is designed to take over completely. But a co-pilot works alongside the human pilot, feeding them crucial data, managing complex systems, and augmenting their skills to achieve a safer, better outcome. That collaborative spirit is the essence of building AI that genuinely serves our goals.

Why This Shift Matters Now

This pivot to a people-first AI philosophy isn’t just an academic idea; it’s a market-driven necessity. The demand for transparent, accountable, and fair AI systems is exploding. The global human-centered AI market is on a steep upward curve, projected to climb from roughly $9.5 billion in 2023 to $13.96 billion in 2025. You can dig deeper into the numbers in this detailed market overview.

This really is the natural next step for data-driven decision-making. We've moved beyond just hoarding data to making sure the intelligent systems we build from it are trustworthy and make sense. If you’re curious about the mechanics of building that trust, our earlier posts on understanding Explainable AI (XAI) offer a great starting point.

To better illustrate the difference in these two development mindsets, let's break it down.

Traditional AI vs Human-Centered AI

This table provides a clear, at-a-glance comparison highlighting the fundamental differences in goals, processes, and outcomes between traditional AI development and a human-centered approach.

| Aspect | Traditional AI Approach | Human-Centered AI Approach |
|---|---|---|
| Primary Goal | Automate tasks, maximize efficiency, and reduce human involvement. | Empower users, augment human capabilities, and solve real-world problems. |
| Design Focus | Algorithm performance, accuracy, and speed. | User experience, trust, interpretability, and ethical considerations. |
| Development Process | Data-first. The process is driven by available data and technical feasibility. | Human-first. The process starts with user research, empathy, and problem definition. |
| User Role | Often seen as a passive recipient or simply a source of data. | An active collaborator and co-creator throughout the design lifecycle. |
| Success Metrics | Accuracy, precision, recall, F1-score, and computational efficiency. | User adoption, satisfaction, task success rate, trust, and overall well-being. |
| Outcome | A system that performs a task. | A collaborative tool that people want to use and that enhances their work. |

As you can see, the human-centered path leads to profoundly different—and often more successful—outcomes because it's built on a foundation of collaboration from day one.

The Measurable Impact of a Human-First Approach

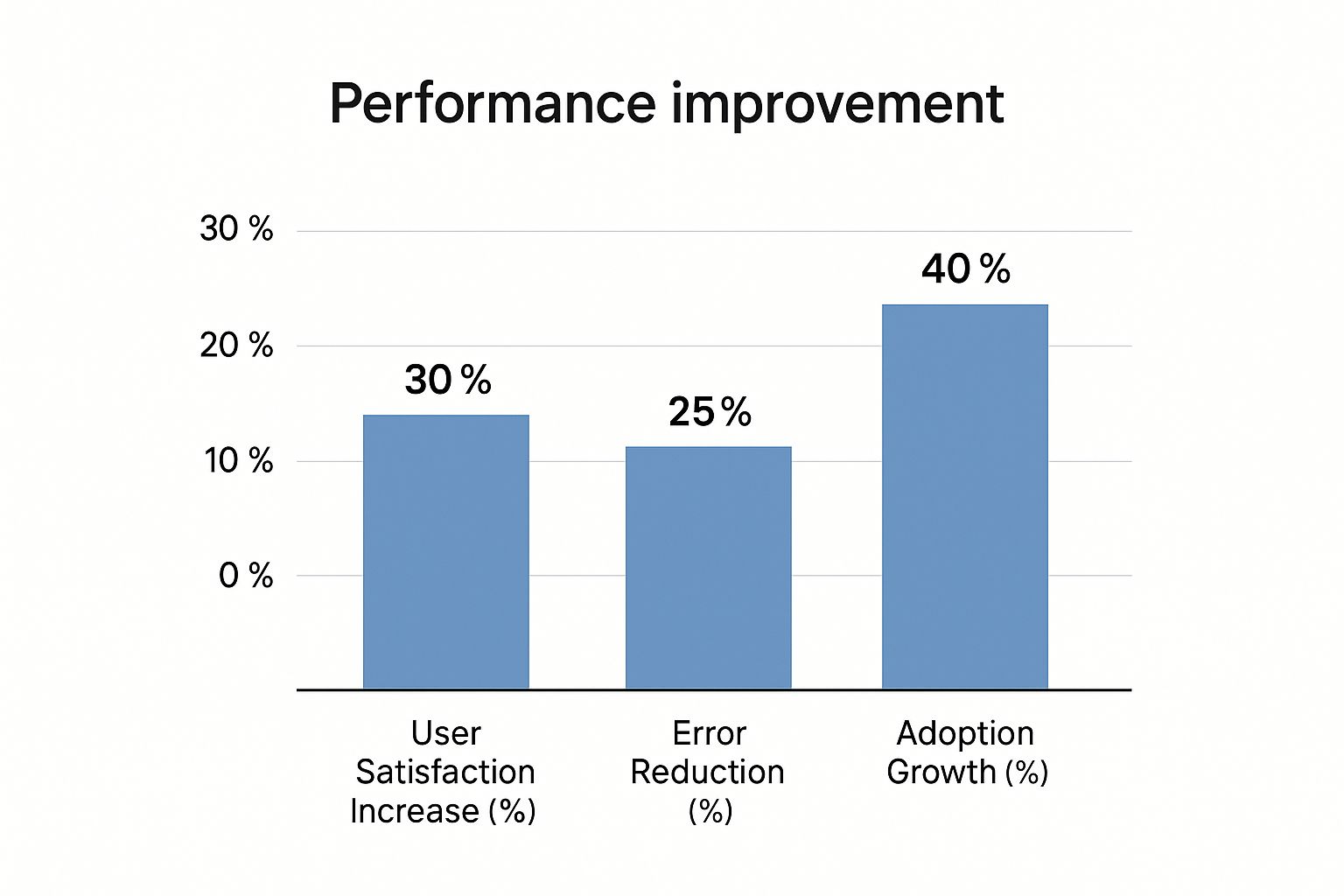

Putting people first isn't just about ethics; it delivers tangible business results. It directly boosts user satisfaction, cuts down on operational errors, and makes it much easier for teams to adopt new technologies.

The chart above visualizes the concrete benefits organizations often see when they make human-centric design a priority in their AI projects.

The chart attributes a 40% increase in adoption growth to a human-centered focus. The logic is simple: when people trust and understand a system, they're far more likely to embrace it and weave it into their daily workflows. This is the key to creating technology that doesn't just work, but actually thrives.

The Core Principles of Human-Centered AI

To build AI that actually works for people, good intentions aren't enough. You need a solid set of guiding principles to act as a blueprint. Think of these principles as the foundational pillars of human-centered AI—they turn a lofty philosophy into a practical roadmap for building technology we can trust.

At its core, this approach is all about creating a symbiotic relationship. AI should enhance what we're good at, and our insights should guide the AI's development. This is how we ensure technology remains a tool for empowerment, not just a replacement for people.

Making AI Decisions Understandable

One of the most critical principles here is explainability. If an AI makes a decision that affects someone's life, they deserve to know why. This means getting away from so-called "black box" models, where the internal logic is a mystery even to the developers. Instead, we need systems that can clearly articulate their reasoning.

Practical Example: Imagine a credit scoring AI denies a loan application. A traditional, "black box" model would simply output "Loan Denied," leaving the applicant frustrated and confused. A human-centered system, built for explainability, would provide actionable reasons: "Loan denied because the debt-to-income ratio of 48% exceeds the 43% threshold, and recent credit history shows two late payments." This transparency empowers the applicant with a clear path to improve their financial standing.
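A minimal sketch of how such reason codes might be generated alongside the decision, assuming a simple rule-based check. The 43% debt-to-income threshold comes from the example above; the late-payment limit, the `Applicant` fields, and the function name `explain_loan_decision` are hypothetical and not taken from any real credit-scoring system.

```python
from dataclasses import dataclass

# 43% DTI threshold is from the example; the late-payment limit is a hypothetical policy value.
DTI_THRESHOLD = 0.43
MAX_RECENT_LATE_PAYMENTS = 1


@dataclass
class Applicant:
    monthly_debt: float          # total monthly debt payments
    gross_monthly_income: float  # income before taxes
    late_payments_12m: int       # late payments in the last 12 months


def explain_loan_decision(a: Applicant) -> tuple[bool, list[str]]:
    """Return (approved, reasons) so a denial always comes with actionable reasons."""
    reasons = []
    dti = a.monthly_debt / a.gross_monthly_income
    if dti > DTI_THRESHOLD:
        reasons.append(
            f"debt-to-income ratio of {dti:.0%} exceeds the {DTI_THRESHOLD:.0%} threshold"
        )
    if a.late_payments_12m > MAX_RECENT_LATE_PAYMENTS:
        reasons.append(f"recent credit history shows {a.late_payments_12m} late payments")
    # Approved only when no rule produced a reason.
    return (not reasons, reasons)


approved, reasons = explain_loan_decision(Applicant(2400, 5000, 2))
print("Loan approved" if approved else "Loan denied because " + ", and ".join(reasons))
```

Because every rule that fires appends a plain-language reason, the explanation cannot drift out of sync with the decision logic, which is much harder to guarantee when explanations are bolted onto an opaque model afterward.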

Ensuring Fairness by Actively Mitigating Bias

Another core tenet is fairness. AI models learn from the data we give them, and if that data reflects historical biases in society, the AI will learn and amplify those same biases. A human-centered approach demands that we actively hunt for, measure, and root out this bias to ensure fair outcomes for everyone.

Practical Example: A company uses an AI to screen résumés. The model was trained on a decade's worth of historical hiring data, which overwhelmingly favored male candidates for technical roles. As a result, the AI learns to penalize résumés that include words like "women's chess club" or mention attendance at a women's college. A human-centered approach would involve auditing the training data for gender bias and implementing fairness constraints on the algorithm to ensure it evaluates candidates based on skills and experience, not proxies for gender. As we covered in our analysis of the dangers of biased data, this proactive mitigation is crucial for building equitable systems.

Actionable Insight: To mitigate bias, form a diverse data review team to audit your training datasets for historical skews. Use fairness toolkits like IBM's AI Fairness 360 or Google's What-If Tool to test models for disparate impact across different demographic groups before deployment.
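To make "test for disparate impact" concrete, here is a dependency-free sketch of the disparate impact ratio, the same quantity that toolkits like AI Fairness 360 expose as a metric: the selection rate of the unprivileged group divided by that of the privileged group. The 0.8 cutoff is the commonly cited "four-fifths rule" screening heuristic; the decisions and group labels below are made up for illustration.

```python
from collections import defaultdict


def selection_rates(decisions, groups):
    """Fraction of positive decisions (1 = selected) for each group."""
    selected = defaultdict(int)
    total = defaultdict(int)
    for d, g in zip(decisions, groups):
        total[g] += 1
        selected[g] += d
    return {g: selected[g] / total[g] for g in total}


def disparate_impact(decisions, groups, unprivileged, privileged):
    """Ratio of the unprivileged group's selection rate to the privileged group's."""
    rates = selection_rates(decisions, groups)
    return rates[unprivileged] / rates[privileged]


# Illustrative screening outcomes (1 = advanced to interview).
decisions = [1, 1, 1, 0, 1, 1, 0, 0, 0, 1]
groups = ["m", "m", "m", "m", "m", "f", "f", "f", "f", "f"]

di = disparate_impact(decisions, groups, unprivileged="f", privileged="m")
print(f"disparate impact = {di:.2f}")
if di < 0.8:  # four-fifths rule of thumb
    print("Potential adverse impact: review features and training data before deployment.")
```

A ratio below 0.8 is a signal to investigate, not proof of discrimination; the review team still has to trace which features (such as proxies for gender) drive the gap.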

Augmenting Human Skills, Not Replacing Them

Finally, the principle of augmentation is about designing AI to be a collaborative partner that makes people better at their jobs. The goal isn't to build a system that can do a person's job, but one that helps them do it better. This creates a synergy where the human and the machine together can achieve things neither could on their own.

Practical Example: Consider a radiologist analyzing medical scans for signs of cancer. A traditional AI might try to automate the diagnosis entirely, risking errors and removing expert judgment. A human-centered, augmentative AI acts as a diagnostic assistant. It scans thousands of images to highlight subtle anomalies that a human eye might miss, presenting them to the radiologist with confidence scores and references to similar cases. The AI doesn't make the final call; it empowers the doctor with enhanced data, leading to faster, more accurate diagnoses. The human remains in control, amplified by a powerful tool.

Actionable Frameworks for Building People-First AI

Knowing the principles of human-centered AI is a great start, but principles alone don't build great products. You need a structured game plan. Just wanting to create fair and transparent AI isn't enough; you need practical frameworks to guide you from the first sketch on a whiteboard to the final deployed system. These methods give you the scaffolding to make sure human values are baked in from the beginning, not just sprinkled on at the end.

Two of the most powerful frameworks out there are Participatory Design and Value Sensitive Design. They flip the script on the old top-down, "we-know-best" development model. Instead, they push for a collaborative and value-focused approach that ensures the final product actually works for the people it's meant to serve.

Incorporating End-Users with Participatory Design

Think of Participatory Design as bringing your end-users onto the development team. Instead of treating them like subjects in a lab, you treat them as co-creators. You bring them into the room—literally or virtually—to share their unique insights and lived experiences. This simple shift ensures the technology is built with them, not just for them.

Actionable Insight: Start your next AI project with a co-design workshop. Invite a small group of target users and give them tools like sticky notes and whiteboards. Ask them to map out their ideal workflow or solution, rather than just reacting to your ideas. This generates user-centric features you might never have considered. For instance, involving customers directly in designing an AI recommendation engine can prevent it from feeling intrusive, a key takeaway from our guide on machine learning in marketing.

Embedding Ethics with Value Sensitive Design

While Participatory Design is all about the who, Value Sensitive Design (VSD) is about the what—specifically, which human values the system needs to champion. VSD is a methodology for weaving ethical considerations like privacy, fairness, and trust directly into the technology's DNA, right from the start.

It’s a proactive approach to ethics, making sure that core values are identified and prioritized before a single line of code is written. VSD forces teams to ask tough questions early: "Whose values are we embedding?" and "How might this technology be misused?"

The VSD framework is typically broken down into three key investigations: conceptual, empirical, and technical. First, teams identify all the stakeholders and the values at stake. Then, they do the research to see how those values actually play out in the users' world. Finally, they translate those real-world findings into concrete design features.

A Practical Walkthrough: An AI Learning Tool

To make this less abstract, let's walk through building an AI-powered educational tool for students with learning disabilities using these frameworks.

- Empathy and User Research (The Foundation): The project doesn't kick off with algorithms; it starts with people. The team sits down for in-depth interviews with students, teachers, and parents to really get a handle on their daily struggles, goals, and frustrations. Empathy maps are created to visualize what these users think, feel, say, and do.

- Collaborative Ideation (Participatory Design in Action): With that foundation, the team hosts workshops with these same stakeholders. But instead of showing off a polished concept, they use low-fidelity tools like paper sketches and simple wireframes. This invites everyone to co-design the tool's core features. A student might suggest a text-to-speech function with adjustable speeds, while a teacher might ask for a dashboard to track progress without compromising student privacy.

- Prioritizing Core Values (Value Sensitive Design in Action): Right in the middle of these creative sessions, the team works to identify the most important values. For this tool, autonomy, privacy, and encouragement bubble up to the top. These aren't just feel-good words; they become hard design requirements. The tool must empower students (autonomy), protect their data (privacy), and offer positive reinforcement (encouragement).

- Iterative Prototyping and Feedback: Armed with co-designed ideas and clear values, the team builds a minimum viable product (MVP). This early version gets put right back into the hands of the students and teachers for testing. Feedback is gathered, and the cycle begins again. Each loop refines the tool, making it a better fit for both user needs and the ethical principles the team committed to.

This human-centered process is designed to ensure that the final AI tool isn't just a cool piece of tech, but one that is genuinely helpful, respectful, and effective for the very people it was built to serve. It’s a clear roadmap for turning abstract principles into real-world impact.

Human-Centered AI in the Real World

Principles and frameworks are a great starting point, but the real power of human-centered AI comes to life when you see it in action. Moving past abstract ideas, this approach is already making a huge difference across industries by helping create tools that people actually want to use. Let’s look at a few practical examples of how this philosophy is solving real problems.

These examples show that when you put human needs first, the technology tends to become more effective, trustworthy, and valuable for everyone.

Augmenting Expertise in Healthcare Diagnostics

In the high-stakes world of medical imaging, there's no substitute for an expert's judgment. One of the most powerful applications of human-centered AI is in diagnostic tools designed to assist radiologists, not replace them.

Picture an AI system that has analyzed thousands of MRIs, X-rays, and CT scans. Instead of spitting out an automated diagnosis, it acts more like a highly skilled assistant. The system flags subtle patterns or anomalies a human eye might miss after a long day of reviewing scans, and it even assigns a confidence score to each of its findings.

This approach keeps the medical professional squarely in the driver's seat. The AI offers data-driven suggestions, but the radiologist—with their deep expertise and the patient's full medical history—makes the final call.

Actionable Insight: When designing an expert-assist AI, focus on visualizing uncertainty. Instead of just showing a result, use heatmaps to highlight areas of interest in an image and display confidence intervals. This gives the human expert the context they need to make a well-informed final decision.
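One way to attach a confidence interval to a finding is to report how often the model's past findings in the same confidence band were confirmed, with a Wilson score interval around that rate. This is a sketch under assumptions: the validation counts, the region label, and the helper `describe_finding` are hypothetical, and a real deployment would compute the counts from an audited validation set.

```python
import math


def wilson_interval(successes: int, n: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for a binomial proportion (z=1.96 gives roughly 95% coverage)."""
    if n == 0:
        raise ValueError("need at least one observation")
    p = successes / n
    denom = 1 + z**2 / n
    center = (p + z**2 / (2 * n)) / denom
    margin = z * math.sqrt(p * (1 - p) / n + z**2 / (4 * n**2)) / denom
    return center - margin, center + margin


def describe_finding(region: str, band_hits: int, band_total: int) -> str:
    """Phrase a flagged region as evidence for the radiologist to weigh, not a verdict."""
    lo, hi = wilson_interval(band_hits, band_total)
    return (
        f"{region}: flagged for review. In {band_total} past findings at this "
        f"confidence level, {band_hits} were confirmed (95% CI {lo:.0%} to {hi:.0%})."
    )


# Hypothetical validation counts for this model's confidence band.
print(describe_finding("Left upper lobe, 8 mm nodule", band_hits=87, band_total=100))
```

The Wilson interval is used here instead of the simpler normal approximation because it stays sensible when the confirmed rate is close to 0% or 100%, which is common for high-confidence findings.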

Boosting Satisfaction in Customer Service

We've all been there: trapped in a frustrating loop with a rigid chatbot. Human-centered design flips this experience on its head by building AI systems that are smart enough to know their own limits.

Think about a modern customer service AI. It can handle routine requests—like checking an order status or resetting a password—with incredible efficiency. This frees up human agents to tackle the more complex issues. But the moment a customer's query becomes emotionally charged or needs nuanced problem-solving, the AI does something brilliant: it seamlessly hands the conversation over to a person.

Crucially, it transfers the entire context of the interaction, including everything the customer has already tried. The agent can pick up right where the AI left off without forcing the user to repeat themselves. This creates a fluid, supportive experience that blends the speed of automation with the empathy of a human. Many of these systems rely on clean data flows, similar to the concepts in our guide on building automated data pipelines, to make sure that context is never lost.
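The escalation-with-context pattern can be sketched as below. The routine intents, the keyword-based frustration cues, and the handoff fields are all hypothetical stand-ins; a production system might use an intent classifier and a sentiment model instead of keyword matching.

```python
from dataclasses import dataclass, field

# Hypothetical escalation triggers.
ROUTINE_INTENTS = {"order_status", "password_reset"}
FRUSTRATION_CUES = ("ridiculous", "angry", "cancel", "speak to a human", "third time")


@dataclass
class Conversation:
    customer_id: str
    turns: list = field(default_factory=list)            # (speaker, text) pairs
    attempted_steps: list = field(default_factory=list)  # what the bot already tried


def should_escalate(intent: str, message: str) -> bool:
    """Escalate when the request is not routine or the customer sounds frustrated."""
    text = message.lower()
    return intent not in ROUTINE_INTENTS or any(cue in text for cue in FRUSTRATION_CUES)


def build_handoff(conv: Conversation) -> dict:
    """Package everything the human agent needs so the customer never repeats themselves."""
    return {
        "customer_id": conv.customer_id,
        "transcript": list(conv.turns),
        "already_tried": list(conv.attempted_steps),
        "last_message": conv.turns[-1][1] if conv.turns else "",
    }


conv = Conversation("C-1042")
conv.turns.append(("customer", "Where is my order?"))
conv.attempted_steps.append("order_status lookup: shows delivered")
conv.turns.append(("customer", "It says delivered but it's not here. This is the third time!"))

if should_escalate("missing_delivery", conv.turns[-1][1]):
    ticket = build_handoff(conv)
    print(f"Routing {ticket['customer_id']} to an agent with {len(ticket['transcript'])} turns of context")
```

The key design choice is that `already_tried` travels with the ticket, so the agent starts from what the bot has already ruled out rather than from a blank slate.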

Making E-Commerce Recommendations Genuinely Helpful

Personalized recommendations are everywhere in e-commerce, but they often walk a fine line between being helpful and just plain intrusive. A human-centered approach focuses on creating suggestions that feel like they’re coming from a trusted friend, not an aggressive salesperson.

Instead of just pushing products based on your browsing history, these smarter systems think about context. They might recommend an item that complements something you just bought, or suggest products that other people with similar tastes have genuinely loved. The key is transparency. The platform will often explain why it’s recommending an item (“Because you bought the [product name]”), which gives users a sense of control and understanding.
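A minimal sketch of "explain why" recommendations, using simple item co-occurrence counts as a swapped-in technique (not what any particular platform uses). The order history is toy data, and `recommend_with_reason` is a hypothetical helper; the point is that each suggestion carries the purchase that triggered it.

```python
from collections import Counter
from itertools import permutations

# Toy order history; each set is one basket (illustrative data only).
orders = [
    {"tent", "sleeping bag", "headlamp"},
    {"tent", "sleeping bag"},
    {"tent", "camp stove"},
    {"headlamp", "batteries"},
]

# Count how often each ordered pair of items appears in the same basket.
co_counts = Counter()
for basket in orders:
    for a, b in permutations(basket, 2):
        co_counts[(a, b)] += 1


def recommend_with_reason(just_bought: str, owned: set, k: int = 2):
    """Return up to k (item, reason) pairs, each tied to the purchase that triggered it."""
    candidates = [
        (b, n) for (a, b), n in co_counts.items()
        if a == just_bought and b not in owned
    ]
    # Most frequent co-purchases first; ties broken alphabetically for stable output.
    candidates.sort(key=lambda pair: (-pair[1], pair[0]))
    return [
        (item, f"Because you bought the {just_bought} (bought together in {n} past orders)")
        for item, n in candidates[:k]
    ]


for item, reason in recommend_with_reason("tent", owned={"tent"}):
    print(f"{item}: {reason}")
```

Excluding items the customer already owns is a small but deliberate choice: recommending what someone just bought is one of the quickest ways to make suggestions feel mechanical.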

This simple shift builds trust and encourages people to actually engage with the recommendations, leading to a much better shopping experience and, ultimately, more sales.

To better illustrate these points, the table below summarizes the tangible benefits we see when a human-centered approach is applied in different industries.

Human-Centered AI Application Benefits

It covers the three examples above, plus a fraud-detection support tool from finance.

| Industry | Application Example | Key Benefit for Humans | Business Outcome |
|---|---|---|---|
| Healthcare | AI-Assisted Medical Imaging | Faster, more accurate diagnoses reduce radiologist fatigue and improve patient care. | Increased diagnostic accuracy and improved hospital efficiency. |
| Customer Service | Smart Chatbot Escalation | Reduced frustration by connecting users to human agents for complex issues. | Higher customer satisfaction scores (CSAT) and lower agent burnout. |
| E-Commerce | Transparent Recommendation Engine | Users feel understood and in control, leading to a more enjoyable shopping journey. | Increased customer loyalty and higher average order value (AOV). |
| Finance | Fraud Detection Support Tool | Empowers analysts to make confident decisions by flagging suspicious activity with clear context. | Reduced false positives and stronger security for customer accounts. |

As you can see, the outcomes benefit both the user and the business, creating a win-win scenario.

The Common Thread Connecting These Examples

In each of these scenarios, the AI’s primary job is to act as a decision-support tool. Human-centered AI changes the goal of development from simply automating tasks to augmenting human capabilities, with a strong focus on fairness, security, and transparency. This philosophy is driving incredible applications in fields where AI can truly enhance human performance. For anyone curious about the market trends, you can learn more about these human-centered AI trends and their growing impact.

These real-world examples point to a simple truth: building AI with a deep understanding of human needs doesn't just lead to more ethical technology; it leads to better technology, period.

Overcoming Common Implementation Challenges

Embracing a human-centered AI philosophy is a worthy goal, but the road from principle to practice is almost always a bumpy one. Shifting an entire organization’s mindset and technical workflows isn't as simple as flipping a switch. What many teams discover is that the biggest hurdles aren’t really about the code; they're about culture, communication, and complexity.

To pull it off, you need an honest look at the common roadblocks and a clear strategy to get around them. It’s a real commitment that tests an organization’s agility and its dedication to actually putting people first.

From Abstract Ideas to Concrete Code

One of the first places teams get stuck is trying to translate abstract values like “fairness” or “transparency” into tangible software requirements. Everyone on a development team can agree that an AI model should be fair. But what does that actually mean in terms of data processing, algorithmic logic, and output validation?

Practical Example: Defining "fairness" for a hiring algorithm is complex. Does it mean ensuring equal outcomes (e.g., hiring the same percentage of candidates from different demographic groups)? Or does it mean ensuring an unbiased process, even if outcomes differ due to applicant pool variations? These definitions can be contradictory.
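The conflict is easy to see with a toy dataset. In this sketch, "equal outcomes" is measured as demographic parity (equal selection rates) and "unbiased process" is approximated by equal opportunity (equal true-positive rates among qualified applicants); mapping the article's two phrasings onto these standard metrics is our assumption, and the data is invented.

```python
def rate(values):
    """Mean of a list of 0/1 values (0.0 for an empty list)."""
    return sum(values) / len(values) if values else 0.0


def fairness_report(y_true, y_pred, groups):
    """Compare two common fairness definitions for each group."""
    report = {}
    for g in sorted(set(groups)):
        idx = [i for i, x in enumerate(groups) if x == g]
        preds = [y_pred[i] for i in idx]
        preds_on_qualified = [y_pred[i] for i in idx if y_true[i] == 1]
        report[g] = {
            "selection_rate": rate(preds),                   # demographic parity compares this
            "true_positive_rate": rate(preds_on_qualified),  # equal opportunity compares this
        }
    return report


# Group A has 6 of 10 qualified applicants, group B has 3 of 10.
groups = ["A"] * 10 + ["B"] * 10
y_true = [1] * 6 + [0] * 4 + [1] * 3 + [0] * 7
y_pred = list(y_true)  # a model that selects exactly the qualified applicants

for g, m in fairness_report(y_true, y_pred, groups).items():
    print(g, f"selection={m['selection_rate']:.0%}", f"TPR={m['true_positive_rate']:.0%}")
```

Here the model treats every qualified applicant identically (equal opportunity holds), yet selection rates are 60% versus 30%, so demographic parity fails. Closing that gap would require rejecting qualified applicants in one group or selecting unqualified ones in the other, which is exactly why the team has to choose a definition explicitly.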

Actionable Insight: Don't leave these definitions to chance. Before development, create a "Values & Metrics" document. For each ethical principle (like fairness), define it specifically for your project and assign a quantifiable metric. For example: "Fairness in our hiring tool will be measured by ensuring the difference in pass rates between any two demographic groups is less than 5 percentage points." This turns an abstract idea into a testable requirement.
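Once the metric is written down, it can be enforced like any other requirement. A minimal sketch, assuming pass rates per group have already been computed from a validation run; the constant and function names are hypothetical, and the 5-percentage-point limit mirrors the example requirement above.

```python
from itertools import combinations

# From the hypothetical "Values & Metrics" document: maximum allowed pass-rate gap.
MAX_PASS_RATE_GAP = 0.05  # 5 percentage points


def pass_rate_gaps(pass_rates: dict) -> dict:
    """Absolute pass-rate difference for every pair of demographic groups."""
    return {
        (a, b): abs(pass_rates[a] - pass_rates[b])
        for a, b in combinations(sorted(pass_rates), 2)
    }


def check_fairness_requirement(pass_rates: dict) -> list:
    """Return the group pairs that violate the requirement (empty list means it passes)."""
    return [pair for pair, gap in pass_rate_gaps(pass_rates).items() if gap >= MAX_PASS_RATE_GAP]


# Illustrative pass rates from a validation run.
violations = check_fairness_requirement({"group_a": 0.42, "group_b": 0.40, "group_c": 0.33})
print("PASS" if not violations else f"FAIL: {violations}")
```

Running a check like this in the same CI pipeline as accuracy tests means a model that regresses on fairness fails the build, just as one that regresses on accuracy would.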

Managing the Necessary Cultural Shift

Let's be clear: a truly human-centered AI approach demands a deep cultural change. It means breaking down the traditional silos between departments. Suddenly, data scientists have to work side-by-side with UX designers, sociologists, ethicists, and legal experts. This kind of cross-functional model is essential for a holistic perspective, but it can easily clash with rigid, established workflows.

Another common point of friction is trying to weave deep user feedback into fast-paced agile development cycles. Teams accustomed to focusing purely on technical metrics may struggle to prioritize qualitative user insights, often dismissing them as too subjective or difficult to quantify. To get past this, leadership has to champion the value of human-centric input right from the start.

Here are a few actionable strategies to help manage the transition:

- Build Cross-Functional Teams: Intentionally create "AI pods" that include an ethicist, a UX designer, and a domain expert alongside engineers from day one. This makes human-centric thinking a shared responsibility, not an external critique.

- Start with Low-Risk Projects: Kick off with an internal tool, like an AI to help the sales team prioritize leads. Demonstrating a clear win—like a 15% increase in lead conversion—builds the internal trust and momentum needed to tackle more ambitious, customer-facing projects.

- Implement "Human-in-the-Loop" Validation: Design systems where human experts can review and override AI decisions, especially in critical applications. This not only improves safety and accuracy but also builds trust in the technology across the organization.
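A human-in-the-loop gate can be as simple as a routing rule plus an override record. This sketch assumes a hypothetical 0.90 confidence threshold and a `high_stakes` flag supplied by the caller; neither value comes from a real product, and the transaction IDs are invented.

```python
from dataclasses import dataclass
from typing import Optional

REVIEW_THRESHOLD = 0.90  # hypothetical: below this confidence, a human must decide


@dataclass
class Decision:
    case_id: str
    model_label: str
    confidence: float
    final_label: Optional[str] = None
    decided_by: str = "model"


def route(decision: Decision, review_queue: list, high_stakes: bool) -> Decision:
    """Auto-apply only confident, low-stakes predictions; send everything else to a person."""
    if high_stakes or decision.confidence < REVIEW_THRESHOLD:
        review_queue.append(decision)
    else:
        decision.final_label = decision.model_label
    return decision


def human_review(decision: Decision, reviewer: str, label: str) -> Decision:
    """Record the reviewer's call; it replaces the model's label whenever they disagree."""
    decision.final_label = label
    decision.decided_by = reviewer
    return decision


queue = []
d1 = route(Decision("tx-1", "legitimate", 0.97), queue, high_stakes=False)
d2 = route(Decision("tx-2", "fraud", 0.72), queue, high_stakes=False)
human_review(d2, reviewer="analyst_7", label="legitimate")
print(d1)
print(d2)
```

Keeping `model_label` alongside `final_label` preserves a record of every override, which is useful later for auditing where the model and the experts disagree.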

Navigating the Technical Trade-Offs

Finally, the technical hurdles themselves can be significant. One of the classic trade-offs in machine learning is the tension between a model’s performance and its explainability. Often, the most accurate models—like complex deep learning networks—are also the most opaque “black boxes,” making their decisions incredibly difficult to interpret.

Choosing a simpler, more transparent model might make it easier to explain its reasoning, but it could come at the cost of a few percentage points in predictive accuracy. This balancing act is at the heart of effective human-centered AI and requires a smart strategy.
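One way to make this balancing act explicit is a written decision rule: pick the most interpretable model whose accuracy is within an agreed tolerance of the best candidate. The rule, the interpretability ranks, and the accuracy figures below are all hypothetical; the tolerance itself is a business and ethics decision, not a technical constant.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    name: str
    accuracy: float        # held-out accuracy
    interpretability: int  # team-assigned rank: higher means easier to explain


def choose_model(candidates, max_accuracy_drop: float = 0.03) -> Candidate:
    """Pick the most interpretable model within the accuracy tolerance of the best one."""
    best = max(c.accuracy for c in candidates)
    eligible = [c for c in candidates if best - c.accuracy <= max_accuracy_drop]
    # Prefer interpretability; break ties by accuracy.
    return max(eligible, key=lambda c: (c.interpretability, c.accuracy))


candidates = [
    Candidate("deep neural network", 0.91, interpretability=1),
    Candidate("gradient-boosted trees", 0.90, interpretability=2),
    Candidate("logistic regression", 0.89, interpretability=3),
]
print(choose_model(candidates).name)
print(choose_model(candidates, max_accuracy_drop=0.015).name)
```

Recording the chosen tolerance next to the selected model makes the trade-off reviewable later, rather than leaving it buried in one engineer's judgment call.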

Proper AI model management is crucial for tracking these trade-offs and ensuring that the chosen model aligns with both business goals and ethical principles. For a deeper dive, our guide on effective AI model management offers practical frameworks for making these critical decisions.

The Future of AI Governance and Regulation

As human-centered AI shifts from a theoretical concept to everyday practice, a new landscape of governance and regulation is quickly taking shape to steer its development. Think of these emerging frameworks less as restrictive hurdles and more as essential guardrails. Their job is to push organizations to build safer, more trustworthy systems that actually earn public confidence.

This proactive approach makes sure that principles like fairness and transparency are hard-coded requirements, not just optional add-ons. By setting clear rules for accountability, regulators are fostering an environment where ethical innovation can truly flourish.

Catalysts for Trust and Innovation

It turns out that strong governance is a powerful catalyst for building better AI. Frameworks like the NIST AI Risk Management Framework provide clear, actionable guidelines that help organizations manage the potential downsides of AI while getting the most out of its benefits. This kind of structure encourages companies to think critically about their models right from the start.

In 2024, government and regulatory bodies took a much more active role in this movement, allocating serious funding and creating new governance structures. The U.S. federal government, for instance, set aside over $1.9 billion for AI research focused specifically on responsible applications. At the same time, more than 59 new AI-related regulations were introduced to reinforce these ethical standards. You can read the full research on human-centered AI market trends to see how these policies are shaping the industry.

Actionable Insight: Don't wait for regulation to force your hand. Proactively adopt a framework like the NIST AI Risk Management Framework to conduct an "AI Impact Assessment" before launching a new system. This process helps you identify potential harms (like bias or privacy risks) and build mitigation strategies from the start, turning compliance into a competitive advantage.
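The NIST AI RMF organizes risk work into four core functions: Govern, Map, Measure, and Manage. The sketch below is a lightweight risk register tagged with those functions; the likelihood-times-impact scoring, the 1 to 5 scales, the blocker threshold, and the example risks are our own hypothetical choices, not something the framework prescribes.

```python
from dataclasses import dataclass

# The four core functions named in NIST AI RMF 1.0.
RMF_FUNCTIONS = ("GOVERN", "MAP", "MEASURE", "MANAGE")


@dataclass
class Risk:
    description: str
    function: str    # which RMF function the follow-up work falls under
    likelihood: int  # 1 (rare) to 5 (almost certain), team judgment
    impact: int      # 1 (minor) to 5 (severe), team judgment
    mitigation: str = ""

    def __post_init__(self):
        if self.function not in RMF_FUNCTIONS:
            raise ValueError(f"unknown RMF function: {self.function}")

    @property
    def score(self) -> int:
        return self.likelihood * self.impact


def launch_blockers(register, threshold: int = 12):
    """Risks scoring at or above the threshold that still lack a mitigation plan."""
    return [r for r in register if r.score >= threshold and not r.mitigation]


register = [
    Risk("Screening model under-selects applicants from one group", "MEASURE", 4, 4),
    Risk("Applicant data retained longer than policy allows", "MANAGE", 2, 4,
         mitigation="Automated 90-day deletion job"),
    Risk("No named owner for model incidents", "GOVERN", 3, 3),
]

for risk in sorted(register, key=lambda r: r.score, reverse=True):
    print(f"[{risk.function}] score={risk.score:>2} {risk.description}")
print("Blockers:", [r.description for r in launch_blockers(register)])
```

Treating unmitigated high-scoring risks as launch blockers is what turns the assessment from paperwork into a gate that actually shapes the release.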

Our comprehensive guide on AI governance best practices breaks down how to build a strong internal framework from the ground up. This approach doesn't just reduce risk; it builds deep, lasting trust with your users. The future of AI will be defined by those who build it responsibly.

Frequently Asked Questions

When you start shifting your AI development to focus more on people, a lot of good questions come up. We see these all the time. Here are some straightforward answers to the most common ones, with practical advice to guide you as you build more human-centered AI.

What Is the First Step to Making Our AI More Human-Centered?

The single most important first step is a mental one: shift from a technology-first mindset to a people-first one.

Actionable Step: Before writing a single line of code for your next AI project, conduct at least five interviews with your target end-users. Create simple "user journey maps" to visualize their current process, pinpointing their specific frustrations and needs. This foundational research becomes your North Star, ensuring every technical decision is tied directly to solving a real human problem.

Does Human-Centered AI Mean Less Powerful or Accurate AI?

Not at all. The goal isn't to sacrifice performance but to optimize it for human collaboration and trust. It's a classic trade-off: is a super-complex, high-accuracy "black box" model better than a slightly less accurate one that's completely transparent?

In high-stakes fields like healthcare or finance, the transparent model is almost always more valuable and safer. This is a core challenge in building trustworthy systems. As we've covered in our deep dives on Explainable AI (XAI), the focus is on creating an effective human-AI team whose combined performance can exceed what either could achieve alone.

The real measure of success in human-centered AI is not just raw accuracy, but the overall effectiveness of the combined human-machine system. A slightly less accurate but fully understandable AI is often superior to a marginally more accurate but completely opaque one.

How Can a Small Business Implement These Principles on a Budget?

Good news: human-centered AI is about your methodology, not about buying expensive tools. Startups and small businesses can absolutely do this cost-effectively by building a tight feedback loop with users early and often.

Actionable Insight: Use free and low-cost tools to embed user feedback into every development sprint.

- User Interviews: Conduct 3-5 targeted video calls using free tools like Google Meet to uncover core user needs.

- Simple Surveys: Use Google Forms or SurveyMonkey's free tier to get quick quantitative feedback on features.

- Low-Fidelity Prototypes: Test concepts using simple, free wireframing tools like Figma or even just paper sketches. This lets you fail fast and cheap before committing to heavy development costs.

The key is to adopt an agile, iterative process. When user feedback is a core part of every single sprint, you naturally build a product that stays aligned with human needs right from the start.

At DATA-NIZANT, we believe that building intelligent systems starts with understanding people. Our expert analyses and in-depth articles are designed to help you navigate the complexities of AI, from governance to implementation. Explore more actionable insights at https://www.datanizant.com.