Prompt engineering is the art and science of crafting precise instructions—or "prompts"—to guide Large Language Models (LLMs) to generate exactly the output you need. This isn't about complex coding; it's about communicating with clarity and strategic intent. A well-crafted prompt turns a simple question into a powerful command, unlocking the AI's full potential to solve real-world problems.

What Is Prompt Engineering Anyway?

Think of an LLM as a brilliant but inexperienced assistant. If you give a vague instruction like, "Draft a marketing email," you'll get a generic, unusable result. But if you provide clear, detailed instructions, that same assistant can produce a masterpiece. That’s the core of prompt engineering: structuring your requests to get the best possible results from an AI.

It’s the skill that transforms you from a casual AI user into a power user. It's the difference between asking "write about shoes" and commanding an AI to produce a compelling, 150-word product description for a new line of trail running sneakers, targeted at eco-conscious millennial hikers, written in an adventurous and inspiring tone.

More Than Just a Buzzword

Prompt engineering has become a critical skill for anyone using AI tools, from marketers and writers to developers and data analysts. As we see in applications like machine learning in marketing, the ability to guide AI models effectively has a direct impact on productivity and the quality of work. It’s the key to unlocking the practical, day-to-day power of AI.

The role of a "prompt engineer" is a new and evolving specialization. A 2025 study of AI job postings found that while dedicated "prompt engineer" titles were still rare (less than 0.5% of related AI jobs), the required skills were highly specialized. The analysis showed that 22.8% of these job descriptions demanded deep AI knowledge, 21.9% prioritized communication skills, and 18.7% required specific prompt design expertise. You can learn more about the high value of prompt engineering roles in today's job market.

An Actionable Example of Prompt Engineering

Let's apply this to a common task: generating a social media post.

Vague Prompt (Ineffective):

"Create a social media post about our new productivity app."

This is too broad. The AI has to guess the audience, tone, key features, and desired action. The result will be bland and ineffective.

Engineered Prompt (Actionable):

"Act as a social media marketing expert with a witty and engaging voice. Craft a 280-character Twitter post announcing our new productivity app, 'Zenith Focus.' Highlight its main benefit: blocking digital distractions to help users enter a 'flow state.' End with a compelling question to boost engagement and include the hashtag #Productivity."

This actionable prompt provides:

- A Persona: "Act as a social media marketing expert…"

- Specific Context: "…announcing our new productivity app, 'Zenith Focus.'"

- Clear Constraints: "Craft a 280-character Twitter post…"

- A Defined Goal: "Highlight its main benefit… End with a compelling question…"

By providing clear, structured instructions, you turn a vague request into a strategic command. This ensures the output isn't just correct but effective. This is the essence of successful prompt engineering.
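The four components listed above can also be assembled programmatically. What follows is a minimal Python sketch, assuming a hypothetical helper named `build_prompt` (it is not part of any library). It only joins strings and does not call a model, and the component wording is lightly adapted from the engineered prompt above.

```python
def build_prompt(persona: str, context: str, constraints: str, goal: str) -> str:
    """Join the four prompt components into one instruction string."""
    parts = [persona, context, constraints, goal]
    # Skip empty components and trim stray whitespace around each one.
    return " ".join(p.strip() for p in parts if p.strip())


# Component text adapted from the 'Zenith Focus' example in this article.
prompt = build_prompt(
    persona="Act as a social media marketing expert with a witty and engaging voice.",
    context="We are announcing our new productivity app, 'Zenith Focus.'",
    constraints="Craft a 280-character Twitter post.",
    goal=(
        "Highlight its main benefit: blocking digital distractions to help users "
        "enter a 'flow state.' End with a compelling question to boost engagement "
        "and include the hashtag #Productivity."
    ),
)
print(prompt)
```

Keeping the components separate makes it easy to change one of them, such as the persona, while holding the others fixed.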

The Core Principles of Effective Prompting

To get consistently great results from a Large Language Model (LLM), you must move beyond simple questions and start thinking like a director. Prompt engineering isn't about finding "magic words." It's about mastering a set of core principles that turn your ideas into crystal-clear instructions.

Think of these principles as the grammar for communicating with an AI. By internalizing them, you give the model everything it needs to understand what you want, how you want it, and why. This is how you eliminate guesswork and transform generic outputs into precise, valuable assets.

Principle 1: Assign a Persona

One of the most powerful and actionable techniques is giving the AI a role to play. When you tell an LLM to "act as" an expert, you steer it toward the vocabulary, framing, and communication style associated with that profession, which tends to focus the output and raise its quality.

Practical Example:

- Before (Vague Request): "Write some marketing copy for a new coffee blend."

- After (With Persona): "Act as an expert coffee marketer with 15 years of experience launching luxury brands. Write marketing copy for a new single-origin Ethiopian coffee blend."

This simple change frames the entire task, guiding the AI to produce copy that is far more sophisticated and targeted.

Principle 2: Provide Deep Context

AI models don't know your project, goals, or audience unless you tell them. Providing context is crucial for getting useful results. The more relevant details you include, the less the AI has to guess. For an in-depth look at how these models work, our guide on generative AI and large language models explains why context is so vital.

Actionable Insight: Create a Context Checklist

Before writing your prompt, answer these questions to build context:

- Target Audience: Who is this for? (e.g., "beginner data analysts," "C-suite executives")

- Goal: What is the desired outcome? (e.g., "to generate leads," "to educate users," "to summarize key findings")

- Key Information: What details are non-negotiable? (e.g., "mention our 'Smart Sync' feature," "the report focuses on Q3 sales data")
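The checklist above could be captured in a small data structure so that a prompt is never drafted with an item left blank. This is a sketch only: `ContextChecklist` and its methods are hypothetical names chosen for illustration, and the sample values come from the examples in the checklist.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class ContextChecklist:
    """Hypothetical container for the three checklist questions."""
    target_audience: str = ""
    goal: str = ""
    key_information: List[str] = field(default_factory=list)

    def missing(self) -> List[str]:
        """Return the names of checklist items that are still unanswered."""
        unanswered = []
        if not self.target_audience.strip():
            unanswered.append("target_audience")
        if not self.goal.strip():
            unanswered.append("goal")
        if not self.key_information:
            unanswered.append("key_information")
        return unanswered

    def to_context_block(self) -> str:
        """Render the answers as a 'Context:' section to paste into a prompt."""
        lines = [
            f"Target audience: {self.target_audience}",
            f"Goal: {self.goal}",
            "Key information: " + "; ".join(self.key_information),
        ]
        return "Context:\n" + "\n".join(lines)


checklist = ContextChecklist(
    target_audience="beginner data analysts",
    goal="to educate users",
    key_information=["mention our 'Smart Sync' feature"],
)
print(checklist.missing())          # names of unanswered items, if any
print(checklist.to_context_block())
```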

Principle 3: Set Clear Constraints and Define the Format

Telling the AI what to do is only half the battle. You also need to tell it what not to do and how to structure the final output. Constraints act as guardrails, preventing the model from going off-topic or delivering information in an unusable format.

An unconstrained AI is like an artist with an infinite canvas—the result can be overwhelming and unfocused. Constraints provide the frame and the palette, directing creativity toward a specific, useful outcome.

Clearly defining your desired format is a critical constraint. Do you need a bulleted list, a JSON object, a formal email, or a table? Specifying this upfront saves a massive amount of time you'd otherwise spend editing.
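When the requested format is machine-readable, such as a JSON object, the reply can be checked automatically before anyone reads it. The sketch below assumes a hypothetical format instruction and a hand-written stand-in for the model's reply. It uses only Python's standard `json` module, and `parse_reply` is an illustrative helper, not an existing API.

```python
import json

# Hypothetical format instruction that would be appended to a prompt.
FORMAT_INSTRUCTION = (
    'Respond with a JSON object containing exactly two keys: '
    '"summary" (a string) and "action_items" (a list of strings).'
)


def parse_reply(reply: str, required_keys: set) -> dict:
    """Parse a reply as JSON and verify that the requested keys are present."""
    data = json.loads(reply)  # raises json.JSONDecodeError (a ValueError) on non-JSON text
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    missing = required_keys - set(data)
    if missing:
        raise ValueError(f"reply is missing keys: {sorted(missing)}")
    return data


# Hand-written stand-in for a model reply; this is not real model output.
sample_reply = '{"summary": "Sales rose in Q3.", "action_items": ["Review pricing"]}'
parsed = parse_reply(sample_reply, {"summary", "action_items"})
print(parsed["summary"])
```

A reply that fails this check is a signal to tighten the format instruction rather than to edit the output by hand.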

Practical Example: The Transformation

Let's see how these principles combine to transform a vague request into a powerful, actionable instruction.

Transforming Vague Prompts into Powerful Instructions

| Ineffective Prompt (Before) | Effective Prompt (After) | Core Principle Applied |
|---|---|---|
| "Summarize the attached article about AI trends." | "Act as a senior technology strategist preparing a briefing for our non-technical executive team." | Persona |
| (No context provided) | "Context: Your goal is to inform our leadership about the commercial implications of the report. They are busy and need a high-level overview." | Context |
| (No format or constraints) | "Format: Start with a one-sentence summary, then three bullet points on business impact, and conclude with one action item. Constraint: Keep it under 200 words and use jargon-free language." | Constraints & Format |

This transformation highlights the night-and-day difference these principles make. The first prompt is a gamble; the second is a well-defined directive.

Final Actionable Prompt:

"Act as a senior technology strategist preparing a briefing for our non-technical executive team.

Context: Your goal is to quickly inform our leadership about the most important commercial implications of the attached AI trends report. They are busy and need a high-level overview.

Task: Read the attached article and produce a summary.

Format:

- Start with a single, powerful sentence summarizing the core takeaway.

- Follow with three bullet points, each explaining a key trend and its direct business impact.

- Conclude with one sentence on the most immediate action our company should consider.

Constraint: The entire summary must be under 200 words and written in clear, jargon-free language."

By applying these core principles, we’ve turned a lazy request into a precise instruction that is far more likely to produce a relevant, immediately usable output.

A Repeatable Prompt Engineering Workflow

Great prompts are rarely created in a single flash of genius; they are the result of a deliberate, iterative process. To get consistently high-quality results, shift from guessing to a structured method. A repeatable prompt engineering workflow provides that structure, turning a potentially frustrating task into a reliable system.

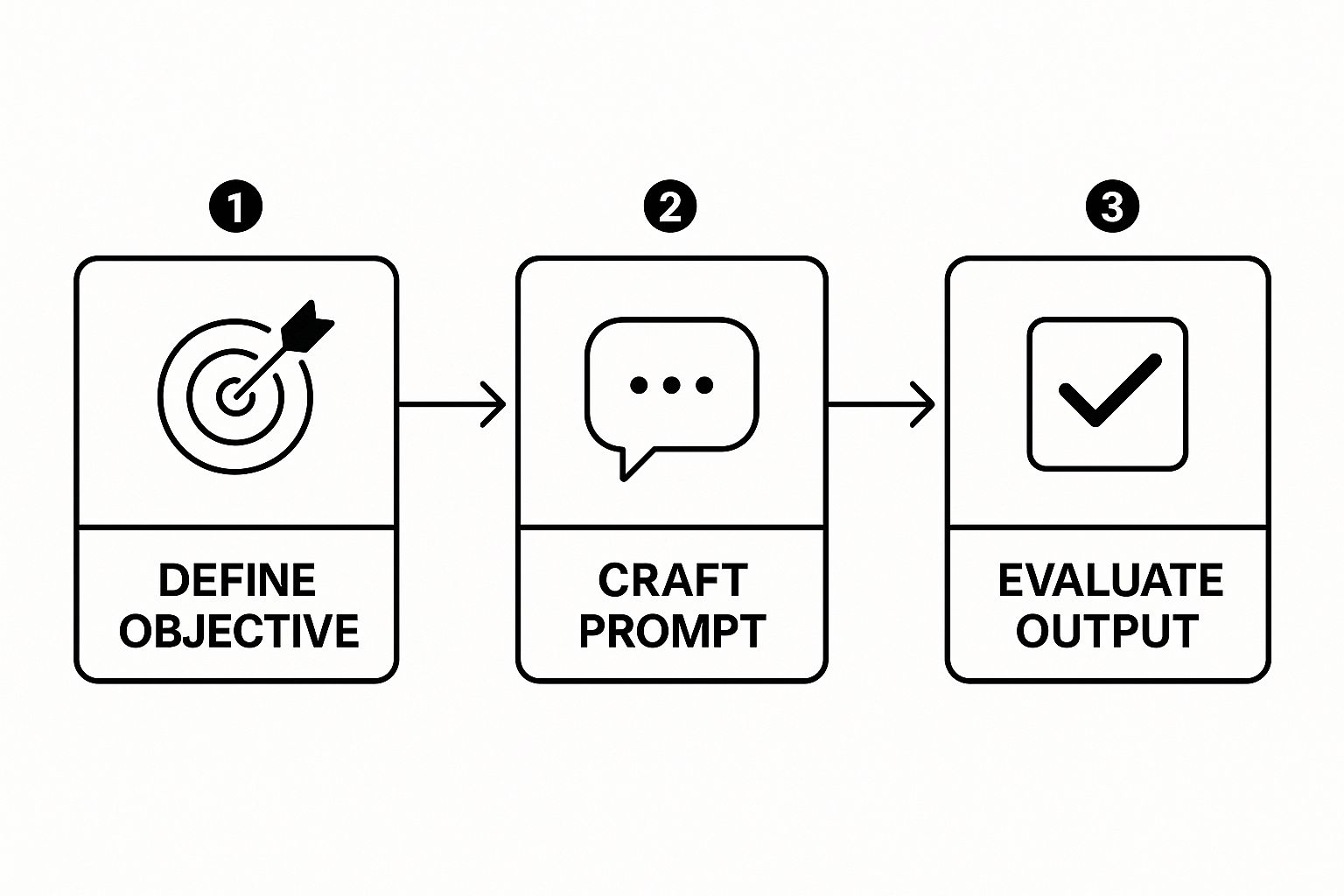

Think of this workflow as a continuous loop: define your objective, craft an initial prompt, analyze the output, and refine your instructions based on the results. This iterative refinement is where you progressively shape the AI’s response to perfectly match your needs.

This process flow shows the core loop of defining an objective, crafting a prompt, and evaluating the output.

The key takeaway here is that prompt engineering is a continuous cycle of refinement, not a one-and-done action.

The Five-Stage Prompting Cycle

This five-stage cycle offers an actionable path from a vague idea to a polished, high-performing prompt.

- Define the Goal: What specific, measurable outcome are you trying to achieve?

- Draft the Initial Prompt: Create your first version using the core principles of persona, context, and constraints.

- Analyze the Output: Critically evaluate the AI's response. Where does it meet your goal, and where does it fall short?

- Refine and Iterate: Pinpoint weaknesses in the output and trace them back to your prompt. Add constraints, clarify instructions, or provide better examples.

- Test with Variations: Once you have a strong prompt, test it with slight variations in wording to ensure it is robust and delivers consistent results.
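The draft, analyze, and refine stages of this cycle can be sketched as a loop. The code below is an illustration under stated assumptions: `iterate_prompt`, `stub_model`, and `too_long` are hypothetical names, and `stub_model` is a hard-coded stand-in for a real LLM call so the example runs without any external service.

```python
from typing import Callable, Optional, Tuple


def iterate_prompt(
    prompt: str,
    generate: Callable[[str], str],
    find_problem: Callable[[str], Optional[str]],
    max_rounds: int = 3,
) -> Tuple[str, str]:
    """Draft, analyze, and refine until the output passes or rounds run out."""
    output = generate(prompt)
    for _ in range(max_rounds):
        problem = find_problem(output)  # Analyze: None means the output is acceptable.
        if problem is None:
            break
        # Refine: add a constraint that addresses the observed weakness.
        prompt = f"{prompt}\nConstraint: {problem}"
        output = generate(prompt)
    return prompt, output


def stub_model(prompt: str) -> str:
    """Stand-in for an LLM: replies briefly only when the prompt asks for brevity."""
    if "under 20 words" in prompt:
        return "Q3 revenue grew; churn needs attention."
    return "word " * 50


def too_long(output: str) -> Optional[str]:
    """Return a corrective instruction if the output has 20 or more words."""
    if len(output.split()) >= 20:
        return "Keep the summary under 20 words."
    return None


final_prompt, final_output = iterate_prompt(
    "Summarize the attached marketing report.", stub_model, too_long
)
print(final_prompt)
print(final_output)
```

In practice the analysis step is usually a human judgment rather than a function, but writing the check down, even informally, makes each refinement traceable to a specific weakness.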

Actionable Example: Summarizing a Marketing Report

Let's walk through this workflow with a common business task: summarizing a dense data analysis report for a non-technical audience. This is a frequent challenge in fields like machine learning in marketing, where complex findings must be communicated clearly to stakeholders.

Stage 1: Define the Goal

Our objective is to create a concise, jargon-free summary of a quarterly marketing analytics report for the executive team. The summary must spotlight three key performance indicators (KPIs), one major success, and one area for improvement.

Stage 2: Draft the Initial Prompt

A first attempt might be:

"Summarize the attached marketing report."

This is too vague and lacks the necessary detail to produce a useful summary for our specific audience.

Stage 3: Analyze the Output

The AI would likely return a generic, technical summary, restating facts without prioritization or business context. It would be unsuitable for busy executives.

Stage 4: Refine and Iterate

Now, we apply our principles to create a much more effective prompt.

Improved Prompt:

"Act as a senior marketing analyst reporting to the executive team. Your audience is non-technical and needs a high-level overview of our Q3 performance.

Task: Read the attached marketing analytics report and create a summary.

Format:

- Start with a one-sentence executive summary.

- List the top 3 KPIs with their results and a brief explanation of what they mean for the business.

- Highlight our biggest success this quarter.

- Identify one key area for improvement.

Constraint: The entire summary must be under 250 words and use clear, simple language. Avoid all marketing jargon."

This revised prompt is a massive improvement. It guides the AI to produce an output that is accurate, strategically aligned with the intended goal, and immediately actionable for the audience.

Prompt Engineering in the Real World

, 'monthly_sales' (float), and 'region' (string). The DataFrame contains sales data for the last quarter.

Task: Generate a Python script that creates a grouped bar chart to compare 'monthly_sales' across different 'product_category' for each 'region'.

Formatting Requirements:

- Use the Seaborn library for the visualization.

- The x-axis should represent 'product_category'.

- The y-axis should represent 'monthly_sales'.

- The bars should be grouped by 'region'.

- Add a clear title: "Monthly Sales by Product Category and Region".

- Label the x-axis "Product Category" and the y-axis "Total Monthly Sales".

- Ensure the legend is clearly visible.

Why This Prompt Works: This prompt leaves little to chance. It assigns a specific persona, provides complete context (the DataFrame structure), defines a clear task, and includes a checklist of formatting requirements. This level of detail makes it far more likely that the AI produces code you can run with little or no editing.

Example 2: Crafting a High-Converting Marketing Email

A vague request like "write a marketing email" yields bland results. An engineered prompt detailing the audience, tone, product, and call-to-action (CTA) can produce something truly powerful.

The power of prompt engineering isn't just about speed; it's about raising the quality and strategic focus of AI-generated content. By giving the AI a detailed creative brief, you're guiding it to produce work that hits specific business goals.

Actionable Prompt:

Act as a senior direct-response copywriter with a persuasive and urgent tone.

Product: 'FocusFlow', a new AI-powered productivity app that helps remote workers minimize distractions.

Target Audience: Freelancers and remote employees who struggle with time management.

Goal: Drive sign-ups for a 14-day free trial.

Task: Write a marketing email with the subject line and body copy.

Instructions:

- Subject Line: Must create curiosity and urgency (max 60 characters).

- Email Body:

  - Start with a hook that identifies the audience's main pain point (distractions while working from home).

  - Introduce 'FocusFlow' as the solution, highlighting its key benefit: reclaiming 5-10 hours of productive time each week.

  - Include two bullet points detailing features: 'Smart Notification Filtering' and 'AI-Powered Task Prioritization'.

  - End with a strong, clear call-to-action to "Start Your Free 14-Day Trial".

- Constraint: Keep the email body under 150 words.

Why This Prompt Works: This prompt provides a complete creative brief: a persona, product details, a specific audience, and a clear conversion goal. The step-by-step instructions ensure the output is a polished piece of marketing material, demonstrating why prompt engineering has become a high-impact skill. To learn more, see the high value of prompt engineering roles in today's job market.
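The measurable constraints in the email prompt above (subject length, body word count, and the exact call-to-action text) can be verified in code once a draft comes back. This is a minimal sketch: `check_email` is a hypothetical helper, and the subject and body shown are hand-written placeholders rather than model output.

```python
from typing import List


def check_email(subject: str, body: str) -> List[str]:
    """Return the list of violated constraints (empty if the draft passes)."""
    problems = []
    if len(subject) > 60:
        problems.append("subject line exceeds 60 characters")
    if len(body.split()) >= 150:
        problems.append("body is not under 150 words")
    if "Start Your Free 14-Day Trial" not in body:
        problems.append("call-to-action text is missing")
    return problems


# Hand-written placeholder draft, used only to exercise the checks.
subject = "Still losing hours to distractions?"
body = (
    "Working from home makes it easy to lose focus. FocusFlow helps you "
    "reclaim 5-10 hours each week. Start Your Free 14-Day Trial"
)
print(check_email(subject, body))
```

Checks like these cover only the countable requirements. Tone, persuasiveness, and accuracy still need a human read.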

Example 3: Outlining a Datanizant-Style Technical Blog Post

A good prompt can generate a comprehensive outline, ensuring a technical article is logical, informative, and useful. This example aims to mirror the style and depth of Datanizant's technical guides, such as our article on machine learning model monitoring.

Actionable Prompt:

Act as an expert technical content strategist for Datanizant.

Topic: An introductory guide to Machine Learning Model Monitoring.

Target Audience: Data scientists and ML engineers with 1-3 years of experience.

Goal: Create a comprehensive and easy-to-follow blog post outline.

Task: Generate a detailed outline with an H1, H2s, and bullet points for H3s.

Outline Structure:

- H1: A compelling title about the importance of model monitoring.

- Introduction (H2):

  - Hook: Start with the problem of model drift.

  - Explain what model monitoring is and why it's critical post-deployment.

- Key Concepts to Monitor (H2):

  - Data Drift (H3): Input data changes.

  - Concept Drift (H3): Relationship between inputs and output changes.

  - Performance Metrics (H3): Accuracy, precision, recall.

- A Practical Monitoring Workflow (H2):

  - Setting Baselines (H3).

  - Automated Alerting (H3).

  - Visualization and Dashboards (H3).

- Tools for Model Monitoring (H2):

  - Open-Source Options (H3): Mention tools like Evidently AI.

  - Cloud-Based Solutions (H3): Mention AWS SageMaker Model Monitor.

- Conclusion (H2):

  - Summarize key takeaways.

  - Emphasize that monitoring is an ongoing process, not a one-time setup.

Why This Prompt Works: This prompt establishes a distinct brand persona ("Datanizant"), defines the audience's experience level, and provides a rigid template. By dictating the exact heading structure, it forces the AI to organize the information logically, resulting in a comprehensive and reader-friendly structure ready for writing.

The Surprising History of Prompting

While prompt engineering feels new, its roots trace back decades. The core challenge—how to make a machine understand and execute our intent—has been a central puzzle in computer science since its inception.

Long before today's conversational LLMs, early AI systems required carefully constructed inputs. These early "prompts" were rigid and rule-based, designed to navigate the systems' limited understanding of language and context. The journey from those strict commands to the flexible dialogues we have now is a story of continuous innovation in human-computer interaction.

From Block Worlds to Neural Networks

The field's origins can be traced to foundational AI research in the 1970s. A prime example is Terry Winograd's SHRDLU system (c. 1971), which could understand natural language commands to manipulate virtual blocks. Its success, however, depended entirely on perfectly structured prompts.

The arrival of deep learning models in the 2010s marked a monumental shift. These models could finally begin to grasp the complex relationships between words, laying the groundwork for modern prompt engineering. This evolution mirrors the progression seen in other data science fields; for instance, understanding historical data is key to forecasting, a concept explored in our guide to the ARIMA model in Python.

Understanding this history is crucial. It shows that prompt engineering isn't just a temporary trick for current AI models; it's the modern expression of a fundamental challenge in computer science—making technology a true partner in human thought.

The Modern Era of Instruction

The core principles of clarity, context, and structure have remained constant. What has changed is the incredible scale and capability of the models we now command.

- 1970s – Rule-Based Systems: Prompts were rigid commands like "PUT THE RED BLOCK ON THE BLUE BLOCK."

- 1990s – Early NLP: Instructions became slightly more flexible but were still easily confused by ambiguity.

- 2010s – Deep Learning: Models started understanding semantics (the meaning behind words), enabling more conversational prompts.

- Today – LLMs: We can provide nuanced, multi-layered instructions that blend persona, context, constraints, and examples to guide outputs with unparalleled precision.

This journey from basic commands to complex creative briefs illustrates how prompt engineering has evolved from a technical necessity into a strategic skill—the latest chapter in the ongoing story of how humans and machines learn to communicate.

Common Questions About Prompt Engineering

As you dive into prompt engineering, questions are inevitable. It's a new skill for most, and the information landscape can be confusing. This section provides straightforward answers to common questions, busting a few myths along the way.

Do I Need to Know How to Code?

Actionable Insight: No, you do not need to code to be an effective prompt engineer. The core skill is communication, not programming.

Think of it like driving a car: you don’t need to be a mechanic to be a great driver. Prompt engineering is about giving clear, logical, and creative instructions. It’s rooted in language, critical thinking, and a clear understanding of your objective. While coding skills (like Python) are essential for integrating AI into software or building automated workflows, for the vast majority of users, strong language and strategic thinking skills are far more important.

What Are the Most Common Mistakes Beginners Make?

Beginners often make a few classic mistakes that hinder their results. The good news is they are easy to fix.

The single biggest mistake is being too vague. A prompt like "write about marketing" is a shot in the dark. The second is failing to provide context. The AI doesn't know your audience, goals, or specific details unless you provide them.

Actionable Checklist: Avoid These Common Mistakes

- Forgetting to Assign a Persona: Always tell the AI who to be (e.g., "act as an expert financial advisor").

- Not Setting Constraints: Define boundaries for length, format, and tone to avoid unusable outputs.

- Giving Up After One Try: The best results come from iteration. Treat your first prompt as a draft and refine it based on the output.

Avoiding these simple traps will dramatically improve the quality of your AI-generated content and save you significant editing time.

How Is Prompt Engineering Likely to Change?

The field is evolving rapidly. Sam Altman, CEO of OpenAI, has suggested that the need for "hacky" prompts filled with magic keywords will likely fade as models become better at understanding natural language.

However, the core skill of clearly communicating your intent will become even more valuable. The focus will shift from how you ask to the quality and strategic depth of what you ask.

The future of prompt engineering is less about finding the perfect sequence of words and more about having high-quality ideas and a deep understanding of the outcome you want to achieve. The core skill is strategic thinking, not technical tricks.

As AI becomes more integrated into our tools, responsible use is paramount. Understanding concepts like those in our guide to AI governance best practices will become essential for anyone working professionally with AI.

Which AI Model Is Best for Practicing My Skills?

Choosing the right AI model depends on your goals and budget. Here are a few top contenders for practice.

- For Text Generation (Writing, Summarizing, Analysis): OpenAI's GPT-4o is a fantastic, widely available choice with a powerful free tier perfect for learning the fundamentals. Anthropic's Claude 3 Sonnet is another excellent option, known for handling large documents and balancing performance with cost.

- For Image Generation: Midjourney is the leader for artistic and stylized visuals. It operates on Discord, which is a great learning environment because you can see the prompts others are using. For photorealistic images, DALL-E 3 (available via ChatGPT Plus) is also a top choice.

Actionable Insight: The best way to learn is by doing. Start with the free versions of these models, pick one that suits your task, and begin practicing the core principles: clarity, context, and iteration.

At DATA-NIZANT, we are committed to providing expert insights into the world of AI and data. To continue learning and stay ahead of the curve, explore more of our in-depth articles at https://www.datanizant.com.