When you hear people talking about Generative AI (GenAI) and Large Language Models (LLMs), it might sound like they're talking about the same thing. They're definitely related, but there's a key difference that's useful to understand.

Think of it like this: GenAI is the entire field of creative AI—the artist, if you will. It’s the broad category of technology that can dream up brand new, original content. This could be anything from text and images to music and code. The LLM, on the other hand, is the specialized engine that excels at one particular art form: language. It's the powerhouse that understands and generates human-like text.

How Does Generative AI Actually Work?

Let's demystify what's happening under the hood. Imagine an LLM is a brilliant apprentice who has spent a lifetime reading, absorbing, and finding patterns in a library containing nearly every book, article, and website ever written. This massive training dataset gives it an incredibly deep, statistical understanding of how words, sentences, and concepts are connected.

When you give this apprentice a prompt—say, "write a short story about a robot who discovers music"—it isn't "thinking" like a human. Instead, it draws on its vast knowledge to predict the most likely sequence of words that would form a coherent and relevant story. It’s a highly sophisticated game of pattern-matching and prediction, just operating on a scale that’s hard to comprehend.

The Core Relationship Between GenAI and LLMs

So, how do these two concepts fit together? Generative AI is the big umbrella, covering any AI that creates new content. LLMs are a very specific—and very powerful—type of GenAI that focuses exclusively on text.

Here’s a simple breakdown:

- Generative AI: This is the whole show. It includes models that generate text, images (like Midjourney), code, or music.

- Large Language Models: These are the specialized text engines. They are the technology behind chatbots, summarization tools, and content creation platforms.

Essentially, all LLMs are a form of Generative AI, but not all GenAI systems are LLMs. That said, the advanced language skills of LLMs are so fundamental that they often serve as the foundation for many GenAI tools, even those that produce things other than text.

A Practical Example of LLMs in Action

Let's bring this down to a real-world scenario. A marketing team needs to whip up some social media posts for a new product launch. Instead of starting with a blank page, they turn to an LLM-powered tool. They feed it the key product details and information about their target audience.

Actionable Insight: A prompt like, "Generate three engaging Twitter posts for our new eco-friendly water bottle, targeting millennials who value sustainability," gives the model clear direction. The LLM analyzes this request and spits out several distinct posts, complete with relevant hashtags and calls to action.

A task that could have taken hours of brainstorming is suddenly condensed into a few minutes of review and refinement. The team can then pick the best options and tweak them to perfectly match their brand's voice. It's a good example of how LLMs, one branch of Generative AI, help businesses automate creative work, a theme we've touched on before in our discussions on AI-driven business solutions.

Understanding the Engine Behind LLMs

To really get what makes Large Language Models (LLMs) tick, you have to look under the hood. The single biggest breakthrough that powers modern Generative AI is the transformer architecture, a design that completely changed how machines understand language.

Before transformers came along, AI models had to read text sequentially—word by word, from left to right. This created a huge problem with long-term memory. By the time the model reached the end of a long paragraph, it often forgot the critical details from the beginning. The transformer fixed this with a brilliant mechanism called self-attention.

The Power of Self-Attention

Think of self-attention as the model's ability to figure out which words in a sentence matter most and how they relate to each other, no matter where they are. It’s a lot like how our brains work.

When you read, "The delivery truck, which was painted bright red, arrived late because it got stuck in traffic," your brain instantly links "it" back to "truck," skipping over all the descriptive words in between.

Self-attention does something very similar. It gives every word an "importance score" relative to all the other words in the prompt. This lets the model weigh the significance of different terms to see the bigger picture. If you want to go deeper, our guide on the transformer architecture, the brain behind LLMs, breaks down the technical details.

Actionable Insight: This ability to weigh word importance is what lets an LLM generate responses that are coherent and actually make sense in context. When you ask it a question, it's not just looking at the last word you typed; it’s analyzing your entire prompt to understand the intent and nuance behind it. For example, in the prompt "Explain blockchain to a five-year-old," self-attention helps the model prioritize "five-year-old" and adjust its tone and complexity accordingly, leading to a much better response.
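To make the "importance score" idea concrete, here is a minimal sketch of scaled dot-product self-attention in plain Python. It is a simplification under stated assumptions: the two-dimensional word vectors are made up for illustration, and the learned query, key, and value projections and multiple attention heads that real transformers use are left out.

```python
import math

def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def dot(a, b):
    """Dot product of two equal-length vectors."""
    return sum(x * y for x, y in zip(a, b))

def self_attention(vectors):
    """Scaled dot-product attention where queries, keys, and values are all `vectors`."""
    dim = len(vectors[0])
    all_weights, outputs = [], []
    for query in vectors:
        # Score this word against every word in the sequence, itself included.
        scores = [dot(query, key) / math.sqrt(dim) for key in vectors]
        weights = softmax(scores)
        # The output is a weighted blend of all the word vectors.
        mixed = [sum(w * v[i] for w, v in zip(weights, vectors)) for i in range(dim)]
        all_weights.append(weights)
        outputs.append(mixed)
    return all_weights, outputs

# Made-up vectors for illustration only; real models learn these during training.
tokens = ["truck", "red", "it"]
vectors = [[1.0, 0.0], [0.0, 1.0], [0.9, 0.1]]
weights, outputs = self_attention(vectors)
for token, row in zip(tokens, weights):
    print(token, [round(w, 2) for w in row])
```

Because the invented vector for "it" points in nearly the same direction as the one for "truck," the row printed for "it" puts more weight on "truck" than on "red," which mirrors the delivery truck sentence above.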

The Two-Phase Journey of an LLM

Building a powerful LLM isn't a single step. It's a two-phase journey that starts with massive scale and ends with precise refinement. This process is what turns a general knowledge engine into a specialized tool.

- Phase 1: Pre-training: This is where the model does its foundational learning. It’s fed a mind-boggling amount of text data: a huge chunk of the public internet, books, articles, and more. This stage is self-supervised (often loosely called unsupervised) because the text itself supplies the answers. The model's only goal is to predict the next word in a sentence, over and over again. After doing this billions of times, it starts to internalize grammar, facts, reasoning patterns, and the subtle rhythms of human language.

- Phase 2: Fine-tuning: After pre-training, the model is incredibly knowledgeable but still a generalist. Fine-tuning is what sharpens it for a specific job. This is a supervised process using a much smaller, hand-picked dataset of high-quality examples. For example, to build a customer service bot, developers would fine-tune the base model on thousands of successful support conversations. This teaches it the right tone, product knowledge, and conversational style for the task.
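The two phases can be sketched with a toy next-word predictor. This is only an analogy, not how production models are trained: a real LLM adjusts billions of neural network weights by gradient descent, while this sketch simply counts which word follows which. The sample sentences are invented for the example.

```python
from collections import Counter, defaultdict

def train(counts, text):
    """Update next-word counts in place from whitespace-separated text."""
    words = text.lower().split()
    for current, following in zip(words, words[1:]):
        counts[current][following] += 1
    return counts

def predict_next(counts, word):
    """Return the most frequent follower of `word`, or None if the word is unseen."""
    followers = counts.get(word)
    if not followers:
        return None
    return followers.most_common(1)[0][0]

counts = defaultdict(Counter)

# Phase 1: "pre-training" on general text.
general = "thank you for the music . thank you for the book . thank you for the music"
train(counts, general)
before = predict_next(counts, "the")

# Phase 2: "fine-tuning" on a small, hand-picked set of support-style sentences.
support = "thank you for the order . we shipped the order . the order arrived"
train(counts, support)
after = predict_next(counts, "the")

print(before, "->", after)
```

After the second phase, the same model completes "the" differently because the smaller, targeted dataset shifted its statistics toward the support domain.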

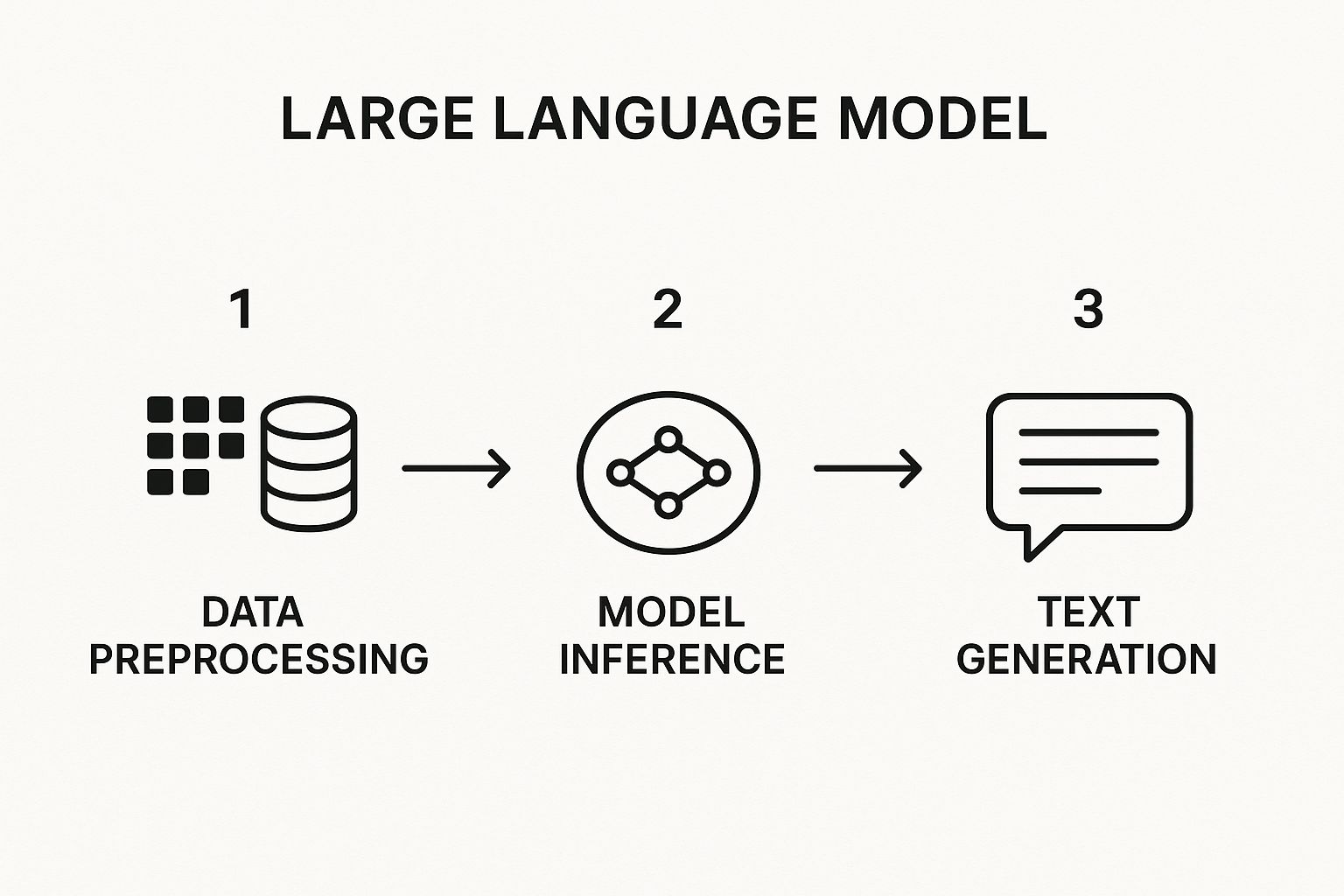

The infographic below gives you a visual of how an LLM handles a prompt, from the moment you hit enter to the final generated text.

This workflow shows the model taking your prompt, passing it through its complex internal layers, and then generating a probabilistic sequence of words that forms a coherent answer.

A Practical Example: From Prompt to Response

Let's walk through a simple prompt to see how this all comes together. Imagine you ask an LLM, "Summarize the key benefits of solar power for a homeowner."

- Input Processing: The model first breaks your sentence down into "tokens," which can be whole words or smaller pieces of words.

- Contextual Understanding: Using self-attention, it immediately pinpoints the core concepts: "summarize," "key benefits," "solar power," and "homeowner." It recognizes "homeowner" as the target audience, which tells it to focus on benefits like cost savings and energy independence, not industrial-scale advantages.

- Information Retrieval: The model taps into its vast pre-trained knowledge about solar energy, home economics, and residential power grids. That knowledge is encoded in the model's parameters rather than looked up in a database.

- Text Generation: Finally, it starts building a response one word at a time, predicting the most likely next word based on the context it has already built. It will probably start with something like, "For homeowners, the primary benefits of solar power include…" and then go on to list points like lower electricity bills and increased property value, all tailored to your original request.
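The input processing step can be sketched as a tiny tokenizer. This is a stand-in under stated assumptions: the vocabulary below is hand-written for this one prompt, and the greedy longest-match rule is a simplification of the learned subword vocabularies that real LLM tokenizers use.

```python
def tokenize(text, vocab):
    """Greedy longest-match split of each word into known pieces."""
    tokens = []
    for word in text.lower().split():
        start = 0
        while start < len(word):
            # Try the longest remaining piece first, then shrink it.
            for end in range(len(word), start, -1):
                piece = word[start:end]
                # Fall back to a single character when nothing in the vocabulary matches.
                if piece in vocab or end == start + 1:
                    tokens.append(piece)
                    start = end
                    break
    return tokens

# Hypothetical vocabulary, chosen by hand for this example.
vocab = {"summarize", "the", "key", "benefit", "s", "of",
         "solar", "power", "for", "a", "home", "owner"}

tokens = tokenize("Summarize the key benefits of solar power for a homeowner", vocab)
print(tokens)
```

Notice that "benefits" and "homeowner" are not in the vocabulary as whole words, so they are split into smaller known pieces, which is why a token is not always the same thing as a word.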

This entire dance—from understanding your question to crafting a helpful summary—is driven by that powerful combination of the transformer architecture, massive pre-training, and targeted fine-tuning.

Putting Generative AI to Work Across Industries

This is where the rubber meets the road. All the technical concepts behind Generative AI (GenAI) & Large Language Models (LLMs) are fascinating, but their real value shows up when they start delivering results in the real world. Across just about every sector you can think of, these tools are shifting from interesting experiments to indispensable assets for solving tough problems, cranking up efficiency, and sparking new ideas.

And this isn't just hype. Companies are putting serious money and strategy behind this shift. Enterprise adoption is moving fast, with roughly 71% of companies expected to have these technologies integrated into at least one part of their business by 2025. The spending numbers tell an even bigger story: global investment in generative AI is projected to hit a staggering USD 644 billion in 2025, a 76.4% jump from 2024. That kind of growth shows just how much confidence businesses have in what GenAI can do. You can dig into more of this data with these generative AI statistics.

Accelerating Software Development

For software developers, the impact of LLMs is direct and undeniable. Think of tools like GitHub Copilot as an AI-powered pair programmer that's always on, ready to help with any part of the coding process. This isn't just about catching typos; these tools can suggest entire blocks of code, help make sense of confusing logic, and even write out the necessary unit tests.

Practical Example: A developer can write a simple comment—like, "Create a Python function to fetch user data from an API and parse the JSON response"—and the LLM can generate a working code snippet right there. This turns a multi-minute task of looking up syntax and structuring code into a matter of seconds.
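For a sense of what a coding assistant might produce from that comment, here is one plausible result using only the Python standard library. Treat it as an illustration rather than the output of any particular tool: the field names `id` and `name`, the example URL in the usage note, and the injectable `opener` parameter are all assumptions made for this sketch.

```python
import json
import urllib.request

def parse_user(payload):
    """Parse a JSON document and keep the fields this example cares about."""
    data = json.loads(payload)
    return {"id": data["id"], "name": data["name"]}

def fetch_user(url, opener=urllib.request.urlopen):
    """Fetch `url` and parse the JSON body.

    `opener` defaults to urllib's urlopen and can be swapped for a fake in tests.
    """
    with opener(url) as response:
        return parse_user(response.read().decode("utf-8"))
```

A call would look like `fetch_user("https://api.example.com/users/42")`, where the URL is hypothetical. Generated code like this still deserves a human review for error handling, timeouts, and authentication before it goes anywhere near production.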

This helps engineering teams in a few key ways:

- Slash Development Time: By automating the grunt work of coding, engineers can pour their energy into the bigger picture, like system architecture.

- Get New Hires Up to Speed Faster: Junior developers or new team members can use AI assistance to navigate an unfamiliar codebase and start contributing much sooner.

- Bump Up Code Quality: LLMs can point out best practices and help developers sidestep common mistakes, leading to code that's cleaner and easier to maintain.

Revolutionizing Marketing and Content Creation

Marketing departments are now using Generative AI to produce content at a scale that was once unimaginable. The dreaded "blank page" is becoming a thing of the past. Marketers can now use LLMs to whip up first drafts for everything from blog posts and social media captions to email newsletters and product descriptions. The trick is to give it a solid, detailed prompt.

Actionable Insight: A marketing manager could feed an LLM a prompt like this: "Write a 500-word blog post about the benefits of our new smart home device. Keep the tone friendly and informative, aimed at tech-savvy homeowners. Make sure to include a call-to-action to visit our product page." In seconds, the model spits out a structured draft that the team can then polish and personalize, saving hours of work.
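If your team writes prompts like this often, it can help to treat the prompt as a template with named ingredients. The sketch below is one way to do that; the function name `build_prompt` and its parameters are hypothetical, and sending the finished prompt to a model is left out on purpose.

```python
def build_prompt(topic, word_count, tone, audience, call_to_action):
    """Assemble a detailed content prompt from its separate ingredients."""
    return (
        f"Write a {word_count}-word blog post about {topic}. "
        f"Keep the tone {tone}, aimed at {audience}. "
        f"Make sure to include a call-to-action to {call_to_action}."
    )

prompt = build_prompt(
    topic="the benefits of our new smart home device",
    word_count=500,
    tone="friendly and informative",
    audience="tech-savvy homeowners",
    call_to_action="visit our product page",
)
print(prompt)
```

Keeping topic, tone, audience, and call-to-action as separate fields makes it easy to reuse the same structure across campaigns while changing only the details.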

This completely changes the creative game. Instead of building from scratch, marketers can focus on curation and strategy—analyzing their audience, refining the message, and making sure the campaign hits its mark. Many are now looking at different AI business solutions to see where these tools can best fit into their day-to-day.

Enhancing the Customer Service Experience

We're finally moving past those clunky, keyword-driven chatbots that never seem to understand what you want. Today's customer service bots, running on advanced LLMs, can grasp nuance, recall past conversations, and provide genuinely helpful, human-like assistance. These AI agents can field a massive volume of routine questions, which frees up human agents to tackle the more complex or emotionally charged customer issues.

Practical Example: Imagine a customer typing this into an e-commerce company's chat: "I ordered a blue shirt last week, but it hasn't arrived yet, and I need to change the delivery address for my other open order."

An LLM-powered bot can juggle all of that at once:

- It understands the intent, recognizing there are two separate requests packed into one sentence.

- It accesses the data, instantly pulling up the customer's order history to check on the blue shirt.

- It takes action, initiating the address change for the other order and asking for confirmation.

This kind of sophisticated, immediate problem-solving keeps customers happy and can transform a support center from a necessary expense into a real asset for the business.

Mapping The Generative AI Market Landscape

The sheer technical power of Generative AI (GenAI) & Large Language Models (LLMs) is matched only by its massive economic footprint. This isn't just another tech trend; it's a fundamental market shift, kicking off an explosion in investment and forcing companies everywhere to rethink their strategies. Businesses have moved past the experimentation phase—they're now actively embedding GenAI to drive hyper-automation and find new levels of productivity, creating a surge in demand that's fueling some wild growth.

Just how fast is it growing? The numbers are pretty staggering. The global generative AI market, recently valued around USD 25.86 billion, is on a rocket ship trajectory to blast past USD 1,005 billion by 2034. That's a compound annual growth rate (CAGR) of about 44.2% over the next decade. Right now, North America is leading the pack, grabbing over 41% of the revenue share. You can dig deeper into the numbers and learn more about the generative AI market's rapid expansion.
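You can sanity-check a growth rate like this yourself. The sketch below applies the standard compound annual growth rate formula to the two valuations quoted above, assuming a 10-year span since the source describes the period only as "the next decade."

```python
def cagr(start_value, end_value, years):
    """Compound annual growth rate, returned as a fraction (0.10 means 10%)."""
    return (end_value / start_value) ** (1 / years) - 1

# Valuations in USD billions, taken from the figures quoted above.
# The 10-year span is an assumption for this calculation.
rate = cagr(25.86, 1005.0, 10)
print(f"{rate:.1%}")
```

Under that assumption, the result lands very close to the quoted 44.2%, so the headline numbers are at least consistent with each other.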

Key Drivers Fueling Market Expansion

So, what's behind this incredible momentum? It really boils down to a few core pressures compelling businesses to pour money into GenAI. The biggest motivator is the relentless push to squeeze more efficiency out of operations and automate complex workflows that older software just couldn't touch.

Practical Example: A large insurance firm can use GenAI to automate the initial review of claims. The AI can scan documents, extract key information, check for inconsistencies, and flag complex cases for human review. This single application can cut claims processing time from days to hours, a clear driver for investment.

Another huge factor is the raw competitive edge GenAI delivers. Companies that get this right can craft incredibly personalized customer experiences, speed up how fast they develop new products, and make smarter, data-backed decisions. This creates a powerful ripple effect, forcing others to adopt the tech just to stay in the game, let alone get ahead.

The Titans of The Generative AI Industry

At the top of this market are the tech giants—the ones with the colossal compute power, endless datasets, and world-class researchers needed to build foundational Large Language Models (LLMs). We're talking about companies like Google (with its Gemini models), Microsoft (through its deep ties with OpenAI), and Meta (with its open-source Llama models). These players are the primary architects of this new world.

These titans are shaping the industry in a few critical ways:

- Setting the Pace of Innovation: Their R&D labs are constantly pushing the limits of what GenAI is capable of, giving us more powerful and flexible models.

- Building Platforms and Ecosystems: They're creating the cloud infrastructure and APIs that let thousands of other companies and startups build their own AI-powered tools on top.

- Driving Enterprise Adoption: Through their enterprise-ready solutions and strategic partnerships, they're making it easier for big organizations to weave GenAI into their core operations securely and at scale.

Actionable Insight: The moves these industry leaders make send waves across the entire market. For instance, when Meta releases a powerful open-source model like Llama, it empowers thousands of startups to build custom solutions without the massive cost of training a model from scratch. This directly shapes the opportunities for smaller companies and steers the overall direction of AI development.

A Look At Market Growth and Opportunity

While the giants dominate the foundational model layer, the rapid market growth is creating a lively ecosystem with opportunities for everyone. Startups are finding their footing by targeting niche applications, building specialized models for specific industries like healthcare or finance, or creating easy-to-use tools that plug into the bigger platforms. This layered structure means innovation isn't just happening in a handful of corporate HQs.

To put this growth into perspective, the table below gives a snapshot of the market's projected trajectory and the forces driving it.

Generative AI Market Growth Projections

This table illustrates the projected growth of the global Generative AI market, highlighting the rapid expansion expected over the next decade.

| Year | Projected Market Valuation (USD Billions) | Growth Driver |
|---|---|---|
| 2025 | ~67 | Widespread Enterprise Integration and API Adoption |
| 2028 | ~200+ | Maturation of Vertical AI Solutions and Automation Tools |
| 2034 | ~1,005 | Full Integration into Core Business and Consumer Applications |

This incredible financial runway makes one thing crystal clear for any business leader or investor: getting a handle on the Generative AI & LLMs market is no longer a "nice to have." It’s absolutely essential for navigating the changing tech landscape and spotting the big opportunities for growth and innovation that are just around the corner.

Navigating the Risks and Ethical Dilemmas

As exciting as Generative AI (GenAI) & Large Language Models (LLMs) are, their power comes with some serious strings attached. Diving into these tools without understanding the pitfalls is like handing the keys of a supercar to someone who’s never driven. It’s on us to get a handle on the inherent risks to make sure these technologies are used safely and ethically.

One of the strangest and most talked-about issues is what we call model 'hallucinations.' This is when an LLM spits out completely fabricated information but presents it with the confidence of a seasoned expert.

Practical Example: A lawyer asks an LLM to find legal precedents for a specific case. The AI might invent several court cases that sound completely plausible, complete with case numbers and judge's names, but are entirely nonexistent. Relying on this information without verification could be disastrous in a real legal proceeding.

The Problem of Inherent Bias

Generative AI models learn by consuming staggering amounts of data from the internet. The problem? That data includes the good, the bad, and the ugly of human history, complete with all our biases, stereotypes, and prejudices. As a result, the models can accidentally bake these biases right into their outputs, sometimes even making them worse.

This isn't just a theoretical problem; it has real-world consequences. Imagine an LLM used to screen résumés that starts favoring candidates from certain backgrounds simply because its training data reflected decades of biased hiring practices. Tackling this means we have to be incredibly deliberate about curating data, constantly auditing the models, and building fairness in from the ground up.

Actionable Insight: The best defense here is a "human-in-the-loop" system. Instead of letting the AI make critical calls on its own, its outputs should always be reviewed and signed off on by a human expert. For the résumé screening example, the AI should only be used to highlight skills and experience, with a human making the final decision on who to interview. This simple step ensures that any glaring bias or inaccuracy gets caught before it can do any real harm.
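Here is a minimal sketch of what a human-in-the-loop gate could look like for the résumé example. Everything in it is hypothetical: `ai_highlight` is a stand-in for a real model call (it just does simple substring matching), and the class and function names are invented for illustration.

```python
from dataclasses import dataclass

@dataclass
class Screening:
    candidate: str
    highlighted_skills: list
    decision: str = "pending_human_review"  # the AI step never sets a final decision
    reviewer: str = ""

def ai_highlight(candidate, resume_text, skills_of_interest):
    """Stand-in for a model call: only surfaces matching skills, never decides."""
    text = resume_text.lower()
    found = [skill for skill in skills_of_interest if skill.lower() in text]
    return Screening(candidate, found)

def human_decide(screening, reviewer, invite):
    """Only this step, run by a named person, can set the final decision."""
    screening.decision = "interview" if invite else "no_interview"
    screening.reviewer = reviewer
    return screening

screening = ai_highlight(
    "Candidate A",
    "Built data pipelines in Python and SQL",
    ["Python", "SQL", "Go"],
)
print(screening.highlighted_skills, screening.decision)

final = human_decide(screening, reviewer="recruiter", invite=True)
print(final.decision, final.reviewer)
```

The design choice that matters is structural: the AI function has no way to produce an "interview" or "no_interview" outcome, so a person always makes the final call.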

Data Privacy and Security Concerns

LLMs are data-hungry. This hunger raises some critical questions about privacy. When you or your employees use a public GenAI tool, your prompts and any data you paste in could be absorbed and used for future training. That’s a huge red flag if you’re dealing with sensitive personal information or confidential business strategies.

Practical Example: An employee trying to be efficient might paste a confidential report into a public AI chatbot to get a quick summary, unknowingly leaking proprietary financial projections. The only way to manage this is with crystal-clear internal policies. For anything sensitive, companies really need to look at private, self-hosted models. Having solid AI governance best practices (https://datanizant.com/ai-governance-best-practices/) is no longer a "nice-to-have"; it's an absolute must.

Misinformation and Malicious Use

The same technology that helps you write a friendly marketing email can also be weaponized to churn out convincing misinformation on a massive scale. This is easily one of the biggest ethical headaches of GenAI. Bad actors can use these tools to generate fake news articles, create armies of fraudulent social media accounts, or craft sophisticated phishing emails that look terrifyingly real.

Actionable Insight: This puts the pressure on everyone—developers need to build in safeguards, and the rest of us need to get smarter. Developers are experimenting with things like digital watermarking to help identify AI-generated content. As users, we must become more critical consumers of information. Your practical step: When you see a surprising claim online, especially one that triggers a strong emotional response, take a moment to cross-reference it with a few reputable news sources before you believe it or share it.

What to Expect Next from Generative AI

The world of Generative AI (GenAI) & Large Language Models (LLMs) moves at a breakneck pace, and it feels like new breakthroughs are announced every other week. When we look ahead, the evolution isn’t just about building bigger and bigger models. The real game-changers are coming from making them smarter, more versatile, and seamlessly woven into our everyday tools.

One of the biggest shifts we're seeing is the jump to multimodal models. For a long time, these systems were stuck in a text-only world. Now, they're breaking free.

Practical Example: Imagine pointing your phone camera at the ingredients in your fridge. A multimodal AI could analyze the image, identify the food items, and instantly generate a recipe that uses them, complete with cooking instructions. This ability to understand and create content across text, images, audio, and video is going to unlock applications that feel far more natural and powerful.

Smaller Models and Hyper-Personalization

While the massive, cloud-based LLMs get all the headlines, a quieter but equally important trend is taking hold: the rise of smaller, highly efficient models. These are designed to run directly on your phone or laptop.

This move to on-device AI brings two massive wins. First, it's a huge boost for privacy since your data stays local. Second, it makes powerful AI features available even when you're offline. This is the foundation for the next wave of hyper-personalization, where your personal AI assistant doesn't just know your generic preferences—it understands your unique context because it has access to your data, offering truly tailored help.

To get this level of customization, developers are getting really good at specializing models for specific jobs. You can get a feel for how this is done by checking out our guide on how to fine-tune an LLM, which walks through adapting a general-purpose model for a niche task.

Actionable Insight: The future of Generative AI isn't one giant brain in the cloud. It's a whole ecosystem of specialized models working in concert—some massive and powerful, others small and personal. For your business, this means you should start thinking less about a single "AI solution" and more about deploying a suite of smaller, purpose-built AI tools for specific tasks like customer email classification or internal document summarization.

Enhanced Reasoning and Deeper Integration

Beyond just creating content, the next generation of LLMs will be much better thinkers. We’re seeing a big push to improve their reasoning capabilities, allowing them to tackle complex, multi-step problems and break them down logically. This means AI tools will graduate from being simple instruction-takers to becoming true collaborative partners that can help you strategize and solve real problems.

Finally, get ready for AI to disappear into the software you already use every day. Instead of firing up a separate AI tool, Generative AI & LLMs will become an invisible, foundational layer in your existing apps. Think of a spreadsheet that automatically analyzes data and builds charts from a simple voice command, or an email client that drafts intelligent, context-aware replies for you. This seamless integration will make AI feel less like a tool you use and more like a natural part of how you work.

As Generative AI (GenAI) & Large Language Models (LLMs) pop up in more conversations, you’re bound to have questions. Let's tackle some of the most common ones to give you a clearer picture of what this technology is all about.

To make things even easier, here’s a quick summary of the key takeaways.

Quick Answers to Common GenAI Questions

| Question | Brief Answer |
|---|---|
| What's the difference between GenAI and LLMs? | GenAI is the broad category of AI that creates new content. LLMs are a type of GenAI that specializes in text. |
| Can GenAI create things other than text? | Yes. It can generate images, code, music, and more. LLMs are just the most well-known example. |
| Is it risky to use public GenAI tools for work? | Absolutely, especially with sensitive data. Information entered into public tools can be used for model training, creating a privacy risk. |
| How do LLMs actually understand questions? | They don't "understand" like humans. They use natural language processing to find statistical patterns in text and predict the most likely sequence of words for an answer. |

Now, let's explore these points in a bit more detail.

What Is The Main Difference Between GenAI And LLMs?

Think of it this way: Generative AI is the entire orchestra. It’s the broad category of AI that can create something entirely new.

A Large Language Model (LLM), on the other hand, is like the first-chair violinist—a highly specialized and prominent member of that orchestra. Its specific talent is mastering language.

So, while every LLM is a form of GenAI, not all GenAI is an LLM. An image generator like Midjourney is a perfect example of GenAI that isn't a language model.

Can GenAI Be Used For More Than Just Text?

Absolutely. While text-generating LLMs get most of the headlines, the wider world of Generative AI is busy working with all sorts of data.

Here are just a few examples of what it can do:

- Image Generation: Creating photorealistic images or unique illustrations from simple text prompts.

- Code Generation: Writing functional code snippets in languages like Python or JavaScript to help developers work faster.

- Audio and Music Creation: Composing original melodies or even generating realistic voiceovers for videos.

Are There Risks To Using Public GenAI Tools For Business?

Yes, and this is a big one. The primary risk is data privacy. When an employee pastes sensitive company information—think financial reports, customer lists, or internal strategy documents—into a public GenAI chat, that data might be absorbed into the model’s training set.

This effectively means you could be leaking confidential information without even realizing it.

Actionable Insight: Every company needs a clear policy on using public AI tools. For any tasks involving sensitive information, stick to private, enterprise-grade GenAI solutions or self-hosted models. This is the only way to keep your data secure and under your control.

How Do LLMs Understand My Questions?

Here's the fascinating part: they don't understand your questions in the way a person does. There's no consciousness or comprehension happening.

Instead, they rely on a powerful technique called natural language processing. Your prompt is broken down into small units called tokens, and each token is mapped to numbers the model can compute with. Through training on immense datasets, the model learns the statistical probability of which tokens tend to follow others.

When you ask something, the LLM is essentially making an incredibly sophisticated guess, predicting the most probable sequence of tokens that forms a coherent and relevant answer. You can see this foundational technology at work in a whole range of natural language processing applications, from chatbots to translation services.
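That "sophisticated guess" comes down to turning scores into probabilities. The sketch below shows the last step for a single token, using the softmax function that language models commonly apply to their raw scores. The candidate tokens and their scores are invented for illustration; a real model produces a score for every token in its vocabulary.

```python
import math

def next_token_probabilities(scores):
    """Softmax: convert raw per-token scores into probabilities that sum to 1."""
    peak = max(scores.values())  # subtract the max for numerical stability
    exps = {token: math.exp(score - peak) for token, score in scores.items()}
    total = sum(exps.values())
    return {token: value / total for token, value in exps.items()}

# Invented scores for what might follow "the primary benefits of solar".
scores = {"power": 4.0, "panels": 3.0, "banana": -2.0}
probs = next_token_probabilities(scores)
best = max(probs, key=probs.get)

for token, p in sorted(probs.items(), key=lambda item: -item[1]):
    print(f"{token}: {p:.3f}")
print("most likely next token:", best)
```

The model then appends the chosen token and repeats the process, which is how a full answer gets built one token at a time.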

At DATA-NIZANT, we provide expert-authored articles and deep-dive analyses to help you master the complex world of AI and data science. Stay ahead of the curve by exploring our latest insights at https://www.datanizant.com.