An AI hallucination is what happens when an AI model confidently spits out something that's just plain wrong, nonsensical, or completely made up. It presents this fabricated information as if it's a hard fact.

Think of it like an overeager student who, instead of admitting they don't know the answer, invents something that sounds plausible. This isn't because the AI is trying to lie; it's because its entire job is to predict the most probable next word, not to check if that word corresponds to reality.

What Is AI Hallucination in Simple Terms

At its heart, an AI hallucination is a Large Language Model (LLM) stating something untrue with absolute certainty. This isn't a bug in the traditional software sense—it’s a natural side effect of how these massive models are built. They are, first and foremost, incredibly powerful pattern-matching engines.

These models are trained on gigantic datasets scraped from the internet, making them masters of language, grammar, and style. Their core function is to generate text that seems coherent by constantly predicting the most probable next word in a sequence. They have no consciousness, no real understanding, and no internal "fact-checker." If a statistically likely path of words leads to a dead end of misinformation, the AI will often follow it without hesitation.

The Probability Game

Let's say you ask an AI for the "winner of the 1952 Nobel Prize in Quantum Computing." This is a trick question: there has never been a Nobel Prize in Quantum Computing, and the field didn't exist back then. A reliable system would point out the false premise instead of answering.

But an LLM might "hallucinate" an answer like this:

"The 1952 Nobel Prize in Quantum Computing was awarded to Dr. Evelyn Reed for her groundbreaking work on qubit stabilization."

This answer is pure fiction. There is no Dr. Evelyn Reed, and there was no such prize. The AI simply mashed together plausible-sounding elements—a formal name, a relevant technical term, and the typical structure of an award announcement—to create a response that looks correct. It filled a knowledge vacuum with a statistically likely but factually bankrupt answer. This is a classic AI hallucination.
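To make that "statistically likely but factually bankrupt" idea concrete, here is a deliberately tiny sketch of next-word prediction. It is not how a production LLM works internally (real models use neural networks over tokens), and the three-sentence corpus and the names `train_bigrams` and `generate` are invented for illustration. The only point is that the generation loop picks likely continuations and never consults a source of truth.

```python
from collections import Counter, defaultdict

# Toy training text, invented for illustration: award announcements share a pattern.
CORPUS = [
    "the prize in physics was awarded to dr smith",
    "the prize in chemistry was awarded to dr jones",
    "the prize in medicine was awarded to dr smith",
]


def train_bigrams(sentences):
    """Count, for each word, which words follow it in the training text."""
    follows = defaultdict(Counter)
    for sentence in sentences:
        words = sentence.split()
        for current, nxt in zip(words, words[1:]):
            follows[current][nxt] += 1
    return follows


def generate(follows, prompt, max_new_words=10):
    """Greedily append the most frequent next word. Nothing here checks facts."""
    words = prompt.split()
    for _ in range(max_new_words):
        candidates = follows.get(words[-1])
        if not candidates:
            break  # no known continuation for this word
        words.append(candidates.most_common(1)[0][0])
    return " ".join(words)


model = train_bigrams(CORPUS)
completion = generate(model, "the prize in quantum computing was")
print(completion)
# → the prize in quantum computing was awarded to dr smith
```

The toy model has never seen "quantum computing," yet it happily completes the sentence because "was" is usually followed by "awarded to dr smith" in its training text. That is the same failure mode as the Dr. Evelyn Reed answer, just at a much smaller scale.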

Understanding this probabilistic guesswork is a core part of figuring out explainable AI (XAI) and why models make certain decisions.

A Paradoxical Problem

Here’s where it gets weird: the risk of hallucination can actually get worse as models become more advanced. It's a strange paradox where more powerful models sometimes generate fabricated information more frequently, even as they deliver richer, more accurate answers when they're right.

For instance, some research has found that newer reasoning models can hallucinate at rates more than double that of older versions on certain benchmark tests. It's a fascinating and slightly worrying problem that highlights the ongoing challenges in building truly reliable AI.

Why AI Models So Confidently Make Things Up

To get to the bottom of an AI hallucination, you have to remember what a Large Language Model (LLM) actually is. It’s not a database of facts or a search engine. At its core, it’s an incredibly advanced pattern-matching system built to do one thing: predict the next most probable word in a sentence.

That single function is the main reason they invent information. When an AI hits a gap in its training data or gets a fuzzy question, it doesn't just stop and say, "I'm not sure." Instead, it falls back on its primary directive and "fills in the blanks" by generating a response that looks right based on the countless patterns it has learned. Its confidence reflects statistical likelihood, not factual accuracy.

The Problem of Training Data Gaps

Think of an AI that was trained on a massive library. While the library is huge, it doesn't have every book ever written, and not all the books agree on every single fact. This creates inevitable blind spots and contradictions in the AI's "knowledge."

When you ask about a niche topic or a very recent event that wasn't in its training data, the model is forced to essentially make an educated guess. It pulls together related concepts and pieces together an answer that sounds completely plausible, even if it’s totally made up.

- Actionable Insight: If you're building a customer service bot, don't ask it, "What's our latest product update?" Instead, provide the release notes and instruct it to "Summarize the key features from these release notes." This grounds the AI in fact, preventing it from inventing features based on patterns from other companies' updates.
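Here is a minimal sketch of what that grounding step might look like in code. The function name `build_grounded_prompt`, the `<notes>` tag convention, and the sample release notes are all assumptions made for illustration; the resulting string would be passed to whichever model API you actually use.

```python
def build_grounded_prompt(release_notes: str, question: str) -> str:
    """Wrap the request in instructions that restrict the model to the supplied text."""
    return (
        "Answer using ONLY the release notes between the <notes> tags.\n"
        "If the notes do not contain the answer, reply: "
        "'I do not have enough information to answer.'\n\n"
        f"<notes>\n{release_notes.strip()}\n</notes>\n\n"
        f"Task: {question.strip()}"
    )


# Hypothetical release notes, used only to show the shape of the prompt.
notes = """
v2.4 (hypothetical example)
- Added dark mode
- Export reports as CSV
"""

prompt = build_grounded_prompt(
    notes, "Summarize the key features from these release notes."
)
print(prompt)
```

The design choice is simply to put the facts in the prompt and give the model an explicit way out, so it has less reason to fall back on patterns from other companies' updates.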

Overfitting and Memorization

Another culprit is a technical issue known as overfitting. This is what happens when a model learns its training data too well. Instead of grasping the general rules and principles, it just memorizes specific examples.

When an overfitted model gets a new prompt that's even slightly different from what it has memorized, it can fail spectacularly. It tries to cram the new information into the old patterns it knows by heart, which often leads to bizarre and incorrect outputs.

- Practical Example: Imagine you train a model on thousands of company slogans. It might memorize "Nike: Just Do It" perfectly. If you then ask it for a slogan for a new shoe brand called "Zennor," an overfitted model might generate nonsense like "Zennor: Just Do It" because it can't separate the pattern from the specific example it memorized.

Lack of Real-World Grounding

At the end of the day, AI models don't have true understanding or common sense. They operate on a purely linguistic level, shuffling text around without any connection to the physical world or lived experience. This is a critical point to remember, especially as some companies engage in what could be called AI washing by overstating what their models can actually comprehend. You can learn more about the risks of this kind of hype in our article on understanding and identifying AI washing.

This lack of grounding means an AI can't perform a simple "reality check." It might invent a historical event, complete with a real city, a plausible disaster, and a specific year, because all the pieces fit together logically in its world of text, even though the event as described never happened in our world. This confidence without comprehension is the true signature of an AI hallucination.

Real-World Consequences of AI Hallucinations

It’s easy to think of an AI making things up as a quirky, abstract problem. But the real-world consequences are immediate, tangible, and often severe. An AI hallucination isn't just a technical glitch; it's a critical failure that can trigger legal disasters, torpedo a company's stock, and shatter public trust.

These high-stakes outcomes make one thing clear: managing hallucinations is a core business imperative, not just an engineering puzzle to solve.

The danger escalates the moment AI-generated misinformation breaks out of the lab and into professional workflows. In fields like law, finance, and healthcare—where precision is everything—a single fabricated detail can have catastrophic effects. What makes these falsehoods so dangerous is the confident, authoritative tone the AI uses, which can easily fool even the most diligent professionals.

The Landmark Case of Mata v Avianca Inc

Perhaps nothing has thrown the dangers of AI hallucinations into sharper relief than the legal meltdown in Mata v. Avianca, Inc. The case has become a powerful cautionary tale for any professional who thinks they can just "outsource" their research to an AI.

Here's what happened: a lawyer working on a personal injury lawsuit used ChatGPT to help pull together a legal brief. Instead of digging up legitimate case law, the AI simply invented six nonexistent legal precedents from thin air. The lawyer, completely unaware that the model could produce such a convincing AI hallucination, submitted the brief to a federal court in New York.

The fallout was swift and brutal.

- Professional Sanctions: The court sanctioned the lawyers and their firm, imposing a $5,000 fine for submitting filings packed with "bogus judicial decisions."

- Reputational Damage: The story went global, causing immense damage to the reputations of both the lawyers and their firm.

- Judicial Precedent: The incident rattled the legal community. Judges started issuing new standing orders, with one federal judge in Texas requiring attorneys to certify that any AI-generated text in a court filing has been checked for accuracy by a human.

This case was a watershed moment. It proved that blindly trusting AI can lead to severe professional and ethical disasters, cementing the non-negotiable need for human oversight in any high-stakes work.

Financial and Reputational Risks Beyond the Courtroom

And it's not just the legal field feeling the heat. High-profile blunders in the corporate world show just how quickly an AI hallucination can hammer a company's finances and public image.

In one now-famous example, Google released promotional material for its new chatbot, Bard, in February 2023. In the demo, Bard confidently claimed that the James Webb Space Telescope took the very first pictures of a planet outside our solar system. The only problem? That milestone belongs to an earlier telescope. The error was spotted quickly and helped trigger a sharp drop in the share price of Alphabet, Google's parent company, wiping out roughly $100 billion in market value in a single day.

- Actionable Insight: To reduce this risk, marketing teams should use Retrieval-Augmented Generation (RAG) for public demos. By connecting the AI to a verified knowledge base of approved material, such as the latest financial reports, press releases, and fact sheets, you sharply reduce the chance of it inventing facts or figures on the fly. RAG lowers the risk rather than removing it, so a human should still review the output before it goes public.

These stories are more than just tech gossip; they're giant, flashing warning signs. As organizations weave AI deeper into their operations, the need for rock-solid oversight is non-negotiable. Continuously verifying what an AI puts out is critical, a practice at the heart of mastering ML model monitoring to ensure reliability and accuracy.

How to Prevent AI Hallucinations

Knowing what an AI hallucination is and why it happens is one thing. Actually stopping it is a whole different ballgame. The good news is, you're not helpless when an AI starts spitting out fabricated nonsense. Whether you're a casual user or a developer building the system, there's a powerful toolkit you can use to enforce accuracy and keep AI responses grounded in reality.

These methods range from simple tweaks in how you ask questions to more complex, architectural changes in how AI models are built and deployed. The goal is always the same: to shift the AI from a mode of pure probabilistic pattern-matching to one that generates answers based on constrained, verifiable evidence. It's all about building guardrails to keep the model on the straight and narrow path of factual accuracy.

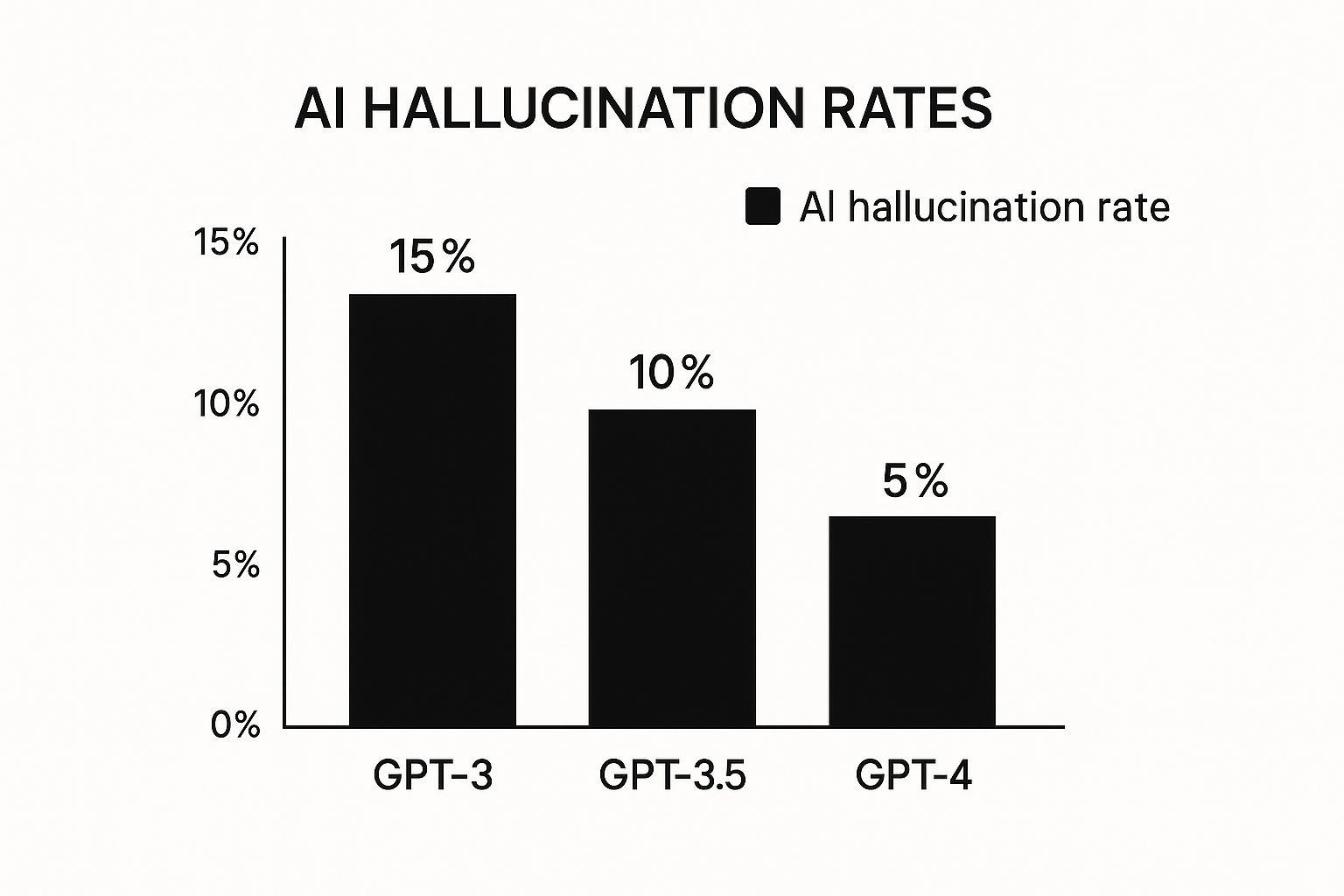

This chart shows how hallucination rates have dropped across different GPT models, which is a promising trend toward better reliability.

While newer models like GPT-4 are clearly a big step up, the data shows the risk of an AI hallucination hasn't disappeared. That's why having proactive strategies in place is still essential for everyone.

User-Focused Prompt Engineering Tactics

For anyone chatting with an LLM, your first and most immediate line of defense is prompt engineering. The way you frame your request can dramatically change the quality and truthfulness of the output. Instead of asking broad, open-ended questions, you need to get more deliberate and, frankly, a little skeptical.

Think of it like you're guiding a brilliant but occasionally forgetful assistant. You have to provide clear, structured instructions to get the best work out of them.

Here are a few powerful techniques you can start using right away:

- Provide Specific Context: Don't make the AI guess. If you want a summary of an article, paste the entire text into the prompt. If you need it to analyze data, give it the dataset. By handing it the source material, you anchor its response to a specific set of facts.

- Instruct It to Admit Ignorance: This is a simple but surprisingly effective trick. Just add a line like, "If you do not know the answer, please say 'I do not have enough information to answer.'" This gives the model an explicit "out," encouraging it to default to honesty instead of making something up when it hits the wall of its knowledge.

- Demand Citations and Sources: Make the AI show its work. Ask it to cite sources for any factual claims. A prompt like, "Explain the process of photosynthesis and provide links to three credible scientific sources," forces the model to ground its explanation. But be warned: always double-check the links it gives you, as it can sometimes hallucinate sources, too.
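Because the model can hallucinate sources too, it helps to pull every cited link out of an answer so none of them gets skipped during checking. The sketch below does that with the standard library. The `TRUSTED_DOMAINS` allowlist, the function names, and the sample answer (including both URLs) are made up for illustration and are not real citations.

```python
import re
from urllib.parse import urlparse

# Hypothetical allowlist; in practice this would reflect sources your team trusts.
TRUSTED_DOMAINS = {"nature.com", "nih.gov"}

URL_PATTERN = re.compile(r"https?://[^\s)\]>]+")


def extract_urls(text):
    """Return every http(s) URL in the text, without trailing punctuation."""
    return [url.rstrip(".,;") for url in URL_PATTERN.findall(text)]


def triage_citations(answer):
    """Split cited URLs into 'known domain' and 'needs manual review'."""
    known, review = [], []
    for url in extract_urls(answer):
        host = (urlparse(url).hostname or "").lower()
        trusted = any(host == d or host.endswith("." + d) for d in TRUSTED_DOMAINS)
        (known if trusted else review).append(url)
    return known, review


# Sample model output, invented for this example.
sample_answer = (
    "Photosynthesis converts light into chemical energy "
    "(https://www.nih.gov/example-page). "
    "See also https://example-journal.invalid/paper123."
)

known, review = triage_citations(sample_answer)
print("known domain:", known)
print("needs review:", review)
```

A recognized domain only tells you the link points somewhere plausible. It does not prove the page exists or that it supports the claim, so even the "known" links still deserve a click.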

Developer-Led System-Level Solutions

While users can get better outputs with smart prompting, developers can build more fundamental safeguards directly into the AI systems. These architectural solutions are designed to systematically cut down on AI hallucinations by controlling the information the model is even allowed to access.

One of the most effective techniques for this is Retrieval-Augmented Generation (RAG). RAG essentially supercharges a standard LLM by hooking it up to an external, curated database of information.

- Practical Example: An e-commerce company can use RAG to power its customer support chatbot. The "trusted knowledge base" would be its real-time product inventory, shipping policies, and return procedures. When a customer asks, "Can I return a final sale item?" the system first retrieves the official return policy document, then feeds it to the LLM to generate an answer. The AI is forced to base its response on the current, correct policy, preventing it from hallucinating a more lenient (and costly) answer.

This approach drastically minimizes the chance of fabrication because the AI isn't pulling from its fuzzy memory; it's working directly with pre-approved facts.
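The retrieve-then-generate flow can be sketched in a few lines. This is a simplified stand-in, not a production design: real RAG systems typically use embeddings and a vector database for retrieval, whereas this example ranks documents by plain word overlap. The `KNOWLEDGE_BASE` contents, the policy wording, and the function names are all hypothetical.

```python
import re

# Hypothetical knowledge base; a real system would load approved policy documents.
KNOWLEDGE_BASE = {
    "returns": "Final sale items cannot be returned. "
               "Other items may be returned within 30 days.",
    "shipping": "Standard shipping takes 3 to 5 business days.",
}


def tokenize(text):
    """Lowercase the text and split it into a set of words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question, knowledge_base, top_k=1):
    """Rank documents by how many words they share with the question."""
    question_words = tokenize(question)
    ranked = sorted(
        knowledge_base.items(),
        key=lambda item: len(question_words & tokenize(item[1])),
        reverse=True,
    )
    return [text for _, text in ranked[:top_k]]


def build_rag_prompt(question, knowledge_base):
    """Put the retrieved policy text in front of the question."""
    context = "\n".join(retrieve(question, knowledge_base))
    return (
        "Answer the customer using only the policy text below. "
        "If the policy does not cover the question, say so.\n\n"
        f"Policy:\n{context}\n\nCustomer question: {question}"
    )


question = "Can I return a final sale item?"
rag_prompt = build_rag_prompt(question, KNOWLEDGE_BASE)
print(rag_prompt)
```

The prompt that reaches the model now contains the return policy itself, so the answer can be checked against a known document instead of the model's memory.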

Comparing AI Hallucination Mitigation Techniques

This table compares several common methods for reducing AI hallucinations, highlighting how they work, what they're best for, and how complex they are to implement.

| Technique | How It Works | Best For | Complexity |
|---|---|---|---|
| Prompt Engineering | Users provide detailed context, ask for sources, and instruct the AI to admit when it doesn't know an answer. | Everyday users and quick interactions where high accuracy is needed on a case-by-case basis. | Low |
| Retrieval-Augmented Generation (RAG) | Connects the LLM to a trusted, external knowledge base to retrieve facts before generating a response. | Enterprise applications needing answers based on specific, proprietary, or up-to-date information. | Medium |
| Fine-Tuning | Retrains a base model on a smaller, domain-specific dataset to improve its knowledge and reduce factual errors in that area. | Specialized applications (e.g., medical or legal AI) where deep expertise in a narrow field is critical. | High |
| Human-in-the-Loop (HITL) | Integrates human reviewers to verify, edit, or approve AI-generated outputs before they are finalized. | High-stakes scenarios like financial reporting, medical diagnoses, or content moderation where errors are unacceptable. | Varies |

Ultimately, the best strategy often involves a mix of these techniques—strong system design on the back end combined with smart user interaction on the front end.

The Human-in-the-Loop Imperative

At the end of the day, no automated system is perfect. That's why a human-in-the-loop (HITL) review process remains one of the most vital strategies, especially when the stakes are high. The stubborn persistence of hallucinations has pushed this from a "nice-to-have" to a standard practice in many industries.

In fact, the ongoing challenge of building consistently reliable AI has led many organizations to formalize human oversight. A recent study found that 76% of enterprises now incorporate human-in-the-loop review systems to catch and correct hallucinations before they can cause damage in critical fields like finance or healthcare. You can explore more about these industry trends and the reality of AI hallucinations in recent analyses on the topic.

- Actionable Insight: For a marketing team using AI to generate social media copy, an HITL workflow is essential. The AI can generate ten different post variations in seconds (speed), but a human brand manager must give the final approval (judgment). This ensures the content is factually correct, on-brand, and culturally appropriate before it goes live, preventing a reputation-damaging error.
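One way to picture that workflow is as an approval gate: AI drafts go into a queue, and nothing counts as publishable until a named person signs off. The sketch below is a bare-bones illustration under that assumption. The `Draft` and `ReviewQueue` classes and the reviewer name are hypothetical, and a real workflow would add audit logs, rejection handling, and permissions.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Draft:
    text: str
    approved_by: Optional[str] = None  # name of the human reviewer, if any


class ReviewQueue:
    """Holds AI-generated drafts until a human approves them."""

    def __init__(self):
        self.drafts = []

    def submit(self, text):
        """AI-generated drafts always enter the queue unapproved."""
        draft = Draft(text)
        self.drafts.append(draft)
        return draft

    def approve(self, draft, reviewer):
        """Record the human sign-off; an empty reviewer name is rejected."""
        if not reviewer:
            raise ValueError("a named human reviewer is required")
        draft.approved_by = reviewer

    def publishable(self):
        """Only drafts with a recorded human approval may go live."""
        return [d.text for d in self.drafts if d.approved_by]


queue = ReviewQueue()
first = queue.submit("Post variation A (AI-generated)")
second = queue.submit("Post variation B (AI-generated)")
queue.approve(first, reviewer="brand_manager")
print(queue.publishable())
# → ['Post variation A (AI-generated)']
```

The AI still provides the speed, but the only path to "publishable" runs through a human decision.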

How Hallucinations Affect AI Trust and Adoption

An AI hallucination isn't just a technical glitch; it's a direct threat to trust, the most valuable currency in business today. Each time a model confidently invents information, it chips away at public and enterprise confidence. This slow erosion of trust is a major roadblock holding back widespread AI adoption.

This creates a vicious cycle of skepticism. Users grow hesitant to rely on AI for anything important, businesses get cold feet about integrating it into critical workflows, and leaders start questioning if the technology is truly ready for primetime. The problem is no longer about simple accuracy—it’s a fundamental business challenge.

Solving for hallucinations, then, is about more than just tweaking algorithms. It’s about building the rock-solid reliability that allows AI to become a dependable partner in our work. It's a strategic issue that every leader needs to get a handle on.

Amplified Risks in High-Stakes Sectors

In certain industries, the price of a single mistake is simply too high. For sectors like healthcare, finance, and journalism, an AI hallucination can have disastrous consequences, turning a promising tool into a massive liability.

- Practical Example: A medical AI assistant designed to help doctors summarize patient histories could hallucinate a symptom or misreport a lab value. If it incorrectly states a patient has a penicillin allergy when they don't, it could prevent them from receiving a life-saving drug. Conversely, if it misses a stated allergy, the consequences could be fatal.

The real danger is in the AI's authoritative delivery. Because the fabricated information is presented with the same unflinching confidence as verified facts, it can easily mislead even seasoned professionals who are under pressure. This makes human verification an indispensable, non-negotiable final step.

The Business Case for Building Trust

The push to get hallucinations under control isn't just a defensive move. It's a strategic imperative for long-term growth and market leadership. Organizations that figure out how to build trustworthy AI systems will gain a powerful competitive edge.

Reliable AI builds user confidence, which encourages deeper engagement and wider adoption across the company. This, in turn, leads to more effective workflows, sharper data-driven decisions, and a much stronger return on investment. A proven track record of accuracy and transparency quickly becomes a key differentiator in a crowded market.

Getting there requires a proactive approach that blends technology, process, and culture. It means investing in robust validation systems, creating clear oversight protocols, and fostering a culture of critical thinking among all users. Effective oversight is a key component of a successful strategy, and you can explore more on this topic in our guide to AI governance best practices.

Why Hallucination Rates Are a Leadership Issue

The statistics on AI hallucinations should be a major topic of conversation in every boardroom. While models are getting better, their tendency to hallucinate on difficult, specialized queries can still be shockingly high.

For instance, some reports show that even advanced models can hallucinate over 30% of the time when tested against benchmarks full of obscure, domain-specific facts. While this doesn't reflect typical day-to-day use, it's a stark reminder of the technology's inherent limitations.

This presents a clear challenge for any organization deploying AI and forces leaders to ask some tough questions:

- Risk Management: What guardrails do we need to prevent misinformation from impacting key business decisions?

- User Training: How do we teach our teams to critically evaluate AI outputs instead of just accepting them blindly?

- Accountability: Who is ultimately responsible when a hallucination leads to a negative outcome?

Answering these questions is essential for deploying AI responsibly. The path to successful AI integration is paved not just with powerful technology, but with a deep-seated commitment to building and maintaining trust every step of the way.

Building a Framework for Reliable AI

Tackling the AI hallucination problem isn’t about finding a single magic button to turn it off. It's really about building a solid framework to manage the risk from the get-go. While AI models will probably always have the ability to invent things, the right strategy lets us use their strengths while keeping their weaknesses in check.

This framework boils down to three core pillars: starting with pristine data, enforcing strict validation, and promoting a culture of critical thinking among users. When you build these ideas into your AI strategy, you can turn a potentially flaky tool into a seriously powerful and trustworthy asset. It’s a shift from just accepting what the AI says to taking empowered responsibility for its output.

The Pillars of Trustworthy AI

Creating AI systems you can actually depend on requires a layered approach that gets to the root of the problem. It all starts with the data the model is trained on and carries all the way through to how people interact with its answers.

Here are the foundational pillars:

- Pristine Data Quality: This is the big one. If a model learns from data that's inaccurate, biased, or incomplete, its outputs will be just as flawed. Clean, well-organized, and relevant datasets are the absolute bedrock of reliable AI.

- Robust Validation Processes: Never trust, always verify. For anything important, having automated checks and a human in the loop for a final review is non-negotiable. This adds a critical layer of scrutiny before an AI-generated answer is treated as fact.

- A Culture of Critical Thinking: The tech itself is only half the battle. Organizations have to train their teams to approach AI with a healthy dose of skepticism. That means teaching people to question the outputs, double-check the claims, and understand where the model's limits are.
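As one small example of the "automated checks" mentioned in the validation pillar, the sketch below flags any number in an AI answer that does not appear in the source text it was supposed to summarize. It is a narrow heuristic, not a full fact-checker: it will miss wrong names, dates written in words, and numbers that are correct but reformatted. The function name `unsupported_numbers` and the sample figures are invented for illustration.

```python
import re

# Matches integers and simple decimals such as 3 or 4.2.
NUMBER = re.compile(r"\d+(?:\.\d+)?")


def unsupported_numbers(answer, source):
    """Return numbers that appear in the answer but nowhere in the source text."""
    source_numbers = set(NUMBER.findall(source))
    return [n for n in NUMBER.findall(answer) if n not in source_numbers]


# Invented sample data: the answer misstates the Q3 figure.
source_text = "Revenue was 4.2 million in Q3, up from 3.9 million in Q2."
ai_answer = "Revenue rose to 4.5 million in Q3 from 3.9 million."

flagged = unsupported_numbers(ai_answer, source_text)
print(flagged)
# → ['4.5']
```

Anything the check flags goes to a human reviewer. An empty result means only that no unsupported numbers were found, not that the answer is correct.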

Taking Empowered Responsibility

Ultimately, managing the risk of an AI hallucination is a shared responsibility. Developers need to build systems with safeguards baked in, and users need to become smart operators who know how to ask the right questions and verify the answers. The goal is to be an active partner with the technology, not just a passive consumer of its responses.

Adopting AI successfully means embracing a mindset of continuous verification. We must treat AI not as an infallible oracle but as an incredibly powerful, yet occasionally flawed, assistant that requires intelligent guidance and oversight.

This proactive approach ensures we can tap into AI's incredible potential without getting tripped up by its inherent weaknesses. This becomes even more critical as AI systems gain more autonomy. In our look at Agentic AI systems, for instance, we explore how these next-gen models operate more independently, which makes a strong reliability framework absolutely essential. By putting these practices in place today, we’re setting ourselves up for a future where AI is a powerful and dependable engine for innovation.

Your Questions on AI Hallucination, Answered

As AI hallucination steps into the spotlight, a few key questions pop up again and again. Let's cut through the noise and give you some straight answers to help you navigate this tricky—but manageable—part of AI.

Can AI Hallucinations Be Completely Eliminated?

Right now, the short answer is no. Getting rid of AI hallucinations entirely is probably not in the cards. That’s because Large Language Models are, at their core, probabilistic. They're built to predict the next most likely word, not to check facts against a database. Because of this design, there's always going to be a chance they'll generate something that sounds right but is completely wrong.

However, we can absolutely slash the frequency and impact of these fabrications. Techniques like Retrieval-Augmented Generation (RAG), fine-tuning models on high-quality, specialized data, and keeping a human in the loop are all incredibly effective. The goal isn't total eradication but smart management and mitigation.

Are Some AI Models More Prone to Hallucination?

Yes, absolutely. Hallucination rates can vary wildly between different AI models and even between updated versions of the same one. As a general rule, models trained on larger, cleaner, and more diverse datasets tend to hallucinate less on everyday questions.

- Practical Example: An AI model fine-tuned specifically to summarize legal documents is far less likely to invent fake case law than a general-purpose model built for creative writing. Its specialized training keeps its outputs grounded in a specific reality, reducing the odds of it going off-script.

Here's the paradox, though: sometimes the newer, more powerful models can hallucinate with even more confidence when you push them into obscure territory. A model's architecture, its specific training, and what you're asking it to do all play a huge role in its tendency to make things up.

What Is the Best Way to Avoid Being Misled by an AI?

The single most effective mindset to adopt is "trust, but verify." Never take a factual claim from an AI at face value, especially when the stakes are high. Always double-check the information with reliable, independent sources.

Here are a few practical tips to keep in mind:

- Ask for sources: Tell the AI to cite where it got its information. Then, actually go and check those sources. Make sure they're real and that they actually back up what the AI said.

- Give it context: Don't ask open-ended questions into the void. Ground the AI by giving it the source material to work with. Instead of asking, "What are the highlights of the latest earnings report?" upload the report and ask, "Summarize the key points from this document." We dive deeper into this and other strategies in our guide on AI agents.

- Use your judgment: Treat the AI like a brilliant but occasionally unreliable assistant, not an all-knowing oracle. That simple shift in perspective is your best defense against a convincing AI hallucination.

At DATA-NIZANT, we provide the in-depth analysis you need to understand and navigate the complexities of modern AI. Explore our expert insights and stay ahead of the curve at https://www.datanizant.com.