Vectorless RAG Explained: Beyond Embeddings and Vector Databases

Artificial intelligence practitioners often assume that Retrieval Augmented Generation (RAG) automatically means chunking documents, embedding them, and storing them in a vector database. That assumption is understandable but technically incomplete. RAG fundamentally means augmenting a language model with retrieved external knowledge before generating an answer. The retrieval mechanism does not have to rely on embeddings or vector similarity.

Recently, a new family of approaches often referred to as Vectorless RAG has gained attention. These systems retrieve information without relying on dense embeddings or vector databases. Instead, they rely on document structure, lexical search, or reasoning-driven traversal. This article explains what vectorless RAG actually means, why it is being explored, and when it makes sense compared to traditional vector-based RAG.

What Is Retrieval Augmented Generation (RAG)?

Retrieval Augmented Generation was introduced in the 2020 research paper “Retrieval-Augmented Generation for Knowledge-Intensive NLP Tasks” by Patrick Lewis et al. at Facebook AI Research.

The idea is simple. Instead of relying entirely on the parameters of a language model, the system retrieves relevant external documents and provides them to the model as context before generating an answer. The typical pipeline looks like this:

- Documents are split into chunks

- Each chunk is converted into an embedding

- Embeddings are stored in a vector database

- At query time the system retrieves the most similar chunks

- The language model generates an answer grounded in the retrieved text
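The five steps above can be sketched in a few dozen lines of dependency-free Python. This is a minimal illustration under loud assumptions: `embed` is a toy hashed bag-of-words function standing in for a real embedding model (it captures word overlap, not meaning), `InMemoryVectorStore` is a hypothetical brute-force stand-in for a vector database, and generation is reduced to building the grounded prompt the model would receive.

```python
import math
import re
import zlib

DIM = 1024  # size of the toy embedding vectors (arbitrary choice)


def chunk_text(text: str, size: int = 12) -> list[str]:
    """Step 1: split text into fixed-size chunks of `size` words."""
    words = text.split()
    return [" ".join(words[i:i + size]) for i in range(0, len(words), size)]


def embed(text: str) -> list[float]:
    """Step 2: toy embedding via a hashed bag of words, L2-normalised.
    Stand-in for a real embedding model; it does not capture meaning."""
    vec = [0.0] * DIM
    for token in re.findall(r"[a-z0-9]+", text.lower()):
        vec[zlib.crc32(token.encode()) % DIM] += 1.0
    norm = math.sqrt(sum(v * v for v in vec)) or 1.0
    return [v / norm for v in vec]


def cosine(a: list[float], b: list[float]) -> float:
    """Vectors are already unit length, so the dot product is the cosine similarity."""
    return sum(x * y for x, y in zip(a, b))


class InMemoryVectorStore:
    """Step 3: hypothetical stand-in for a vector database (brute-force search)."""

    def __init__(self) -> None:
        self.items: list[tuple[str, list[float]]] = []

    def add(self, chunk: str) -> None:
        self.items.append((chunk, embed(chunk)))

    def search(self, query: str, k: int = 2) -> list[str]:
        """Step 4: return the k chunks most similar to the query."""
        q = embed(query)
        ranked = sorted(self.items, key=lambda item: cosine(q, item[1]), reverse=True)
        return [chunk for chunk, _ in ranked[:k]]


def build_prompt(question: str, context: list[str]) -> str:
    """Step 5 (input side): the grounded prompt a language model would receive."""
    joined = "\n".join(f"- {c}" for c in context)
    return f"Answer using only this context:\n{joined}\n\nQuestion: {question}"


doc = ("Quarterly revenue grew eight percent driven by cloud services. "
       "Operating costs rose because of new data centers. "
       "The company expects revenue growth to continue next year.")
store = InMemoryVectorStore()
chunks = chunk_text(doc)
for chunk in chunks:
    store.add(chunk)
print(build_prompt("How fast is revenue growing?", store.search("revenue growth", k=1)))
```

In a real system, `embed` would call an embedding model and `InMemoryVectorStore` would be replaced by an approximate nearest-neighbour index, but the shape of the pipeline stays the same.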

This architecture became the dominant RAG implementation pattern, especially with the rise of vector databases and similarity-search libraries such as Pinecone, Weaviate, Milvus, and FAISS.

However, vector search is only one possible retrieval strategy.

Why Traditional Vector RAG Became Popular

Vector RAG became the default approach because it solves several difficult problems. Semantic embeddings allow systems to retrieve documents even when the wording of the query and the document are different. For example:

Query: “How do I reset my password?”

Document text: “Steps for credential recovery”

A vector embedding can still match these semantically similar phrases. Vector search also scales well across very large corpora of documents. Because of these advantages, vector-based retrieval quickly became the backbone of many production AI systems. However, this approach also introduces limitations.
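The password example can be made concrete. A minimal sketch, assuming a plain keyword index that matches lowercase word tokens: the query and the document share no terms at all, so lexical matching alone would miss the document. The `SYNONYMS` table is a hypothetical hand-written mapping, shown only to illustrate the kind of association an embedding model learns without being told.

```python
import re


def tokens(text: str) -> set[str]:
    """Lowercase word tokens, as a simple keyword index would see them."""
    return set(re.findall(r"[a-z]+", text.lower()))


query = "How do I reset my password?"
document = "Steps for credential recovery"

# Plain keyword matching finds no shared terms, so the document is not retrieved.
shared = tokens(query) & tokens(document)
print(shared)  # set()

# Hypothetical synonym table: the kind of mapping an embedding model learns implicitly.
SYNONYMS = {"password": {"credential"}, "reset": {"recovery"}}
expanded = tokens(query) | {s for t in tokens(query) for s in SYNONYMS.get(t, set())}
print(sorted(expanded & tokens(document)))  # ['credential', 'recovery']
```

Maintaining such tables by hand does not scale, which is a large part of why embeddings became attractive.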

The Limitations of Vector-Based RAG

Vector RAG pipelines often rely on fixed-size chunking and on similarity scores that are hard to inspect. This creates several problems.

Chunk boundaries can destroy context

Large documents such as financial filings, legal agreements, or technical manuals contain carefully organized sections. When those documents are broken into arbitrary chunks, critical context may be lost. For example, a chunk may contain “…risk disclosures related to international operations…”, while the heading that explains what those disclosures refer to sits in a different chunk.
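A small sketch shows how the heading gets separated. It assumes word-count chunking (real pipelines often count tokens instead) and a hypothetical risk-factor passage; `heading_aware_chunks` illustrates one common mitigation, repeating the section heading in every chunk, rather than any specific library's behavior.

```python
def fixed_chunks(text: str, size: int) -> list[str]:
    """Split text into chunks of `size` words, ignoring document structure."""
    words = text.split()
    return [" ".join(words[i:i + size]) for i in range(0, len(words), size)]


def heading_aware_chunks(heading: str, body: str, size: int) -> list[str]:
    """Chunk only the body and prefix every chunk with its section heading."""
    return [f"{heading}: {chunk}" for chunk in fixed_chunks(body, size)]


heading = "Risk Factors"
body = ("Currency fluctuations may reduce reported earnings. "
        "Regulatory changes abroad may delay product launches. "
        "These risk disclosures related to international operations apply to all segments.")

# Naive chunking: the heading lands in the first chunk only.
naive = fixed_chunks(heading + " " + body, size=10)
print(naive[-1])  # international operations apply to all segments.

# Structure-aware variant: every chunk carries its section context.
structured = heading_aware_chunks(heading, body, size=10)
print(structured[-1])  # Risk Factors: apply to all segments.
```

The naive last chunk mentions international operations but no longer says it is a risk factor; the prefixed version keeps that context at the cost of a few repeated tokens per chunk.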

Semantic similarity is not always relevance

Vector similarity retrieves text that is semantically similar to the query, but similarity does not always equal relevance. For example, a financial question about “deferred tax assets in the risk disclosure section” may retrieve a chunk about taxes from a different section simply because the wording is similar.

Lack of interpretability

Vector retrieval can be difficult to explain. Why was this chunk retrieved? Because it was close to the query in embedding space. For enterprise use cases that require auditability, that explanation is often insufficient.

What Is Vectorless RAG?

Vectorless RAG refers to retrieval pipelines that do not rely on embeddings or vector databases.

Instead, they retrieve information using alternative strategies such as:

- lexical search (BM25 or full-text search)

- document structure and hierarchy

- metadata filtering

- symbolic queries

- LLM-guided search through document trees

In other words, the system retrieves knowledge without performing vector similarity search.

The generation stage remains the same: the retrieved content is provided to the language model as context.
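Lexical search and metadata filtering, the first and third strategies in the list, can be combined in one small index. The sketch below is a from-scratch Okapi BM25 scorer, assuming records of the form `(section, text)` and the commonly used defaults `k1 = 1.5` and `b = 0.75`; `BM25Index` is a hypothetical name, not a particular library's API. The example corpus revisits the deferred-tax question from the limitations section: unfiltered, the Income Taxes passage ranks first, while restricting the search to the Risk Factors section returns the passage the question actually asked about.

```python
import math
import re
from collections import Counter
from typing import Optional


def tokenize(text: str) -> list[str]:
    return re.findall(r"[a-z0-9]+", text.lower())


class BM25Index:
    """Okapi BM25 over (section, text) records, with optional metadata filtering."""

    def __init__(self, records: list[tuple[str, str]], k1: float = 1.5, b: float = 0.75) -> None:
        self.records = records
        self.k1, self.b = k1, b
        self.docs = [tokenize(text) for _, text in records]
        self.tfs = [Counter(doc) for doc in self.docs]
        self.avgdl = sum(len(doc) for doc in self.docs) / len(self.docs)
        n = len(self.docs)
        df = Counter(term for doc in self.docs for term in set(doc))
        # Lucene-style IDF: the +1 inside the log keeps weights positive for common terms.
        self.idf = {t: math.log(1 + (n - f + 0.5) / (f + 0.5)) for t, f in df.items()}

    def score(self, query: str, i: int) -> float:
        tf, dl = self.tfs[i], len(self.docs[i])
        # Length normalisation: longer-than-average records are penalised when b > 0.
        norm = self.k1 * (1 - self.b + self.b * dl / self.avgdl)
        return sum(
            self.idf[t] * tf[t] * (self.k1 + 1) / (tf[t] + norm)
            for t in tokenize(query) if t in tf
        )

    def search(self, query: str, section: Optional[str] = None, k: int = 5) -> list[int]:
        """Indices of the top-k records with a positive score, optionally within one section."""
        candidates = [i for i, (sec, _) in enumerate(self.records) if section is None or sec == section]
        scored = sorted(((self.score(query, i), i) for i in candidates), reverse=True)
        return [i for s, i in scored[:k] if s > 0]


records = [
    ("Risk Factors", "Changes in tax law could reduce the value of our deferred tax assets."),
    ("Income Taxes", "Deferred tax assets and liabilities are measured using enacted tax rates."),
    ("Liquidity", "Cash and equivalents were sufficient to fund operations."),
]
index = BM25Index(records)
print(index.search("deferred tax assets"))                          # [1, 0]
print(index.search("deferred tax assets", section="Risk Factors"))  # [0]
```

Every result can be explained term by term, which is one reason lexical retrieval is easier to audit than embedding distance.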

A Simple Example of Vectorless RAG

Consider a 200-page financial report.

A vector RAG pipeline might:

- Split the document into 1,000 chunks

- Embed each chunk

- Search the embeddings

A vectorless pipeline might instead:

- Parse the document structure

- Build a hierarchy of sections and subsections

- Allow the model to traverse that hierarchy

This approach behaves like a human analyst navigating a document, rather than a search engine matching semantically similar chunks.
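The first two steps of the vectorless pipeline, parsing the structure and building a hierarchy, can be sketched with a stack. This assumes the report has already been converted to text with `#`-style heading markers, which is a hypothetical input format; extracting reliable headings from a real PDF filing is usually the harder part and is not shown.

```python
from dataclasses import dataclass, field


@dataclass
class Section:
    title: str
    level: int  # 0 for the root, 1 for "#", 2 for "##", and so on
    text: list[str] = field(default_factory=list)
    children: list["Section"] = field(default_factory=list)


def parse_outline(doc: str) -> Section:
    """Build a section tree from '#'-marked headings; body lines attach to the open section."""
    root = Section("ROOT", 0)
    stack = [root]
    for line in doc.splitlines():
        stripped = line.strip()
        if not stripped:
            continue
        if stripped.startswith("#"):
            level = len(stripped) - len(stripped.lstrip("#"))
            node = Section(stripped[level:].strip(), level)
            # Close sections at the same or deeper level before attaching the new one.
            while stack[-1].level >= level:
                stack.pop()
            stack[-1].children.append(node)
            stack.append(node)
        else:
            stack[-1].text.append(stripped)
    return root


def outline(node: Section, depth: int = 0) -> list[str]:
    """Render the tree as an indented table of contents."""
    lines = [] if node.level == 0 else ["  " * (depth - 1) + node.title]
    for child in node.children:
        lines.extend(outline(child, depth + 1))
    return lines


report = """
# Management Discussion
## Revenue
Revenue grew in all regions.
## Operating Costs
Costs rose with data center spending.
# Risk Factors
## International Operations
Currency swings may affect results.
"""
tree = parse_outline(report)
print("\n".join(outline(tree)))
```

The resulting table of contents is what a model would be shown when deciding which branch to open next.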

Research on Embedding-Free Retrieval

Several recent projects explore this idea.

ELITE: Embedding-Less Retrieval with Iterative Text Exploration

The ELITE framework proposes a retrieval method where the language model iteratively narrows down the search space instead of relying on embedding similarity.

The model explores the document structure step by step until the relevant section is identified.

Resources: the research paper “ELITE: Embedding-Less Retrieval with Iterative Text Exploration” and its GitHub repository.

PageIndex

PageIndex is a system designed for vectorless retrieval over structured documents.

It builds a hierarchical representation of a document similar to a table of contents and allows an LLM to navigate the tree to locate relevant sections.
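The navigation idea can be sketched as a loop that repeatedly picks one child node until it reaches a leaf. In a system like PageIndex that choice is made by an LLM; here `keyword_chooser` is a hypothetical stand-in that counts shared words, and the tree is plain dictionaries. This is not PageIndex's API. The recorded `path` is what makes the retrieval explainable: it lists exactly which sections were chosen on the way to the answer.

```python
import re
from typing import Callable


def words(text: str) -> set[str]:
    return set(re.findall(r"[a-z]+", text.lower()))


def keyword_chooser(query: str, children: list[dict]) -> int:
    """Hypothetical stand-in for an LLM call: pick the child whose title and
    summary share the most words with the query (the first child wins ties)."""
    q = words(query)
    scores = [len(q & words(c["title"] + " " + c.get("summary", ""))) for c in children]
    return max(range(len(children)), key=lambda i: scores[i])


def navigate(root: dict, query: str,
             choose: Callable[[str, list[dict]], int] = keyword_chooser) -> tuple[dict, list[str]]:
    """Descend from the root to a leaf, recording the titles of visited sections."""
    node, path = root, [root["title"]]
    while node.get("children"):
        node = node["children"][choose(query, node["children"])]
        path.append(node["title"])
    return node, path


report = {"title": "Annual Report", "children": [
    {"title": "Financial Statements", "summary": "balance sheet income statement cash flow", "children": [
        {"title": "Balance Sheet", "summary": "assets liabilities equity", "text": "Total assets rose."},
        {"title": "Cash Flow", "summary": "operating investing financing cash", "text": "Cash grew."}]},
    {"title": "Risk Factors", "summary": "risks international operations currency regulation", "children": [
        {"title": "International Operations", "summary": "currency exchange foreign regulation",
         "text": "Currency swings may reduce reported revenue."},
        {"title": "Cybersecurity", "summary": "data breaches security", "text": "A breach could disrupt operations."}]},
]}

leaf, path = navigate(report, "What currency risks affect international operations?")
print(path)          # ['Annual Report', 'Risk Factors', 'International Operations']
print(leaf["text"])  # Currency swings may reduce reported revenue.
```

Swapping in a real model only changes `choose`: it would receive the query plus the child titles and summaries and return the index of the branch to open.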

TreeSearch

TreeSearch is another implementation that combines lexical search with hierarchical traversal of document structures.

When Vectorless RAG Works Best

Vectorless RAG is particularly useful for structured documents. Examples include:

- financial filings

- legal contracts

- policy manuals

- technical specifications

- compliance documents

- research papers

These documents contain natural structure that can be leveraged for retrieval. Benefits include:

- better preservation of context

- more interpretable retrieval paths

- improved reasoning over long documents

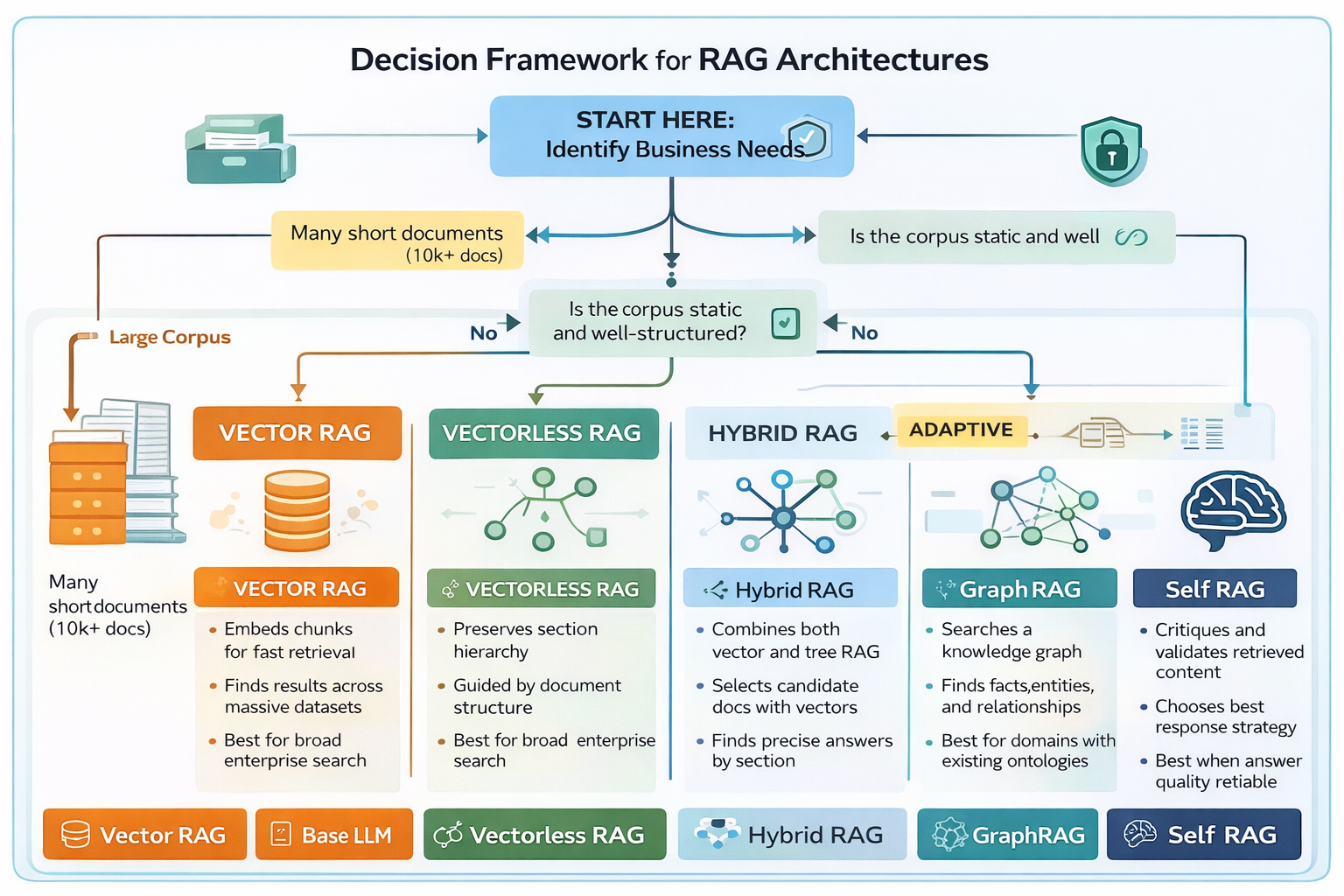

When Vector RAG Is Still Better

Vector search remains extremely powerful for other scenarios. Vector RAG works best when:

- the corpus contains many small documents

- documents lack strong structure

- queries require semantic matching

- large-scale document search is required

For example:

- customer support knowledge bases

- chat logs

- product documentation

- heterogeneous enterprise datasets

Vector search excels at finding relevant documents quickly across large collections.

Hybrid RAG: The Real Production Architecture

Many production AI systems combine multiple retrieval strategies rather than relying on a single approach. A common architecture is:

- Vector search identifies candidate documents

- Structure-aware retrieval identifies the precise section

- The language model generates the final answer

This hybrid approach combines the strengths of both methods.

Vector search provides high recall, while structured retrieval provides precision and interpretability.
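The two-stage architecture can be sketched as follows. Both stages are simplified stand-ins and should be read as assumptions: stage one ranks whole documents with a bag-of-words cosine in place of a real embedding model, and stage two picks the single section whose title and text best overlap the query, returning the document and section name alongside the text so the answer can cite where it came from. The corpus and function names are hypothetical.

```python
import math
import re
from collections import Counter


def bow(text: str) -> Counter:
    return Counter(re.findall(r"[a-z]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a)
    na = math.sqrt(sum(v * v for v in a.values()))
    nb = math.sqrt(sum(v * v for v in b.values()))
    return dot / (na * nb) if na and nb else 0.0


def stage1_candidates(query: str, corpus: list[dict], k: int = 2) -> list[dict]:
    """Recall step (stand-in for vector search): rank whole documents by similarity."""
    q = bow(query)
    return sorted(corpus, key=lambda d: cosine(q, bow(" ".join(d["sections"].values()))),
                  reverse=True)[:k]


def stage2_section(query: str, docs: list[dict]) -> tuple[str, str, str]:
    """Precision step: choose one section inside the candidates, keeping its provenance."""
    q = set(bow(query))
    sections = [(d["name"], title, text) for d in docs for title, text in d["sections"].items()]
    return max(sections, key=lambda s: len(q & set(bow(s[1] + " " + s[2]))))


corpus = [
    {"name": "10-K 2023", "sections": {
        "Risk Factors": "Currency fluctuations may reduce international revenue.",
        "Income Taxes": "Deferred tax assets are measured at enacted rates."}},
    {"name": "Support FAQ", "sections": {"Passwords": "Use the recovery link to reset a password."}},
    {"name": "Employee Handbook", "sections": {"Leave": "Employees accrue vacation monthly."}},
]
query = "How are deferred tax assets measured?"
candidates = stage1_candidates(query, corpus)
print(stage2_section(query, candidates))
# ('10-K 2023', 'Income Taxes', 'Deferred tax assets are measured at enacted rates.')
```

The returned tuple is what would be placed in the prompt, so the final answer can name both the document and the section it relied on.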

Choosing the Right RAG Architecture

Selecting the right approach depends on several factors.

| Scenario | Recommended Architecture |
|---|---|
| Large corpus of short documents | Vector RAG |
| Highly structured long documents | Vectorless or Tree RAG |
| Enterprise search across many systems | Hybrid RAG |
| Keyword-driven retrieval | Sparse retrieval |

The key insight is that retrieval strategy should match the structure of the knowledge base.
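The table can be encoded as a simple rule-based selector. The profile fields and the 20-page threshold are hypothetical illustrations, not established cutoffs, and the rule order (keyword queries first, then multi-system corpora) is one reasonable reading of the table rather than a definitive policy.

```python
from dataclasses import dataclass


@dataclass
class CorpusProfile:
    """Hypothetical summary of a knowledge base used to pick a retrieval strategy."""
    avg_pages_per_doc: float
    has_strong_structure: bool
    num_source_systems: int


def recommend_architecture(profile: CorpusProfile, keyword_driven: bool = False) -> str:
    """Rule-based reading of the scenario table; thresholds are illustrative assumptions."""
    if keyword_driven:
        return "Sparse retrieval"
    if profile.num_source_systems > 1:
        return "Hybrid RAG"
    if profile.has_strong_structure and profile.avg_pages_per_doc >= 20:
        return "Vectorless or Tree RAG"
    return "Vector RAG"


print(recommend_architecture(CorpusProfile(200, True, 1)))  # Vectorless or Tree RAG
print(recommend_architecture(CorpusProfile(1, False, 1)))   # Vector RAG
```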

The Future of Retrieval Architectures

GraphRAG is one example of where retrieval is heading: it retrieves information based on relationships between entities rather than similarity between text chunks.
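As a toy illustration of retrieval by relationships, the sketch below stores hypothetical facts as (subject, relation, object) triples and collects every fact within a fixed number of hops of the entities named in a question. It is a breadth-first search over an entity graph, not a full GraphRAG system, which typically also uses a language model to extract entities and relations from the source text.

```python
from collections import deque

Triple = tuple[str, str, str]

# Hypothetical facts; a real system would extract these from documents.
TRIPLES: list[Triple] = [
    ("Acme Corp", "subsidiary_of", "Globex"),
    ("Globex", "headquartered_in", "Germany"),
    ("Acme Corp", "supplier", "Initech"),
    ("Initech", "located_in", "Brazil"),
]


def build_graph(triples: list[Triple]) -> dict[str, list[tuple[str, Triple]]]:
    """Undirected adjacency list: each entity maps to (neighbour, supporting fact)."""
    graph: dict[str, list[tuple[str, Triple]]] = {}
    for triple in triples:
        subject, _, obj = triple
        graph.setdefault(subject, []).append((obj, triple))
        graph.setdefault(obj, []).append((subject, triple))
    return graph


def seed_entities(question: str, graph: dict) -> list[str]:
    """Naive entity linking by substring match (an assumption for this sketch)."""
    return [e for e in graph if e.lower() in question.lower()]


def retrieve_facts(graph: dict, seeds: list[str], max_hops: int = 2) -> list[Triple]:
    """Breadth-first expansion from the seeds, collecting facts within max_hops."""
    facts: dict[Triple, None] = {}  # dict used as an insertion-ordered set
    seen = set(seeds)
    queue = deque((entity, 0) for entity in seeds)
    while queue:
        entity, depth = queue.popleft()
        if depth == max_hops:
            continue
        for neighbour, triple in graph.get(entity, []):
            facts[triple] = None
            if neighbour not in seen:
                seen.add(neighbour)
                queue.append((neighbour, depth + 1))
    return list(facts)


graph = build_graph(TRIPLES)
question = "Where is the parent company of Acme Corp headquartered?"
for fact in retrieve_facts(graph, seed_entities(question, graph)):
    print(fact)
```

Answering this question needs two linked facts (Acme Corp is a subsidiary of Globex, and Globex is headquartered in Germany), which a relationship walk finds even if no single text chunk states both.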

The debate between vector RAG and vectorless RAG should not be viewed as a competition. Instead, it reflects a broader evolution in how AI systems retrieve knowledge. The retrieval methods now available include:

- dense vector retrieval

- sparse lexical search

- graph traversal

- structured document navigation

- agent-driven retrieval pipelines

The next generation of retrieval systems will dynamically combine these strategies depending on the query, corpus structure, and reasoning requirements.

As language models become better at reasoning, retrieval systems will increasingly resemble intelligent navigation systems rather than static search engines.

Final Thoughts

Vectorless RAG reminds us that retrieval is not synonymous with vector search. Vector databases solved many problems in semantic retrieval, but they are not the only way to augment language models with external knowledge. In domains where document structure matters, alternative retrieval methods can sometimes produce more reliable and interpretable results. The most effective systems will not choose between vector and vectorless approaches. They will combine them.

References

- Lewis, Patrick et al. Retrieval-Augmented Generation for Knowledge-Intensive NLP Tasks

- Karpukhin, Vladimir et al. Dense Passage Retrieval for Open-Domain Question Answering

- ELITE: Embedding-Less Retrieval with Iterative Text Exploration

- FinanceBench Benchmark

- PageIndex Repository

- TreeSearch Repository
