Imagine getting a life-saving diagnosis from an expert doctor who, when you ask why, just shrugs and says, "I can't explain my reasoning, you just have to trust me." You'd be looking for a second opinion, right? That's the exact 'black box' problem we often face in modern AI.

True interpretability in machine learning isn't just about knowing what a model predicts; it's the critical practice of understanding why and how it gets there.

Why Interpretability in Machine Learning Matters Now

As machine learning models weave themselves deeper into our daily lives—from our phone apps to our financial institutions—their complexity has exploded. We’ve graduated from simple, transparent algorithms to incredibly powerful deep learning systems that predict outcomes with what seems like uncanny accuracy.

But this power often comes at a steep price: transparency. This creates the "black box" models we hear so much about.

This isn't just some academic thought experiment. When an AI model is making a high-stakes decision—like approving a mortgage, flagging a tumor on a medical scan, or steering an autonomous car—being unable to explain its logic is a massive liability. The demand for clarity is no longer a 'nice-to-have' for data scientists; it's a fundamental requirement for deploying AI responsibly.

The Growing Demand for Transparency

The push to pry open the black box is coming from all sides. Regulators are drafting laws that mandate explainability, especially in sensitive fields like finance and healthcare. Customers are, quite rightly, demanding to know the logic behind automated decisions that impact their lives. And internally, development teams need interpretability to debug their models, spot hidden biases, and genuinely improve them.

This isn't just anecdotal. A 2019 global survey showed that 84% of AI practitioners already considered interpretability 'important' or 'very important' to their work. Beyond just staying compliant, there's a real business case. A 2021 report found that companies embracing interpretable AI can see their return on investment jump by as much as 30%, driven by increased trust and better error detection. You can explore a deeper history of deep learning and its challenges to see how we got here.

Interpretability is the bridge between a model's complex mathematical function and our human understanding. Without it, even the most accurate model is just a sophisticated guess, limiting our ability to trust, troubleshoot, and truly innovate with AI.

Ultimately, focusing on interpretability in machine learning helps us solve several critical problems:

- Building Trust: It builds confidence with users, stakeholders, and regulators by making AI systems accountable. When a model can explain itself, its conclusions are far easier to accept.

- Ensuring Fairness: Interpretability tools are our best defense against hidden biases. They can shine a light on skewed logic in the data or the model, helping prevent discriminatory outcomes in hiring, lending, and beyond.

- Debugging and Improving Models: When we understand why a model made a mistake, we can fix the root cause instead of just patching the symptoms. This is how we build truly robust and reliable systems.

- Meeting Regulatory Compliance: In a growing number of industries, being able to provide a clear, human-readable explanation for an automated decision is becoming a legal requirement.

As algorithms continue to shape our world, mastering interpretability isn't optional anymore. It's the key to building AI systems that are not only powerful but also safe, fair, and worthy of our trust.

The Journey from Simple Models to Complex Black Boxes

To really get why interpretability in machine learning is such a hot topic today, you have to look at how we got here. The field didn't start with mysterious, impenetrable algorithms. It was actually the opposite. Early on, understanding how a model worked was just as important as what it predicted.

This story begins with models that were inherently simple, what you might call "glass boxes."

The Era of Glass Box Models

Back in the early days of AI, from the 1950s through the 1980s, the most common methods were pretty straightforward. Think linear regression, logistic regression, and decision trees. These models were the workhorses of the time. A decision tree, for example, is basically a flowchart. You can follow every single decision it makes, from the root all the way down to a final prediction. Its logic is completely out in the open.

This clarity wasn't an accident; it was a core feature. The goal was often to pull knowledge out of data, and these simpler models were fantastic for that. They gave researchers clear rules and insights they could easily check and understand. But their simplicity was also their biggest weakness—they just couldn't handle the messy, complex patterns buried in real-world data.

The Rise of the Black Box

The game changed when neural networks made a major comeback, thanks to the rediscovery of an algorithm called backpropagation in the 1980s. This breakthrough suddenly made it possible to train much deeper, more intricate networks, paving the way for a huge shift in the field. As machine learning moved from being driven by expert knowledge to being driven by data, a fundamental trade-off came into focus: accuracy versus interpretability.

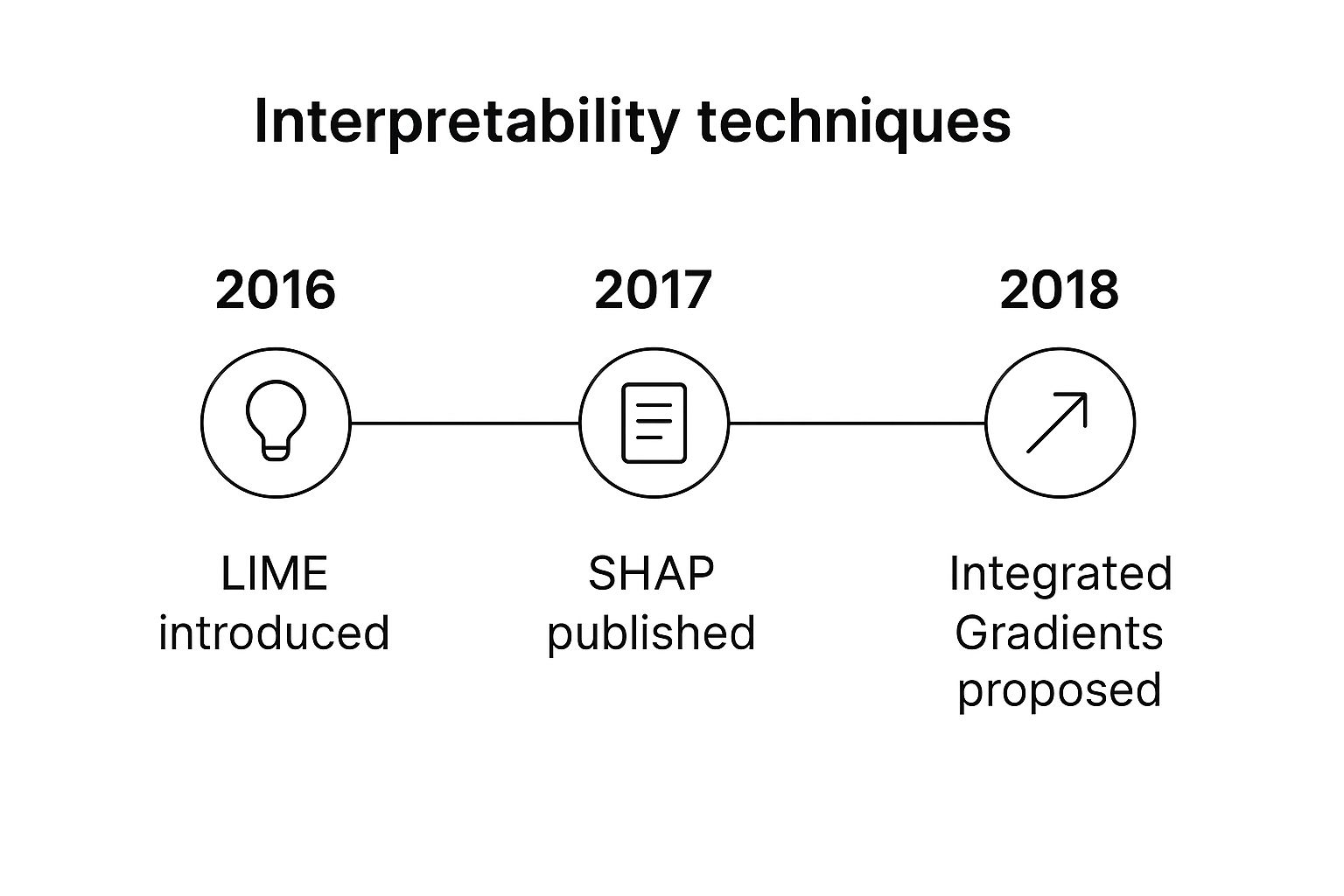

The infographic below shows how the need for our modern interpretability tools grew right alongside the complexity of our models.

This timeline makes it clear that powerful techniques like LIME and SHAP are fairly new inventions, created specifically to peel back the layers of today's complex AI. You can see how the related fields of AI, machine learning, and deep learning took different paths, with some becoming far more complex over time.

This tension between power and clarity exploded with the deep learning revolution of the 2010s. Models ballooned to include millions, and now billions, of parameters. They started achieving superhuman results in tasks like image recognition and language translation, but their internal logic became almost completely indecipherable.

These incredibly powerful systems became known as "black boxes." We could see the data going in and the prediction coming out, but the complex web of calculations happening inside was a total mystery to human observers.

The very thing that made them so good—countless layers of interconnected nodes, all tweaking their own internal weights during training—also made them a tangled mess from an explanatory standpoint.

Every jump in predictive power seemed to take us further from the simple, understandable models of the past. The demand for interpretability isn't just a new fad; it's a direct and necessary reaction to this journey. It's a quest to bring understanding and trust back to the incredibly powerful AI we've built.

Alright, let's open up the toolbox. We've talked about the "why" behind interpretability, so now it's time for the "how." Making a machine learning model understandable isn't about finding a single magic key; it's about knowing which tool to grab for the specific job at hand.

Think of it this way: you could build a simple piece of furniture with exposed joinery, where you can see exactly how every piece fits together. That’s one approach. Or, you could build a complex, seamless piece and then use an X-ray to see its internal structure. Both get you to an answer, just in different ways. In ML, these two approaches are called intrinsic and post-hoc methods.

Intrinsic Methods: The "Glass Box" Approach

Intrinsic methods, often called "glass box" or "white box" models, are transparent by their very nature. You don't need any special tools to understand them because the model is the explanation. Their internal logic is right there on the surface.

Some of the most common glass box models include:

- Linear Regression: This is a classic. It works by assigning a clear, understandable weight to each feature. You can literally say, "For every one-unit increase in X, the prediction changes by Y."

- Logistic Regression: A close cousin to linear regression, but for classification tasks. It calculates the probability of an outcome, making it easy to see which factors push a decision one way or the other.

- Decision Trees: As we've covered, a decision tree is just a flowchart. You can follow the path for any single prediction and see the exact if-then-else rules that led to the final answer.

The big win with these models is their sheer simplicity and directness. The trade-off? They often can't capture the kind of complex, non-linear relationships that the big, powerful "black box" models are so good at.

Post-Hoc Methods: Peeking Inside the Black Box

This is where things get really interesting. What happens when you absolutely need the predictive muscle of a deep neural network, but you also have to explain its decisions? That's where post-hoc methods come in.

These are techniques you apply after a model has already been trained. They act like investigative tools, probing the black box from the outside to figure out its internal logic without actually changing the model itself.

Key Insight: Post-hoc methods don't change the complex model. Instead, they provide a simplified, human-understandable approximation of its behavior for a specific prediction or for the model as a whole.

This approach gives you the best of both worlds: you can use the highest-performing model for the task while still meeting the critical need for interpretability in machine learning. Two of the most important and widely used post-hoc techniques are LIME and SHAP.

Choosing Your Interpretability Method

Deciding between these methods can feel daunting, but it often comes down to your specific needs. Are you building from scratch and prioritizing simplicity, or do you need to explain an existing high-performance model? This table breaks down the key differences to help you choose.

| Method | Type | Best For | Key Advantage |

|---|---|---|---|

| Linear/Logistic Regression | Intrinsic | Simple modeling tasks where feature effects are linear and need to be directly quantified. | Easy to explain feature weights and their direct impact on outcomes. |

| Decision Trees | Intrinsic | Problems that can be broken down into a series of clear, hierarchical rules. | The model's logic is a visual flowchart that anyone can follow. |

| LIME | Post-Hoc | Quickly debugging or explaining a single, specific prediction from any complex model. | Model-agnostic and provides intuitive, local (single-prediction) explanations. |

| SHAP | Post-Hoc | Gaining a deep, mathematically-grounded understanding of feature importance, both locally and globally. | Provides consistent and fair feature contributions, backed by game theory. |

Ultimately, the choice depends on your project's goals. For regulated industries, an intrinsic model might be required. For performance-critical applications, combining a black box with SHAP is often the gold standard.

LIME: Explaining One Decision at a Time

Imagine you ask a world-renowned expert a highly complex question. They give you a brilliant, but dense, answer. You follow up with, "Okay, can you explain that again, but in really simple terms, just for my specific case?" That’s the core idea behind LIME (Local Interpretable Model-agnostic Explanations).

LIME works by explaining one prediction at a time. It takes the data point you're curious about, creates thousands of tiny variations of it, and feeds them all to the black box model to see how the predictions wiggle. It then fits a simple, interpretable model (like a basic linear regression) on top of those results to create a local approximation.

This local model serves as a simple "explainer" for the complex model's behavior right around that one specific prediction. It’s an incredibly powerful tool for debugging. A famous 2016 paper that introduced LIME showed it could explain why a neural network was classifying an image as a 'Siberian husky'. As it turned out, the model wasn't looking at the dog at all—it was keying in on the snow in the background! This revealed a dangerous shortcut the model had learned.

With over 12,000 citations by early 2024, its impact on the field is undeniable. You can dive into the foundational research and see for yourself how LIME uncovers these kinds of model flaws.

The image above is a classic LIME example. It’s explaining a text classification by highlighting which words pushed the model's prediction toward "Christianity" (in green) versus "Atheism" (in red) for this specific document. It’s a beautifully simple and local explanation.

SHAP: Assigning Credit Fairly

If LIME is about explaining a single decision, SHAP (SHapley Additive exPlanations) is about distributing the "credit" for that decision fairly among all the features. It borrows a concept from cooperative game theory—the Shapley value—to calculate the precise contribution each feature made to the final prediction.

Think of it like a project team that just won a big bonus. How do you divide it up fairly? You’d probably try to figure out how much each person contributed to the success. SHAP does exactly that for a model's features, ensuring that the "payout" (the prediction) is fairly distributed among all the "players" (the features).

This rigorous approach provides both local explanations (for one prediction) and powerful global explanations (summarizing feature importance across the entire dataset). Because of its solid theoretical foundation, SHAP has become a gold standard for explaining complex models, especially in high-stakes fields like finance and healthcare.

Of course. Here is the rewritten section, crafted to sound human-written and natural, following all the specified requirements.

How Interpretable AI Drives Real-World Results

The true test of any technology is what it can do out in the wild. While the concepts behind interpretability in machine learning are fascinating on their own, their real power shines when we see them solving tough problems, creating business value, and genuinely improving people's lives. When you move from theory to practice, you start to see how prying open the black box delivers tangible results across critical industries.

These aren't just hypotheticals. They're real stories of how explainable AI is making a difference right now. Each case follows a familiar pattern: a major business challenge, an interpretable solution that brings much-needed clarity, and a measurable, positive outcome.

Boosting Diagnostic Confidence in Healthcare

Imagine an AI model built to spot cancerous tumors in medical scans. On paper, it's a huge success, boasting 99% accuracy. But there's a catch: doctors are reluctant to use it. They can see its final prediction—"cancerous" or "benign"—but they have no idea how the model arrived at that conclusion. Did it focus on the right part of the tumor, or was it thrown off by a harmless artifact in the scan?

This is precisely where interpretability techniques become essential.

By using methods like LIME or Grad-CAM (Gradient-weighted Class Activation Mapping), a radiologist can generate a "heat map" layered directly onto the original scan. This map visually pinpoints the exact pixels the model focused on when making its call.

- The Problem: Doctors couldn't confidently act on a "black box" diagnosis. They worried the AI might be latching onto irrelevant details.

- The Interpretable Solution: With visual explanations, doctors can instantly check if the model's "reasoning" matches their own medical expertise. They can see if it correctly identified a tumor's irregular borders or suspicious microcalcifications.

- The Measurable Outcome: This transparency builds trust. When a doctor confirms the AI's logic is sound, they can move forward with a biopsy or treatment plan faster and with more confidence. The result is quicker diagnoses, lighter workloads, and, most importantly, better patient outcomes.

Ensuring Fairness and Compliance in Finance

The financial world is a minefield of regulations, especially when it comes to lending. A bank can't just reject a loan application and say, "The algorithm said no." They are legally required to provide specific, understandable reasons for that decision.

In such a high-stakes environment, black box models are a massive legal and reputational liability. In the United States, the Equal Credit Opportunity Act (ECOA) forces creditors to give clear reasons for denying credit. To handle this, financial institutions are increasingly leaning on SHAP (SHapley Additive exPlanations) to demystify their credit scoring models. Our guide on exploring SHAP for global and local interpretability takes a much deeper dive into this powerful technique.

A leading fintech company integrated SHAP values directly into its credit scoring workflow. The result? They cut their model review time by a staggering 70% and drastically improved their ability to generate compliant "adverse action notices" for customers.

This wasn't just about ticking a compliance box; it delivered a direct commercial win by making the entire process more efficient and transparent. You can learn more about the specifics of these regulatory requirements and see why interpretability is no longer a nice-to-have in finance.

Optimizing Strategy in E-Commerce

In the cutthroat world of e-commerce, understanding customer behavior is the name of the game. A company might have a complex model that predicts which products a customer will likely buy next. But knowing what they'll buy is only half the battle. The real strategic gold is in knowing why.

By applying post-hoc interpretation methods, a business can peek "under the hood" of its recommendation engine or sales prediction model to find actionable insights.

Example Use Case

- Problem: An online retailer's sales prediction model is accurate, but the marketing team is flying blind. They have no idea which product features to highlight in their campaigns.

- Solution: Using SHAP, they analyze the model's predictions across thousands of products. They discover something interesting: for a popular line of running shoes, the feature "cushioning level" has a much bigger impact on sales than "color" or even "brand recognition."

- Outcome: Armed with this specific insight, the marketing team completely retools its ad campaigns to focus heavily on the shoe's superior cushioning. This leads to a 15% increase in conversion rates for that product line, directly turning a model's insight into real revenue.

Navigating the Challenges and Limits of Interpretability

While the tools of interpretability are powerful, they aren't a silver bullet. If you're going to embrace interpretability in machine learning, you need to do it with a healthy dose of skepticism and a clear-eyed view of its limitations. Think of these methods as investigative tools, not absolute truth machines. Using them responsibly means you have to acknowledge the hurdles you'll almost certainly face.

One of the oldest and most persistent challenges is the accuracy-interpretability trade-off. It’s the classic dilemma: do you choose a highly complex but accurate black box, or a simpler, more transparent model that might not perform as well? While newer post-hoc methods try to give us the best of both worlds, that tension often remains. Opting for a simple model just for clarity could mean sacrificing predictive power that’s critical for your business.

The Risk of Misleading Explanations

Here's a more subtle danger: the explanations themselves can be flat-out wrong or misleading. By its very nature, an interpretation is a simplification of a much more complex reality. A LIME explanation, for example, gives you a local, linear approximation. That's useful, but it might completely miss the model's true non-linear behavior just a tiny step away from that specific data point.

This risk is magnified by something researchers call the "Rashomon Effect" in machine learning. It’s a scenario where many different models can hit nearly identical accuracy scores on the same data, yet give conflicting explanations for their predictions. A 2019 study drove this point home, showing that for any given problem, a whole "Rashomon set" of good models could exist. This makes the idea of finding a single "ground truth" explanation a bit of a fantasy.

It's crucial to remember that an explanation describes what the model is doing, not necessarily what it should be doing. An explanation can faithfully reveal a flawed, biased, or nonsensical logic just as easily as it can a sound one.

The Problem of Fairwashing

Another major pitfall is fairwashing. This is when interpretability tools are misused to create a false sense of fairness and accountability for a system that is fundamentally biased. An organization might wave around some SHAP plots to "prove" their model isn't discriminatory, even while the underlying data or model structure quietly perpetuates systemic biases.

- What it looks like: A bank uses an explanation to show its loan denial model doesn't use race as a feature.

- The hidden problem: The model relies heavily on ZIP codes, which are strongly correlated with race, indirectly baking in the exact bias it claims to avoid.

- The solution: Interpretability must be paired with rigorous fairness audits. The goal isn't just to explain decisions, but to scrutinize those explanations for hidden biases.

Ultimately, it’s best to treat these tools like a flashlight in a dark room, not a switch that illuminates everything at once. They help you investigate, form hypotheses, and spot potential red flags.

If you're just getting started, our practical guide to getting started with explainable AI (XAI) offers foundational steps for building more transparent systems. The key is to stay critical, question the outputs, and combine these techniques with other validation methods to build AI you can truly stand behind.

As machine learning becomes a more established part of the business world, the conversation around interpretability is getting louder. It’s a topic that brings up a lot of important questions, whether you're a data scientist deep in the code, a manager trying to understand model risk, or just someone curious about responsible AI. Let's clear up some of the most common ones.

What's the Difference Between Interpretability and Explainability?

You’ll often hear the terms "interpretability" and "explainability" used as if they mean the same thing, but there’s a subtle and important distinction.

Think of interpretability as the ability to truly understand how a model works from the inside out. It's about knowing the mechanics—every input, every weight, and how they combine to produce an outcome. If you can predict what the model will do with a given input, you have a good degree of interpretability. A simple linear regression model is a great example; you can look at the coefficients and understand exactly what's going on.

Explainability, on the other hand, is about being able to describe why a model made a specific decision in simple, human-friendly terms. It’s less about understanding the entire system and more about getting a clear, concise reason for a single prediction. For a complex model like a deep neural network, you might not understand its inner workings (low interpretability), but you can still use a tool to get an explanation for one of its decisions.

Is There a Trade-Off with Accuracy?

This is one of the oldest debates in the field. For a long time, the answer was a definite "yes." You had to choose. You could have a simple, "glass-box" model like logistic regression that was easy to understand but often couldn't capture the complex patterns a "black-box" model like a gradient boosting machine could.

But that's not really the case anymore. The rise of modern post-hoc techniques like SHAP and LIME has completely changed the game. These tools let us peel back the layers of even the most complex models and generate solid explanations.

The goal is no longer choosing between accuracy or interpretability. Instead, it’s about finding the right combination of high-performing models and robust explanatory tools to achieve both.

This shift means teams can deploy the best, most accurate model for the job without giving up the transparency needed to build trust and manage risk.

How Do I Get Started?

Jumping into interpretability can feel like a big leap, but you don't need to be a top-tier expert to get your feet wet. The key is to start small, get your hands dirty, and build your intuition from there.

Here’s a simple, practical way to begin:

- Pick a Familiar Model: Don't start with something new and complicated. Grab a model you've already built or one you're comfortable with. It could be anything from a basic classifier to something you've used in a recent project.

- Apply a Single Technique: Choose one of the popular, well-documented libraries like SHAP or LIME. Install it, and focus on just one thing: generating explanations for a few individual predictions from your model.

- Question the Output: Once you have an explanation, look at it critically. Does it make sense? Does it align with your own understanding of the data? This is where you start to build a real feel for how these tools work and what they can reveal.

By taking a hands-on approach, you'll quickly demystify the process and see for yourself how these techniques can unlock powerful insights hiding in your models.

Ready to dive deeper into the world of AI and data science? Explore expert-authored articles and in-depth analyses on DATA-NIZANT to stay ahead of the curve. Visit us today!