Navigating the Data Deluge: Essential Practices for 2025

Effective data management is crucial for success in data-intensive fields. This listicle presents eight best practices for data management in 2025, with actionable guidance to help you get more value from your data assets. You’ll learn how to implement a robust data governance framework, manage metadata effectively, establish master data management, maintain data quality, secure data and protect privacy, and manage the data lifecycle. We’ll also cover data integration and interoperability, and the implementation of DataOps. Applied together, these practices help you extract reliable insights and make better-informed decisions.

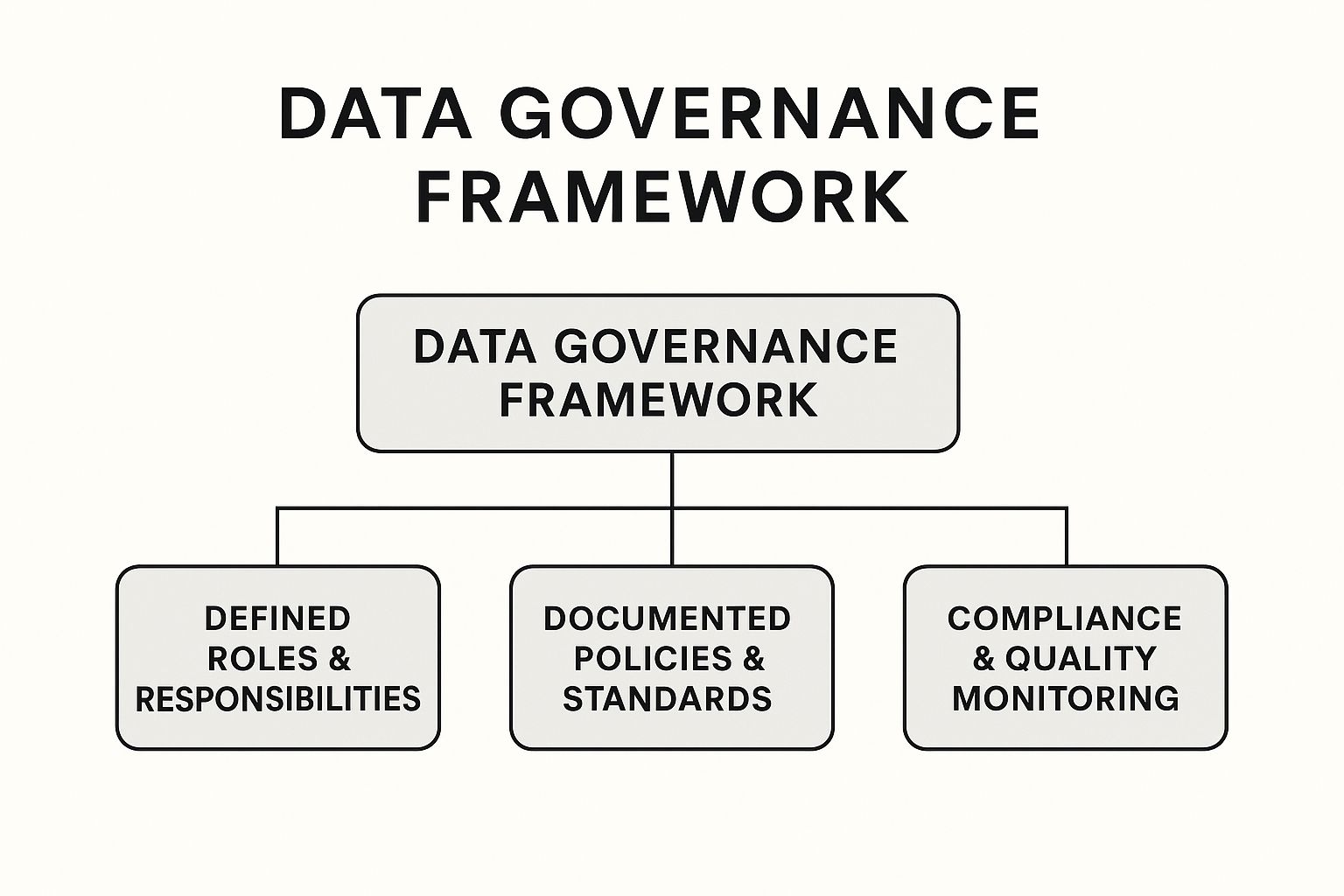

1. Data Governance Framework Implementation

Implementing a robust data governance framework is arguably the most important step in establishing sound data management. It lays the foundation for treating data as a valuable asset, ensuring its consistency, quality, security, and usability throughout its lifecycle. A data governance framework establishes clear policies, procedures, and standards for how data is collected, stored, processed, accessed, and used across an organization. It defines roles, responsibilities, and accountability for every stage of the data lifecycle, reducing ambiguity and fostering a culture of data responsibility. The result is more value from your data and lower exposure to data-related risks.

This infographic visualizes the hierarchical structure of a typical data governance framework, starting with the overarching vision and mission at the top. It then breaks down into key components like data principles, policies, standards, and procedures. These, in turn, lead to the assignment of specific roles and responsibilities (e.g., Data Stewards, Data Owners) and the implementation of control mechanisms (e.g., data quality metrics, compliance protocols). Finally, the foundation of the framework relies on effective communication and training to ensure successful adoption across the organization. The infographic emphasizes the hierarchical and interconnected nature of data governance, demonstrating how each level supports and informs the levels above and below it.

Developing a comprehensive data governance framework can be a complex undertaking, but resources like Kleene.ai’s Data Governance Framework Template provide guidance and pre-built components that can streamline the process.

A well-defined data governance framework incorporates several key features: clearly defined roles and responsibilities (such as Data Stewards, Data Owners, and Data Custodians), documented data policies and standards, established decision-making processes for data-related issues, metrics and procedures for monitoring data quality, and protocols for ensuring regulatory compliance.

Benefits of Implementing a Data Governance Framework:

- Ensures data consistency across the organization: Standardized definitions and processes minimize discrepancies and improve data reliability.

- Improves data quality and reliability: By implementing data quality checks and controls, the framework helps ensure accurate and trustworthy data for better decision-making.

- Reduces regulatory compliance risks: A robust framework helps organizations adhere to data privacy regulations and avoid costly penalties.

- Creates accountability for data management: Assigning clear roles and responsibilities ensures everyone understands their part in maintaining data quality and security.

- Facilitates better decision-making: Access to high-quality, consistent data empowers organizations to make informed decisions based on reliable insights.

Challenges of Implementing a Data Governance Framework:

- Requires significant organizational commitment: Successful implementation necessitates buy-in from all levels of the organization.

- Can be perceived as bureaucratic: Excessive processes and procedures can hinder agility and efficiency if not implemented carefully.

- Implementation may be time-consuming: Establishing a comprehensive framework requires careful planning, execution, and ongoing monitoring.

- Requires ongoing maintenance and updates: The framework needs to evolve with the changing business and regulatory landscape.

- May face resistance from employees: Change management is crucial to address resistance and foster a data-driven culture.

Real-world Success Stories:

- Microsoft’s Data Governance Council improved cross-functional collaboration by reducing data silos and establishing centralized governance.

- Nationwide Insurance saw a 25% reduction in data errors and improved compliance reporting after implementing their data governance framework.

- Wells Fargo standardized data definitions across business units through their enterprise data governance program.

Tips for Successful Implementation:

- Phased approach: Start small and gradually expand the scope of the framework rather than trying to implement everything at once.

- Executive sponsorship: Secure support from senior leadership to ensure organizational commitment and resource allocation.

- Clear metrics: Define measurable key performance indicators (KPIs) to track the effectiveness of the governance framework.

- Steering committee: Establish a cross-functional steering committee to oversee implementation and address any challenges.

- Centralized documentation: Store all policies and procedures in a readily accessible central repository for all stakeholders.
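The “clear metrics” and “centralized documentation” tips reinforce each other: once ownership and classification are recorded in one place, basic governance KPIs can be computed directly from that record. The following is a minimal Python sketch, assuming a hypothetical in-memory registry (`DatasetRecord`, `governance_kpis`, and `unowned` are illustrative names, not part of any tool); in practice the records would come from a data catalog or governance platform.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass
class DatasetRecord:
    """One entry in a hypothetical central governance registry."""
    name: str
    owner: Optional[str] = None           # accountable Data Owner
    steward: Optional[str] = None         # day-to-day Data Steward
    classification: Optional[str] = None  # e.g. "public", "internal", "restricted"

def governance_kpis(registry):
    """Share of datasets that have each governance attribute assigned."""
    total = len(registry)
    if total == 0:
        return {"owner_coverage": 0.0, "steward_coverage": 0.0, "classified": 0.0}
    return {
        "owner_coverage": sum(1 for d in registry if d.owner) / total,
        "steward_coverage": sum(1 for d in registry if d.steward) / total,
        "classified": sum(1 for d in registry if d.classification) / total,
    }

def unowned(registry):
    """Datasets with no accountable owner, for the steering committee to assign."""
    return [d.name for d in registry if not d.owner]

registry = [
    DatasetRecord("sales.orders", owner="cfo-office", steward="alice", classification="internal"),
    DatasetRecord("crm.customers", owner="cmo-office", classification="restricted"),
    DatasetRecord("web.clickstream"),
    DatasetRecord("hr.payroll", owner="chro-office", steward="bob", classification="restricted"),
]

print(governance_kpis(registry))  # {'owner_coverage': 0.75, 'steward_coverage': 0.5, 'classified': 0.75}
print(unowned(registry))          # ['web.clickstream']
```

Tracking these ratios over time gives the steering committee a simple, objective view of whether accountability is actually being assigned.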

A well-implemented data governance framework is not merely beneficial to data management; it is foundational. It is essential for organizations that rely heavily on data for decision-making, operations, and compliance. By establishing clear guidelines and processes, organizations can manage their data as a valuable asset, driving better business outcomes while mitigating risks.

2. Metadata Management

Metadata management is a crucial best practice for data management, encompassing the systematic collection, organization, and maintenance of information about your data. This “data about data” provides context and meaning, acting as a roadmap to navigate your data landscape. It includes details like definitions, data lineage (the origin and journey of data), technical attributes (data types, schemas), business context (how the data relates to business processes), and usage information (who is using the data and for what purpose). Implementing robust metadata management creates a comprehensive foundation for effective data governance, discoverability, and utilization.

Metadata management works by creating a centralized repository, often in the form of a data catalog, that stores and manages metadata. This repository allows users to search, discover, and understand data assets. Key features of a robust metadata management system include:

- Business glossary with standardized definitions: Ensures consistent understanding of data elements across the organization.

- Data lineage tracking capabilities: Provides visibility into the origin, transformations, and dependencies of data.

- Technical metadata capture: Automatically captures information about data structure, format, and quality.

- Search and discovery functionality: Allows users to quickly locate relevant data assets.

- Integration with data catalogs: Enables seamless access to metadata within a broader data governance framework.

- Versioning and change management: Tracks changes to metadata over time, ensuring accuracy and consistency.
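As a rough illustration of how a catalog combines business definitions, technical metadata, search, and lineage, here is a minimal in-memory Python sketch. `CatalogEntry` and `MetadataCatalog` are hypothetical names; production catalogs persist metadata and harvest much of it automatically. The `downstream` method shows how lineage supports impact analysis: it walks lineage edges to list every asset that would be affected by a change to a given source.

```python
from collections import deque
from dataclasses import dataclass, field

@dataclass
class CatalogEntry:
    name: str
    description: str  # business-glossary style definition
    schema: dict      # technical metadata: column name -> type
    upstream: list = field(default_factory=list)  # lineage: direct source assets

class MetadataCatalog:
    def __init__(self):
        self.entries = {}

    def register(self, entry):
        self.entries[entry.name] = entry

    def search(self, term):
        """Case-insensitive search over asset names and descriptions."""
        term = term.lower()
        return sorted(
            e.name for e in self.entries.values()
            if term in e.name.lower() or term in e.description.lower()
        )

    def downstream(self, name):
        """Impact analysis: every asset that depends on `name`, directly or transitively."""
        consumers = {}  # invert lineage edges: source -> assets built from it
        for e in self.entries.values():
            for src in e.upstream:
                consumers.setdefault(src, []).append(e.name)
        seen, queue = set(), deque([name])
        while queue:
            for child in consumers.get(queue.popleft(), []):
                if child not in seen:
                    seen.add(child)
                    queue.append(child)
        return sorted(seen)

catalog = MetadataCatalog()
catalog.register(CatalogEntry("raw.orders", "Order events from the web shop",
                              {"order_id": "int", "amount": "decimal"}))
catalog.register(CatalogEntry("clean.orders", "Deduplicated, validated orders",
                              {"order_id": "int", "amount": "decimal"}, upstream=["raw.orders"]))
catalog.register(CatalogEntry("mart.revenue_daily", "Daily revenue per region",
                              {"day": "date", "revenue": "decimal"}, upstream=["clean.orders"]))

print(catalog.search("orders"))          # ['clean.orders', 'raw.orders']
print(catalog.downstream("raw.orders"))  # ['clean.orders', 'mart.revenue_daily']
```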

Why is Metadata Management a Best Practice?

In today’s data-driven world, effective metadata management is no longer optional; it’s essential. It directly addresses the challenges of data silos, inconsistent data definitions, and the difficulty of finding and understanding relevant data. For data scientists and AI researchers, it means easier access to high-quality, trusted data for model training and analysis. For machine learning engineers, it simplifies data pipeline development and debugging. For IT leaders and architects, it streamlines data governance and compliance efforts. And for business executives, it unlocks more of the data’s potential for informed decision-making.

Examples of Successful Implementation:

- Netflix’s “Metacat”: Provides a unified metadata service across Netflix’s many data stores, making data easier to discover and trust for their recommendation engine and other data-driven applications.

- LinkedIn’s “DataHub”: Provides a centralized platform for metadata management, supporting data discovery, governance, and collaboration across the organization.

- Airbnb’s “Dataportal”: Centralizes metadata to improve data discovery and understanding, helping data analysts and scientists find and use the right data more efficiently.

Pros:

- Improves data discoverability and accessibility

- Enhances data understanding across technical and business teams

- Supports regulatory compliance through data transparency and lineage tracking

- Facilitates impact analysis for system changes

- Reduces time spent searching for data

Cons:

- Requires ongoing maintenance to remain valuable

- Can become outdated quickly without proper processes

- Initial population of metadata can be resource-intensive

- May require specialized tools and expertise

- Cultural adoption challenges within the organization

Actionable Tips for Implementation:

- Focus on high-value metadata first: Don’t try to document everything at once. Prioritize metadata for critical data assets and business processes.

- Automate metadata collection where possible: Leverage tools and technologies to automate the capture of technical metadata.

- Integrate metadata management into data pipeline processes: Embed metadata management into your existing data workflows to ensure consistency.

- Establish ownership for maintaining different types of metadata: Clearly define roles and responsibilities for metadata management.

- Create feedback mechanisms to improve metadata quality: Encourage users to provide feedback on the accuracy and completeness of metadata.

By implementing these practices, organizations can use metadata management to unlock the full potential of their data assets and support better decision-making. Tools like Informatica, Collibra, and Alation can assist in this process, and thought leaders like Alex Gorelik, author of “The Enterprise Big Data Lake,” provide valuable guidance for navigating the complexities of metadata management.

3. Master Data Management (MDM)

Master Data Management (MDM) is a crucial best practice for data management, especially for organizations dealing with large volumes of data across various systems. It provides a comprehensive method for linking all critical data to a single “master file,” creating a single source of truth and a common point of reference. This approach streamlines data management, enhances data quality, and improves overall operational efficiency, making it an essential consideration for data scientists, AI researchers, machine learning engineers, IT leaders, and business executives alike.

MDM primarily focuses on managing core business entities such as customers, products, locations, and suppliers. By consolidating and standardizing this information, MDM eliminates the inconsistencies and redundancies that often plague organizations with siloed systems. This, in turn, allows for better analytics and reporting, improves operational efficiency, and enhances customer experience through accurate and consistent data. Consider a customer who interacts with several departments within a company, each keeping its own record of that customer’s details; an address change recorded by billing may never reach support. MDM prevents this drift by maintaining a single, unified view of the customer that every department draws from.

How MDM Works:

MDM solutions typically employ several key features to achieve data consistency:

- Data Matching and Merging: Identifies and merges duplicate records across different data sources.

- Hierarchical Relationship Management: Defines and manages relationships between data entities (e.g., a customer belongs to a specific region).

- Data Quality Rules and Validation: Enforces data quality standards and validates data against pre-defined rules.

- Versioning and History Tracking: Tracks changes made to data over time, enabling audits and rollbacks.

- Integration with Operational Systems: Connects to existing systems (CRM, ERP, etc.) to synchronize master data and ensure consistency across the enterprise.
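To make matching and merging concrete, the Python sketch below groups records from several hypothetical source systems by a normalized email address and builds one “golden record” per group. Both the matching rule (exact email match) and the survivorship rule (the most recently updated non-empty value wins) are illustrative assumptions; real MDM tools typically support fuzzy matching and source-priority rules configured per domain.

```python
from dataclasses import dataclass

@dataclass
class SourceRecord:
    source: str   # originating system, e.g. "CRM" or "ERP"
    name: str
    email: str
    phone: str
    updated: str  # ISO-8601 date; string comparison sorts these chronologically

def match_key(rec):
    """Hypothetical matching rule: same normalized email means same customer."""
    return rec.email.strip().lower()

def merge(key, records):
    """Survivorship: for each attribute, keep the most recently updated non-empty value."""
    golden = {"customer_key": key}
    for rec in sorted(records, key=lambda r: r.updated):  # oldest first, newest wins
        for attr in ("name", "phone"):
            value = getattr(rec, attr).strip()
            if value:
                golden[attr] = value
    golden["sources"] = sorted({r.source for r in records})  # trail back to source systems
    return golden

def consolidate(records):
    """Group records by match key, then merge each group into one golden record."""
    groups = {}
    for rec in records:
        groups.setdefault(match_key(rec), []).append(rec)
    return [merge(key, group) for key, group in groups.items()]

records = [
    SourceRecord("CRM", "Jane Doe", "Jane.Doe@Example.com", "", "2024-01-10"),
    SourceRecord("ERP", "J. Doe", "jane.doe@example.com ", "+44 20 7946 0000", "2023-06-02"),
    SourceRecord("billing", "Raj Patel", "raj@example.com", "+1 555 0100", "2024-03-15"),
]

# Jane's CRM and ERP records collapse into one golden record; Raj's stays on its own.
for golden in consolidate(records):
    print(golden)
```

Keeping the list of contributing `sources` on each golden record preserves a trail back to the operational systems, which supports the history tracking and auditing described in this list.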

Benefits of Implementing MDM:

- Eliminates data inconsistencies: Creates a “single source of truth,” eradicating conflicting information across different systems.

- Improves operational efficiency: Streamlines business processes by providing accurate and consistent data.

- Enables better analytics and reporting: Provides reliable data for business intelligence and decision-making.

- Enhances customer experience: Ensures accurate and consistent customer data, leading to better personalized interactions.

- Supports regulatory compliance: Helps organizations meet regulatory and data governance requirements.

Challenges of Implementing MDM:

- Implementation can be expensive and complex: Requires significant investment in software, hardware, and expertise.

- Requires significant cross-departmental coordination: Success hinges on collaboration between different departments and stakeholders.

- May involve complex data migration efforts: Migrating existing data into the MDM system can be challenging and time-consuming.

- Ongoing governance is challenging: Maintaining data quality and consistency requires continuous effort and dedicated resources.

- ROI may take time to realize: The benefits of MDM may not be immediately apparent and may require time to materialize.

Real-World Examples:

Several organizations have successfully implemented MDM to improve their data management practices:

- Procter & Gamble: Standardized product data across 300+ brands, reducing time-to-market by 25%.

- Johnson & Johnson: Implemented a global MDM program that unified customer data across 60+ operating companies.

- Unilever: Standardized product information across global markets using MDM.

Tips for Successful MDM Implementation:

- Define clear business objectives: Identify the specific business problems that MDM will address.

- Start with one domain: Focus on a single domain (e.g., customer or product) before expanding to others.

- Establish data stewardship roles: Assign responsibility for data quality and maintenance.

- Implement rigorous change management processes: Ensure that changes to master data are properly managed and controlled.

- Develop matching rules appropriate to your business context: Define specific rules for identifying and merging duplicate records.

Popular MDM Solutions:

- IBM InfoSphere MDM

- Informatica MDM

- SAP Master Data Governance

MDM, as championed by experts like David Loshin (author of ‘Master Data Management’), provides a single source of truth for critical data, which improves data quality, operational efficiency, and the insights organizations can draw from their data. Implementation can be complex, but the long-term benefits often make it a worthwhile investment for organizations striving for data-driven success. MDM is particularly relevant in AI and data science, where accurate and reliable models depend on high-quality, consistent data; a well-governed master data layer gives advanced analytics and machine learning initiatives a solid foundation.

4. Data Quality Management

Data quality management is a critical component of best practices for data management. It encompasses the strategies, processes, and tools used to measure, improve, and maintain the integrity, accuracy, completeness, timeliness, consistency, and relevance of data. Essentially, it ensures your data is fit for its intended purpose, whether that’s operational efficiency, strategic decision-making, or advanced analytics. Without robust data quality management, even the most sophisticated AI and machine learning models will produce unreliable results, leading to flawed insights and potentially harmful decisions.

How Data Quality Management Works:

Data quality management is an iterative process that typically involves these key steps:

- Profiling: Understanding the current state of data quality through automated tools that analyze data characteristics, identify patterns, and flag anomalies.

- Cleansing: Correcting or removing inaccurate, incomplete, or inconsistent data using specialized tools and techniques. This includes deduplication, standardization, and validation.

- Monitoring: Continuously tracking data quality metrics and KPIs (Key Performance Indicators) to identify emerging issues and ensure ongoing compliance with established standards.

- Improvement: Implementing corrective actions and process changes to address root causes of data quality problems and prevent future occurrences. This often involves exception handling workflows and root cause analysis capabilities.
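The profiling, cleansing, and monitoring steps can be sketched on a handful of records in plain Python. The sample rows, the country standardization map, and the 0.95 completeness threshold are all assumptions chosen for illustration; dedicated data quality tools apply the same ideas at scale with richer rule sets.

```python
rows = [
    {"id": 1, "email": "a@example.com", "country": "US"},
    {"id": 2, "email": None, "country": "usa"},
    {"id": 3, "email": "b@example.com", "country": "United States"},
    {"id": 3, "email": "b@example.com", "country": "United States"},  # exact duplicate
]

def profile(rows, columns):
    """Profiling: completeness (share of non-null values) and distinct count per column."""
    report = {}
    for col in columns:
        values = [r.get(col) for r in rows]
        non_null = [v for v in values if v is not None]
        report[col] = {
            "completeness": len(non_null) / len(values) if values else 0.0,
            "distinct": len(set(non_null)),
        }
    return report

# Hypothetical standardization map; unknown spellings are left unchanged for review.
COUNTRY_MAP = {"us": "US", "usa": "US", "united states": "US"}

def cleanse(rows):
    """Cleansing: standardize country values, then drop exact duplicate rows."""
    seen, clean = set(), []
    for r in rows:
        country = COUNTRY_MAP.get(str(r["country"]).strip().lower(), r["country"])
        fixed = {**r, "country": country}
        key = tuple(sorted(fixed.items()))  # hashable fingerprint of the whole row
        if key not in seen:
            seen.add(key)
            clean.append(fixed)
    return clean

def monitor(report, thresholds):
    """Monitoring: columns whose completeness falls below an agreed threshold."""
    return [col for col, t in thresholds.items() if report[col]["completeness"] < t]

before = profile(rows, ["email", "country"])
print(before)  # email completeness 0.75; country has 3 distinct spellings
after = cleanse(rows)
print(profile(after, ["email", "country"]))
print(monitor(before, {"email": 0.95}))  # ['email']
```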

Features of Effective Data Quality Management Systems:

- Automated data profiling and monitoring: Tools that automatically assess data quality and alert stakeholders to potential issues.

- Data cleansing and standardization tools: Software solutions that automate data correction, transformation, and enrichment processes.

- Data quality metrics and KPIs: Clearly defined metrics that track data quality dimensions such as accuracy, completeness, and timeliness.

- Exception handling workflows: Processes for managing and resolving data quality exceptions in a systematic manner.

- Root cause analysis capabilities: Tools and techniques to identify the underlying causes of data quality issues.

- Continuous improvement processes: Ongoing efforts to refine and optimize data quality management practices.

Pros of Implementing Data Quality Management:

- Improved decision-making: Reliable data leads to better-informed decisions, minimizing risks and maximizing opportunities.

- Reduced operational costs: Fewer data errors mean less wasted time, effort, and resources.

- Increased user trust: High-quality data fosters trust in organizational data and analytical insights.

- Enhanced analytical insights: Accurate and consistent data enables more sophisticated and reliable analysis.

- Reduces compliance issues: Robust data quality management helps organizations comply with regulatory requirements and industry standards.

Cons of Implementing Data Quality Management:

- Requires ongoing investment and attention: Implementing and maintaining data quality initiatives require continuous effort and resources.

- Can slow down data processing pipelines: Data quality checks and cleansing steps add latency and complexity to data pipelines.

- May require specialized expertise: Managing complex data quality issues may require specialized skills and knowledge.

- Difficult to establish universal quality standards: Defining data quality standards that apply across all data sources and business units can be challenging.

- Cultural resistance to acknowledging data issues: Some organizations may be reluctant to acknowledge and address data quality problems.

Examples of Successful Implementations:

- Experian: Implemented data quality processes that reduced customer data errors by 85%, improving marketing campaign effectiveness.

- Bank of America: A data quality program saved millions by reducing payment processing errors.

- Mayo Clinic: A data quality initiative improved patient matching accuracy to 99.5%, enhancing patient safety and care coordination.

Actionable Tips for Implementing Data Quality Management:

- Establish clear data quality dimensions: Define what constitutes “quality” data for your specific business needs.

- Implement data quality checks at the point of entry: Prevent errors from entering the system in the first place.

- Create dashboards to monitor quality metrics over time: Track progress and identify emerging trends.

- Prioritize quality issues based on business impact: Focus resources on addressing the most critical data quality problems.

- Automate routine data cleansing tasks where possible: Improve efficiency and reduce manual effort.
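Checking quality at the point of entry often takes the form of a set of validation rules plus an exception queue, so that bad records are held back with a reason instead of being silently dropped. The Python sketch below assumes hypothetical fields (`customer_id`, `email`, `signup_date`) and deliberately simple rules; the email pattern checks only the basic shape of an address.

```python
import re
from datetime import date

EMAIL_RE = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")  # deliberately simple shape check

def check_required(rec):
    missing = [f for f in ("customer_id", "email", "signup_date") if not rec.get(f)]
    return f"missing fields: {missing}" if missing else None

def check_email(rec):
    email = rec.get("email")
    if not email:
        return None  # absence is reported by check_required
    return None if EMAIL_RE.match(email) else "invalid email"

def check_signup_date(rec):
    value = rec.get("signup_date")
    if not value:
        return None
    try:
        parsed = date.fromisoformat(value)
    except ValueError:
        return "signup_date is not a valid ISO-8601 date"
    return "signup_date is in the future" if parsed > date.today() else None

RULES = [check_required, check_email, check_signup_date]

def validate(rec):
    """Run every rule and collect all failures, not just the first."""
    return [msg for rule in RULES if (msg := rule(rec)) is not None]

def ingest(records):
    """Accept valid records; route invalid ones, with reasons, to an exception queue."""
    accepted, exceptions = [], []
    for rec in records:
        errors = validate(rec)
        if errors:
            exceptions.append({"record": rec, "errors": errors})
        else:
            accepted.append(rec)
    return accepted, exceptions

accepted, exceptions = ingest([
    {"customer_id": "C1", "email": "ana@example.com", "signup_date": "2024-02-01"},
    {"customer_id": "C2", "email": "not-an-email", "signup_date": "2024-02-30"},
    {"customer_id": "", "email": "li@example.com", "signup_date": "2023-11-12"},
])
for item in exceptions:
    print(item["record"].get("customer_id") or "<no id>", item["errors"])
```

Counting exceptions by error message over time also gives a natural input for the prioritization and dashboard tips above.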

Why Data Quality Management is Essential for Best Practices:

In today’s data-driven world, data quality is paramount. For data scientists, AI researchers, and machine learning engineers, high-quality data is the foundation for building accurate models and generating meaningful insights. For enterprise IT leaders and business executives, reliable data is crucial for making informed decisions and driving operational efficiency. Data quality management ensures that data is a valuable asset rather than a liability, justifying its place as a core component of best practices for data management. Ignoring data quality can lead to inaccurate analytics, flawed decision-making, lost revenue, and reputational damage. Investing in robust data quality management is an investment in the future success of any data-driven organization. Experts like Thomas Redman (“The Data Doc”) and companies like Talend and Informatica, alongside standards like ISO 8000, further emphasize the importance of data quality management within the broader context of data management.

5. Data Security and Privacy Management

Data security and privacy management is a crucial aspect of best practices for data management. It encompasses the strategies, technologies, and controls implemented to safeguard valuable data assets from unauthorized access, use, disclosure, disruption, modification, or destruction. It also ensures compliance with stringent privacy regulations by carefully managing how personal data is collected, processed, stored, and shared. For any organization handling sensitive information, robust data security and privacy management is a necessity rather than a luxury, which makes it a cornerstone of sound data management.

How it Works:

Data security and privacy management operates on several interconnected layers. It starts with understanding the data itself through data classification and cataloging. This process identifies sensitive data, categorizes it based on its level of confidentiality, and creates a comprehensive inventory. This then informs the implementation of appropriate security measures.

Access control and authentication systems restrict access to data based on predefined roles and permissions, ensuring that only authorized individuals can view or manipulate specific datasets. Encryption, both for data at rest and in transit, renders data unreadable without the corresponding keys, so stolen storage media or intercepted network traffic does not expose its contents. Data masking and anonymization add further protection by obscuring sensitive information while preserving the data’s utility for analysis and research. Audit logging and monitoring provide a record of all data access and modifications, enabling the detection of suspicious activity. Finally, privacy impact assessments evaluate the potential privacy risks of new projects or systems, and data breach response protocols outline the steps to be taken in the event of a security incident.
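Classification, role-based access control, and audit logging fit together roughly as in this minimal Python sketch. The roles, classification levels, and dataset names are hypothetical, and a real deployment would enforce these checks in the database, identity provider, or data platform rather than in application code. Note the default-deny behavior: unknown roles and unclassified datasets get no access, which is one way to apply the principle of least privilege.

```python
from datetime import datetime, timezone

# Hypothetical role -> allowed (classification, action) pairs; anything not listed is denied.
ROLE_PERMISSIONS = {
    "analyst": {("internal", "read")},
    "engineer": {("internal", "read"), ("internal", "write")},
    "privacy_officer": {("internal", "read"), ("restricted", "read")},
}

# Output of the classification step: dataset -> sensitivity level.
DATASET_CLASSIFICATION = {"sales.orders": "internal", "hr.payroll": "restricted"}

# In production this would be an append-only, tamper-evident store, not a Python list.
AUDIT_LOG = []

def authorize(user, role, dataset, action):
    """Role-based access check; every decision, allowed or denied, is logged."""
    level = DATASET_CLASSIFICATION.get(dataset)  # unclassified datasets are denied
    allowed = level is not None and (level, action) in ROLE_PERMISSIONS.get(role, set())
    AUDIT_LOG.append({
        "ts": datetime.now(timezone.utc).isoformat(),
        "user": user, "role": role, "dataset": dataset,
        "action": action, "allowed": allowed,
    })
    return allowed

print(authorize("maria", "analyst", "sales.orders", "read"))       # True
print(authorize("maria", "analyst", "hr.payroll", "read"))         # False
print(authorize("omar", "privacy_officer", "hr.payroll", "read"))  # True
print(sum(not e["allowed"] for e in AUDIT_LOG), "denied request(s) to review")
```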

Features and Benefits:

- Data classification and cataloging: Provides a clear overview of data assets and their sensitivity levels.

- Access control and authentication systems: Restrict data access to authorized personnel only.

- Encryption for data at rest and in transit: Keeps data unreadable without the keys if storage is stolen or traffic is intercepted.

- Data masking and anonymization: Enables safe data sharing and analysis while preserving privacy.

- Audit logging and monitoring: Tracks data access and modifications for security analysis.

- Privacy impact assessments: Proactively identify and mitigate privacy risks.

- Data breach response protocols: Minimize the impact of security incidents.
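Masking and pseudonymization can be illustrated with Python’s standard library. The hard-coded key below is a placeholder assumption; in practice the key would live in a key-management service. Keyed hashing (HMAC) yields stable tokens that still support joins and counts, but it is pseudonymization rather than true anonymization, since anyone holding the key can recompute tokens for known values.

```python
import hashlib
import hmac

# Hypothetical key; a real one would come from a key-management service, never from source code.
PSEUDONYM_KEY = b"replace-with-a-managed-secret"

def mask_email(email):
    """Masking: keep the first character and the domain, hide the rest of the local part."""
    local, _, domain = email.partition("@")
    return local[:1] + "*" * max(len(local) - 1, 0) + "@" + domain

def mask_card(number):
    """Masking: show only the last four digits of a card number."""
    digits = [c for c in number if c.isdigit()]
    return "*" * max(len(digits) - 4, 0) + "".join(digits[-4:])

def pseudonymize(value):
    """Keyed hashing (HMAC-SHA256), truncated to 16 hex characters for readability.
    Equal inputs give equal tokens; without the key, tokens cannot be recomputed
    from guessed values."""
    return hmac.new(PSEUDONYM_KEY, value.encode("utf-8"), hashlib.sha256).hexdigest()[:16]

print(mask_email("jane.doe@example.com"))  # j*******@example.com
print(mask_card("4111 1111 1111 1111"))    # ************1111
print(pseudonymize("jane.doe@example.com") == pseudonymize("jane.doe@example.com"))  # True
```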

Pros:

- Protects against data breaches and associated costs: A robust security posture significantly reduces the risk of costly data breaches.

- Ensures regulatory compliance (GDPR, CCPA, HIPAA, etc.): Adhering to data privacy regulations avoids hefty fines and legal repercussions.

- Builds customer trust and brand reputation: Demonstrating a commitment to data security enhances customer trust and strengthens brand image.

- Reduces legal and financial risks: Proactive security measures mitigate potential legal liabilities and financial losses.

- Enables safe data sharing and collaboration: Secure data management practices facilitate safe and efficient data sharing within and across organizations.

Cons:

- Can create friction in data accessibility: Strict security measures can sometimes impede data access for legitimate users.

- Requires continuous updating to address new threats: The cybersecurity landscape is constantly evolving, necessitating continuous updates and improvements to security systems.

- May involve complex implementation across legacy systems: Integrating security measures into existing legacy systems can be challenging.

- Balancing security with usability is challenging: Finding the right balance between robust security and seamless user experience requires careful consideration.

- Compliance requirements vary by jurisdiction: Navigating diverse and evolving data privacy regulations across different regions can be complex.

Examples of Successful Implementation:

- Capital One: Implemented a comprehensive data security framework encompassing encryption and access controls, significantly reducing vulnerability exposure.

- Apple: Utilizes differential privacy techniques to collect analytics data while preserving user privacy.

- Salesforce: Offers the Shield platform, providing field-level encryption and monitoring for sensitive data.

Actionable Tips:

- Implement the principle of least privilege: Grant users only the minimum level of access required to perform their tasks.

- Conduct regular security assessments and penetration testing: Identify vulnerabilities and weaknesses in your security posture.

- Create data handling procedures specific to data sensitivity levels: Tailor data handling procedures to the specific risks associated with different data types.

- Train employees regularly on security awareness: Educate employees about best practices for data security and privacy.

- Implement privacy by design in all new data initiatives: Integrate privacy considerations into every stage of the data lifecycle from the outset.

When and Why to Use This Approach:

Data security and privacy management is essential for any organization that collects, stores, or processes data, particularly sensitive or personal information. It is a continuous process that must be integrated into every aspect of data management. The benefits of robust data security and privacy management generally outweigh the costs, protecting organizations from financial losses, legal liabilities, and reputational damage.

Popularized By:

- Ann Cavoukian (creator of Privacy by Design framework)

- Bruce Schneier (security technologist)

- International Association of Privacy Professionals (IAPP)

- Cloud Security Alliance

6. Data Lifecycle Management

Data Lifecycle Management (DLM) is a crucial best practice for data management, offering a comprehensive, policy-based approach to governing data throughout its entire existence. From the moment data is created or acquired to its eventual archival or deletion, DLM provides a structured framework for maximizing data value while minimizing risks and costs. This makes it an essential consideration for data scientists, AI researchers, machine learning practitioners, IT leaders, and anyone working with data in a professional capacity.

DLM works by implementing a set of policies, processes, and tools that dictate how data is handled at each stage of its lifecycle. These stages typically include creation/acquisition, active use, archiving, and deletion. This structured approach ensures data is readily available and optimized for performance when needed, while also reducing storage costs and ensuring compliance with regulatory requirements for data retention and disposal.

Features of a robust DLM system include:

- Data creation and acquisition controls: Establishing standards and procedures for how data is initially collected and entered into the system. This ensures data quality from the outset.

- Storage optimization strategies: Implementing tiered storage solutions where frequently accessed data resides on faster, more expensive storage, while less frequently accessed data is moved to slower, more cost-effective options.

- Information lifecycle policies: Defining clear rules for how long data is retained, when it is archived, and when it is deleted based on business value, legal obligations, and other relevant factors.

- Automated archiving processes: Automating the movement of data to archival storage based on predefined criteria.

- Compliant data deletion procedures: Securely and permanently deleting data according to regulatory requirements and internal policies.

- Retention schedule management: Maintaining a comprehensive schedule of data retention periods and ensuring adherence to it.

- Version control systems: Tracking different versions of data and enabling rollback to previous versions if necessary.
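A lifecycle policy ultimately reduces to a rule that maps each object’s age, last access time, and category to an action. The Python sketch below assumes a hypothetical retention schedule and tiering thresholds (30 and 180 days); real values should come from the retention schedule agreed with legal and compliance teams. Objects under legal hold are never deleted, and objects in categories without a retention entry are never deleted automatically, which errs on the side of keeping data until a policy exists.

```python
from dataclasses import dataclass
from datetime import date

@dataclass
class DataObject:
    name: str
    category: str         # drives the retention schedule
    created: date
    last_accessed: date
    legal_hold: bool = False

# Hypothetical retention schedule in days; real periods come from legal and compliance.
RETENTION_DAYS = {"web_logs": 365, "invoices": 365 * 7}
HOT_DAYS = 30        # accessed within this window -> keep on fast storage
ARCHIVE_DAYS = 180   # idle at least this long -> move to archival storage

def lifecycle_action(obj, today):
    """Return 'delete', 'hot', 'warm', or 'archive' for one object."""
    age = (today - obj.created).days
    idle = (today - obj.last_accessed).days
    retention = RETENTION_DAYS.get(obj.category)  # None -> no automatic deletion
    if retention is not None and age > retention and not obj.legal_hold:
        return "delete"
    if idle <= HOT_DAYS:
        return "hot"
    if idle < ARCHIVE_DAYS:
        return "warm"
    return "archive"

today = date(2025, 6, 1)
objects = [
    DataObject("clicks-2024-01.parquet", "web_logs", date(2024, 1, 1), date(2024, 2, 1)),
    DataObject("clicks-2023-12.parquet", "web_logs", date(2023, 12, 1), date(2023, 12, 2), legal_hold=True),
    DataObject("invoice-8841.pdf", "invoices", date(2022, 3, 1), date(2024, 9, 1)),
    DataObject("invoice-9001.pdf", "invoices", date(2025, 5, 20), date(2025, 5, 28)),
    DataObject("invoice-9002.pdf", "invoices", date(2025, 1, 15), date(2025, 3, 1)),
]
for obj in objects:
    print(obj.name, "->", lifecycle_action(obj, today))
```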

Pros of implementing DLM:

- Reduced storage costs: Through proper tiering and deletion of obsolete data.

- Improved system performance: By optimizing active data storage and access.

- Ensures regulatory compliance: With data retention and deletion requirements.

- Minimizes risk: By eliminating unnecessary data and reducing the potential for data breaches.

- Provides historical context when needed: Through well-managed archives.

Cons of implementing DLM:

- Requires coordination across multiple departments: Successful DLM requires buy-in and collaboration from IT, legal, compliance, and business units.

- Legacy systems may not support automated lifecycle management: Integrating DLM with older systems can be challenging.

- Determining appropriate retention periods can be complex: Balancing business needs, legal obligations, and storage costs requires careful consideration.

- May require significant policy development: Creating comprehensive DLM policies can be time-consuming.

- Cultural resistance to data deletion: Some organizations may be hesitant to delete data, even when it’s no longer needed.

Examples of Successful DLM Implementation:

- Pfizer: Implemented a DLM strategy that reduced storage costs by 30% while improving compliance with pharmaceutical regulations.

- HSBC: Implemented automated data archiving and deletion, reducing storage costs by millions annually.

- Amazon S3 Lifecycle configuration: Provides a built-in mechanism for automatically transitioning data between storage tiers and expiring objects after a specified period.
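For Amazon S3, a tiering-and-expiry policy is expressed as a lifecycle configuration. The sketch below builds one in Python; the `logs/` prefix, the day counts, and the choice of storage classes are assumptions for illustration. The structure follows the shape accepted by boto3’s `put_bucket_lifecycle_configuration`, which is shown only in a comment so the snippet runs without AWS credentials.

```python
import json

# One rule: move objects under logs/ to infrequent-access storage after 30 days,
# to Glacier after 90 days, and delete them after 365 days (illustrative values).
lifecycle_configuration = {
    "Rules": [
        {
            "ID": "tier-and-expire-logs",
            "Filter": {"Prefix": "logs/"},
            "Status": "Enabled",
            "Transitions": [
                {"Days": 30, "StorageClass": "STANDARD_IA"},
                {"Days": 90, "StorageClass": "GLACIER"},
            ],
            "Expiration": {"Days": 365},
        }
    ]
}

print(json.dumps(lifecycle_configuration, indent=2))

# With boto3 installed and credentials configured, the same dict could be applied with:
#   boto3.client("s3").put_bucket_lifecycle_configuration(
#       Bucket="example-bucket", LifecycleConfiguration=lifecycle_configuration)
```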

Tips for Implementing DLM:

- Classify data based on business value and compliance requirements: This helps determine appropriate retention periods and storage tiers.

- Implement automated lifecycle actions where possible: Automation streamlines the process and reduces the risk of human error.

- Create clear retention schedules with legal and compliance teams: Ensure that retention policies align with all applicable regulations.

- Document justifications for retention decisions: This provides transparency and helps defend data management practices in the event of an audit.

- Regularly audit and test recovery of archived data: This ensures data integrity and accessibility.

DLM earns its place among data management best practices because it covers data from creation to disposal. Data is treated as an asset while it is useful, moved to cheaper storage as its value declines, and disposed of responsibly when it is no longer needed. Organizations that adopt DLM are better positioned to get value from their data while mitigating risks and controlling costs. Implementation has real challenges, but the long-term benefits make it a worthwhile investment for any organization seeking to optimize its data management strategy. The SNIA (Storage Networking Industry Association) and its Storage Management Initiative (SMI), Iron Mountain, and ARMA International provide further resources and guidance on DLM best practices.

7. Data Integration and Interoperability

Data integration and interoperability is a crucial best practice for data management, focusing on combining data residing in disparate sources and ensuring that different systems can effectively exchange and utilize this unified data. This practice sits at the heart of modern data-driven organizations, enabling them to derive actionable insights and make informed decisions. It encompasses a range of techniques, including Extract, Transform, Load (ETL) processes, API management, data virtualization, and establishing robust communication channels between applications, databases, and various platforms. Effective data integration breaks down data silos and creates a cohesive data ecosystem, essential for maximizing the value of your data assets.

This approach involves several key features, including ETL and ELT (Extract, Load, Transform) processing capabilities to handle data ingestion and transformation. Real-time integration options are essential for applications requiring up-to-the-minute data, while API management and development facilitate seamless communication between systems. Data virtualization platforms provide abstracted views of data without requiring physical centralization, and enterprise service bus (ESB) architectures offer a standardized approach for integrating diverse applications. Canonical data models ensure consistency across the integrated data landscape, and schema mapping tools help resolve structural differences between data sources.
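Canonical data models and schema mapping are easiest to understand side by side. This sketch assumes two hypothetical sources, a CRM export and an e-commerce API, that describe the same customer with different field names, name formats, and timestamp conventions. Each source gets its own mapping function into one canonical `Customer` record. Downstream consumers then depend on a single schema rather than on every source's quirks. All field names here are illustrative.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class Customer:
    """Hypothetical canonical customer record shared by all integrated systems."""
    customer_id: str
    full_name: str
    email: str
    signed_up: datetime  # always timezone-aware UTC


def from_crm(row: dict) -> Customer:
    # Hypothetical CRM export: cust_id, name, email_addr, created (ISO 8601 with UTC offset).
    return Customer(
        customer_id=f"crm:{row['cust_id']}",
        full_name=row["name"].strip(),
        email=row["email_addr"].strip().lower(),
        signed_up=datetime.fromisoformat(row["created"]).astimezone(timezone.utc),
    )


def from_shop_api(record: dict) -> Customer:
    # Hypothetical e-commerce payload: customerId, firstName, lastName, email, createdAt (Unix seconds).
    return Customer(
        customer_id=f"shop:{record['customerId']}",
        full_name=f"{record['firstName']} {record['lastName']}".strip(),
        email=record["email"].strip().lower(),
        signed_up=datetime.fromtimestamp(record["createdAt"], tz=timezone.utc),
    )


if __name__ == "__main__":
    crm_row = {"cust_id": 42, "name": " Ada Lovelace ", "email_addr": "ADA@Example.com",
               "created": "2024-03-05T10:00:00+01:00"}
    shop_record = {"customerId": "A-7", "firstName": "Ada", "lastName": "Lovelace",
                   "email": "ada@example.com", "createdAt": 1709629200}
    for customer in (from_crm(crm_row), from_shop_api(shop_record)):
        print(customer)
```

After mapping, both records agree on name, email, and sign-up instant even though the raw inputs differ. The prefixed IDs stay distinct, so linking them to one real-world customer is left to a matching step such as Master Data Management.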

Why is Data Integration and Interoperability a Best Practice for Data Management?

In today’s interconnected world, data rarely resides in a single location. For data scientists, AI researchers, and machine learning engineers, accessing and analyzing data from multiple sources is crucial for building accurate and robust models. For enterprise IT leaders and infrastructure architects, efficient data integration simplifies data management and reduces redundancy. Technology strategists and business executives benefit from a unified view of enterprise data, facilitating better decision-making. Students and academics in AI and data science rely on integrated data for research and learning purposes. Data integration and interoperability directly addresses these needs by providing a consolidated and accessible data environment.

Benefits and Drawbacks:

Pros:

- Creates a unified view of enterprise data: Enables holistic analysis and reporting.

- Enables real-time data sharing between systems: Supports timely insights and automated processes.

- Reduces data silos and redundancy: Improves data quality and reduces storage costs.

- Improves business process efficiency: Streamlines workflows and reduces manual data handling.

- Facilitates faster application development: Provides readily available and integrated data for new applications.

Cons:

- Can create complex dependencies between systems: Requires careful planning and management.

- Performance challenges with large-scale integrations: Necessitates efficient architecture and optimization.

- Maintaining integrations requires ongoing resources: Dedicated teams or managed services may be required.

- Data sovereignty issues in cross-border integration: Compliance with regulations is crucial.

- Semantic differences can complicate mapping: Requires careful consideration of data definitions.

Examples of Successful Implementation:

- Airbnb: Their data integration platform processes over 500 billion events per day, enabling real-time analytics for pricing, personalized recommendations, and fraud detection.

- Kaiser Permanente: Their health information exchange integrates patient data across hundreds of facilities, improving care coordination and patient outcomes.

- Walmart: Their data integration framework combines in-store and online data for unified customer analytics, driving personalized marketing and inventory management.

Actionable Tips:

- Implement API-first strategies for new applications: Promotes interoperability and reusability.

- Create a canonical data model for consistent integration: Ensures data consistency and simplifies mapping.

- Consider cloud-based integration platforms for scalability: Leverage the elasticity and cost-effectiveness of the cloud.

- Develop clear error handling and monitoring protocols: Ensures data integrity and system stability.

- Document all integration points and dependencies: Facilitates maintenance and troubleshooting.
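For the error-handling tip, one common pattern retries transient failures with exponential backoff. Records that still fail are diverted to a dead-letter queue for inspection, and each outcome is counted for monitoring. This is a minimal in-process sketch. The `deliver` callable, the `TransientError` class, the choice to treat `ValueError` as non-retryable, and the retry limits are all hypothetical stand-ins for a real target system and its client library.

```python
import time
from collections import Counter
from typing import Callable, Iterable


class TransientError(Exception):
    """Hypothetical error type a target system raises for retryable failures (e.g. timeouts)."""


def sync_records(
    records: Iterable[dict],
    deliver: Callable[[dict], None],
    max_attempts: int = 3,
    base_delay: float = 0.5,
) -> tuple[Counter, list[dict]]:
    """Push records to a target system; return outcome counts and a dead-letter list."""
    stats: Counter = Counter()
    dead_letters: list[dict] = []
    for record in records:
        for attempt in range(1, max_attempts + 1):
            try:
                deliver(record)
                stats["delivered"] += 1
                break
            except TransientError as exc:
                if attempt == max_attempts:
                    # Out of attempts: keep the record and the reason for later inspection.
                    dead_letters.append({"record": record, "error": str(exc)})
                    stats["dead_lettered"] += 1
                else:
                    stats["retries"] += 1
                    # Exponential backoff: base_delay, 2 * base_delay, 4 * base_delay, ...
                    time.sleep(base_delay * 2 ** (attempt - 1))
            except ValueError as exc:
                # Treated as non-retryable (e.g. a schema violation): dead-letter at once.
                dead_letters.append({"record": record, "error": str(exc)})
                stats["dead_lettered"] += 1
                break
    return stats, dead_letters


if __name__ == "__main__":
    calls: Counter = Counter()

    def flaky_deliver(record: dict) -> None:
        # Hypothetical target: rejects records without an "id", times out on each first attempt.
        if "id" not in record:
            raise ValueError("missing id")
        calls[record["id"]] += 1
        if calls[record["id"]] == 1:
            raise TransientError("timeout")

    stats, dlq = sync_records([{"id": 1}, {"id": 2}, {"name": "no id"}], flaky_deliver, base_delay=0.01)
    print(dict(stats), dlq)
```

In production, the dead-letter list would typically be a durable queue or table. The counts would be exported to a monitoring system so that a rising retry or dead-letter rate triggers an alert.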

Key Players and Technologies: Platforms such as Boomi (formerly owned by Dell), MuleSoft (acquired by Salesforce), and Informatica provide comprehensive data integration solutions. Apache Kafka is widely used for real-time data streaming. The Open Group's TOGAF enterprise architecture framework provides architectural guidance relevant to enterprise integration. By applying these practices with the right technologies, organizations can unlock more of their data's value and gain a significant competitive advantage.

8. DataOps Implementation

Among data management best practices, DataOps is a crucial component for organizations that want to harness the full potential of their data. DataOps is a collaborative data management practice that improves the communication, integration, and automation of data flows between data managers and data consumers across an organization. Borrowing principles from DevOps, it combines agile development, data integration, and quality assurance to make analytics faster and more reliable while safeguarding data quality. It belongs among these best practices because it streamlines data pipelines, strengthens collaboration, and shortens time to insight.

How DataOps Works:

DataOps operates on the principle of creating a continuous, automated cycle for data operations. This involves automating data ingestion, transformation, testing, and deployment, much like DevOps automates software delivery. Key features of a robust DataOps implementation include:

- Automated Testing for Data Pipelines: This ensures data quality at every stage of the pipeline, catching errors early and preventing them from impacting downstream processes.

- Version Control for Data and Code: Similar to software development, version control allows for tracking changes, rolling back to previous versions, and facilitating collaboration among team members.

- CI/CD for Data Workflows: Continuous integration and continuous delivery practices automate the deployment of data pipelines and ensure that changes are rolled out smoothly and frequently.

- Infrastructure as Code (IaC): IaC enables managing and provisioning infrastructure through code, allowing for reproducibility, scalability, and version control of the data environment.

- Monitoring and Observability Tools: These provide real-time visibility into the performance and health of data pipelines, enabling proactive identification and resolution of issues.

- Self-Service Data Platforms: Empower data consumers with easy access to data and tools while maintaining appropriate governance and security measures.

- Collaborative Development Environments: Foster collaboration and communication among data engineers, scientists, analysts, and business users.
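To illustrate automated pipeline testing, this minimal sketch pairs a small transformation with two kinds of checks. A unit test verifies the transformation logic, and data-quality assertions validate its output. The `orders` fields, the quality rules (unique IDs, non-negative amounts, known currencies), and the function names are hypothetical. In practice, checks like these would run in the CI/CD pipeline on every change. Dedicated tools such as dbt tests or Great Expectations serve the same purpose at scale.

```python
from decimal import Decimal

KNOWN_CURRENCIES = {"USD", "EUR", "GBP"}  # hypothetical reference data


def normalize_orders(raw: list[dict]) -> list[dict]:
    """Transformation step: string IDs, Decimal amounts, upper-case currency codes."""
    return [
        {
            "order_id": str(r["order_id"]),
            "amount": Decimal(str(r["amount"])),
            "currency": r["currency"].strip().upper(),
        }
        for r in raw
    ]


def quality_violations(rows: list[dict]) -> list[str]:
    """Data-quality checks intended to run on the output of every pipeline execution."""
    problems = []
    ids = [r["order_id"] for r in rows]
    if len(ids) != len(set(ids)):
        problems.append("duplicate order_id values")
    for r in rows:
        if r["amount"] < 0:
            problems.append(f"negative amount in order {r['order_id']}")
        if r["currency"] not in KNOWN_CURRENCIES:
            problems.append(f"unknown currency {r['currency']!r} in order {r['order_id']}")
    return problems


def test_normalize_orders() -> None:
    # Unit test: checks the transformation logic on a tiny, fixed input.
    out = normalize_orders([{"order_id": 7, "amount": "19.99", "currency": " usd "}])
    assert out == [{"order_id": "7", "amount": Decimal("19.99"), "currency": "USD"}]


if __name__ == "__main__":
    test_normalize_orders()
    batch = normalize_orders([
        {"order_id": 1, "amount": "10.00", "currency": "eur"},
        {"order_id": 1, "amount": "-5", "currency": "JPY"},
    ])
    issues = quality_violations(batch)
    # A CI job or orchestrator would fail the run when any issue is reported.
    print("FAILED" if issues else "PASSED", issues)
```

The unit test guards the code, and the quality checks guard the data. A pipeline can pass the first and still fail the second when an upstream source changes.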

Why DataOps is a Best Practice:

DataOps addresses several critical challenges in modern data management. Automation reduces manual processes and delays, accelerating time to insight. Automated testing and monitoring catch errors in data pipelines early, leading to more reliable analytics. Shared workflows break down silos between teams and build a common understanding of data, which in turn increases the reuse of data processes and saves time and resources. Finally, DataOps makes organizations more agile, allowing them to adapt quickly to changing business needs and market conditions and to onboard new data sources.

Pros and Cons:

Pros:

- Faster time to insight

- Reduced errors in data pipelines

- Improved collaboration

- Increased reusability

- Agile responses to business needs

Cons:

- Requires significant cultural and process changes

- May necessitate new tools and infrastructure

- Cross-functional coordination challenges

- Balancing agility with governance

- Complex implementation in large organizations

Examples of Successful Implementation:

- Netflix: Processes 450+ billion events daily using DataOps principles, incorporating robust testing and monitoring into their data pipelines.

- Facebook: Employs data engineering practices rooted in DataOps, enabling rapid experimentation while maintaining data quality.

- Capital One: Implemented a DataOps program that dramatically reduced analytics delivery time from months to days.

Actionable Tips for Implementation:

- Start with a Pilot Project: Demonstrate the value of DataOps by starting with a small, well-defined project.

- Automated Testing at Multiple Levels: Implement unit, integration, and data quality tests to ensure comprehensive coverage.

- Comprehensive Monitoring: Monitor both technical and business metrics to gain a holistic view of data pipeline performance.

- Cross-Functional Teams: Create teams with both technical and domain experts to foster collaboration and shared understanding.

- Self-Service with Guardrails: Empower users with self-service access to data while implementing appropriate governance and security measures.
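The monitoring tip suggests tracking business metrics alongside technical ones. A minimal way to express this is a set of threshold checks evaluated after each pipeline run. The metric names (`duration_s`, `rows_loaded`, `null_rate`, `daily_revenue`) and their thresholds are hypothetical. In a real deployment, the alerts would feed an alerting or observability system rather than being printed.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Check:
    metric: str
    kind: str  # "technical" or "business"
    min_value: Optional[float] = None
    max_value: Optional[float] = None


# Hypothetical thresholds for one daily sales pipeline.
CHECKS = (
    Check("duration_s", "technical", max_value=1800),
    Check("rows_loaded", "technical", min_value=10_000),
    Check("null_rate", "technical", max_value=0.01),
    Check("daily_revenue", "business", min_value=50_000, max_value=500_000),
)


def evaluate_run(metrics: dict[str, float], checks: tuple[Check, ...] = CHECKS) -> list[str]:
    """Return one alert message per missing metric or breached threshold."""
    alerts = []
    for check in checks:
        value = metrics.get(check.metric)
        if value is None:
            alerts.append(f"[{check.kind}] {check.metric} was not reported")
        elif check.min_value is not None and value < check.min_value:
            alerts.append(f"[{check.kind}] {check.metric}={value} below {check.min_value}")
        elif check.max_value is not None and value > check.max_value:
            alerts.append(f"[{check.kind}] {check.metric}={value} above {check.max_value}")
    return alerts


if __name__ == "__main__":
    run = {"duration_s": 640, "rows_loaded": 48_210, "null_rate": 0.002, "daily_revenue": 12_400}
    for alert in evaluate_run(run):
        print(alert)
```

In this sample run, every technical check passes, yet revenue falls far below its floor. Purely technical monitoring would miss this kind of anomaly, which might indicate, for example, an upstream source that silently stopped sending data.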

Popularized By: Andy Palmer (co-founder of Tamr), Christopher Bergh (DataKitchen), Lenny Liebmann (early proponent), DataOps Manifesto, Cloudera

By incorporating these best practices and addressing the associated challenges, organizations can leverage DataOps to unlock the full potential of their data, driving better decision-making and achieving a competitive advantage.

Top 8 Data Management Practices Comparison

| Practice | Implementation Complexity 🔄 | Resource Requirements ⚡ | Expected Outcomes 📊 | Ideal Use Cases 💡 | Key Advantages ⭐ |
|---|---|---|---|---|---|
| Data Governance Framework Implementation | High – requires organizational commitment and cross-functional coordination | High – time-consuming setup and ongoing maintenance | Improved data quality, compliance, accountability | Organizations needing centralized data policies and compliance | Ensures data consistency, reduces compliance risks |
| Metadata Management | Medium – requires continuous updates and specialized tools | Medium – resource-intensive setup, then ongoing effort | Enhanced data discoverability and transparency | Enterprises aiming for better data understanding and lineage | Improves data accessibility, supports impact analysis |
| Master Data Management (MDM) | High – complex, expensive, cross-departmental effort | High – significant coordination and technical integration | Consistent, accurate master data across systems | Businesses needing a single source of truth for key entities | Eliminates inconsistencies, improves analytics and operations |
| Data Quality Management | Medium – ongoing investment and expertise needed | Medium – requires specialized tools and sustained attention | Reliable, accurate data for decision-making | Organizations focused on improving data trust and reducing errors | Enhances decision-making, reduces operational costs |
| Data Security and Privacy Management | High – complex, ongoing updates, balancing protection with usability | High – technology, training, and compliance efforts | Protected data, regulatory compliance, customer trust | Organizations handling sensitive or regulated data | Protects against breaches, builds trust |
| Data Lifecycle Management | Medium to High – requires multi-department coordination | Medium – policy development and automation tools | Optimized storage, compliance, risk reduction | Organizations managing data retention and archival policies | Reduces costs, improves compliance |
| Data Integration and Interoperability | High – complex system dependencies and performance tuning | High – continuous resource commitment | Unified data view and real-time sharing | Enterprises integrating diverse systems and data sources | Reduces silos, improves process efficiency |
| DataOps Implementation | High – cultural shift, new tooling, cross-team collaboration | High – infrastructure and automation investments | Faster, more reliable analytics with better collaboration | Organizations seeking agile, automated data workflows | Accelerates insights, reduces errors, improves agility |

Data Excellence: A Continuous Journey

Implementing the best practices outlined in this article is not a destination but a continuous journey toward data excellence. Each element plays a vital role in unlocking the value of your data: a robust data governance framework, metadata management, data quality, security and privacy, and effective DataOps. Prioritizing Master Data Management (MDM) and understanding the complexities of data lifecycle management give organizations a strong foundation for data integration and interoperability. Mastering these practices helps data scientists, AI researchers, machine learning engineers, and business leaders make informed decisions, drive innovation, and achieve meaningful business outcomes. Taken together, they keep your data a valuable asset and a source of competitive advantage in a data-driven world.

The evolving data landscape demands continuous adaptation and refinement of your data management strategies. To stay ahead of the curve and maximize the value of your data initiatives in 2025 and beyond, explore DATA-NIZANT. DATA-NIZANT provides cutting-edge tools and resources to empower your organization in implementing these best practices for data management, ensuring data quality, security, and governance. Visit DATA-NIZANT today to discover how we can help you achieve data excellence.